AI will replace people, because most people aren't great at their job

A reality check about the end of the (middle-class) worker

The equation that governed professional careers for half a century was elegantly simple: a mediocre accountant is better than no accountant. A mediocre designer is better than no design. Being “better than nothing” was sufficient to secure a stable middle-class knowledge work career.

That equation just broke.

Large language models now provide what I call ‘60% good’ work as a baseline. I have no studies or empirical research to support that figure; it is an illustration. The number is arbitrary, but not meaningless: ‘60% good’ means good enough, and it also means above average. More critically, most stakeholders cannot reliably distinguish between 60% and 100% output quality. Being “better than nothing” has lost its economic value. You now need to be demonstrably, obviously better than free and instant.

It’s not new, but it’s worse

Thirteen years ago, I encountered a French academic paper that stayed with me: Pierre Braun’s “Tout le monde est-il graphiste?” / Is Everyone a Graphic Designer? Written in 2012, it documented what happened when Microsoft added WordArt to Word.

Suddenly, millions of non-designers could create “professional” graphics for church bulletins, school newsletters, event flyers. I had just graduated from one of the best European design schools, and I remember this paper having its moment among French professionals. Like my peers, I remember laughing at all these delicious everyday examples of bad graphic design. So much so that I still think of it today when I see a circus poster glued to an underpass pillar. Braun interviewed these amateur designers and documented their work, work that they genuinely believed was professional-level.

The pattern was: if commodity design got handled by amateurs, then only truly distinctive professional designers survive. The middle, competent but not exceptional, struggled or disappeared.

That was one feature, in one application, affecting one profession, maybe a few thousand contract jobs globally over a decade.

What’s happening now is the same pattern at internet scale, across every knowledge work profession simultaneously, compressed into months rather than years. To understand the mechanics of this transition, let’s examine design. Not because designers face unique circumstances, but because design makes the abstract concrete, and because I do know design very well. The dynamics playing out in design studios are a preview of what’s coming for every knowledge work profession.

Susskind was kind of right, just not on the timeline

Daniel Susskind’s A World Without Work argued that technological unemployment would arrive gradually through task encroachment: machines slowly assume components of jobs until entire roles become redundant. He was fundamentally correct about the mechanism but, writing in 2020, underestimated the velocity.

What we’re witnessing isn’t gradual task encroachment but wholesale capability replacement at the median performance level. The question Susskind raised, what happens when machines can do most of what most workers do, is no longer theoretical. His framework clarifies what’s happening: technology doesn’t eliminate jobs that are difficult, it eliminates jobs where the delta between human performance and machine performance is imperceptible to the people making purchasing decisions.

A mediocre designer produces work that stakeholders perceive as marginally better than AI output. That margin doesn’t justify the cost differential. An exceptional designer produces work where the quality gap is obvious even to non-experts. That margin commands premium pricing.

The uncomfortable implication is that most knowledge workers have been operating in the imperceptible delta.

The Plutonomy thesis and why no one is coming to save you

We’ve all heard some skeptic retort: ‘But if no one has a job, who will buy the products?’ This statement reveals a dramatic blindness within the working class.

In 2005, Citigroup analysts published internal memos describing developed economies as “plutonomies”: economies powered by and structured for the wealthy. The key recommendation was that investment strategies should focus exclusively on high earners and disregard the middle class entirely, as the middle class was becoming economically irrelevant to growth and consumption patterns. The memos leaked and briefly surfaced before disappearing from discourse. But the analysis was prescient, and its implications for the current moment are profound.

In a plutonomy, the economic actors who historically drove labor protections (corporations, banks, policymakers responding to consumer markets) lose their incentive to protect the middle class. If your growth model depends on the top 20% of earners capturing 80% of consumption, you simply don’t care what happens to median workers. They’re not your customer base. They’re not your future revenue. They’re economically irrelevant.

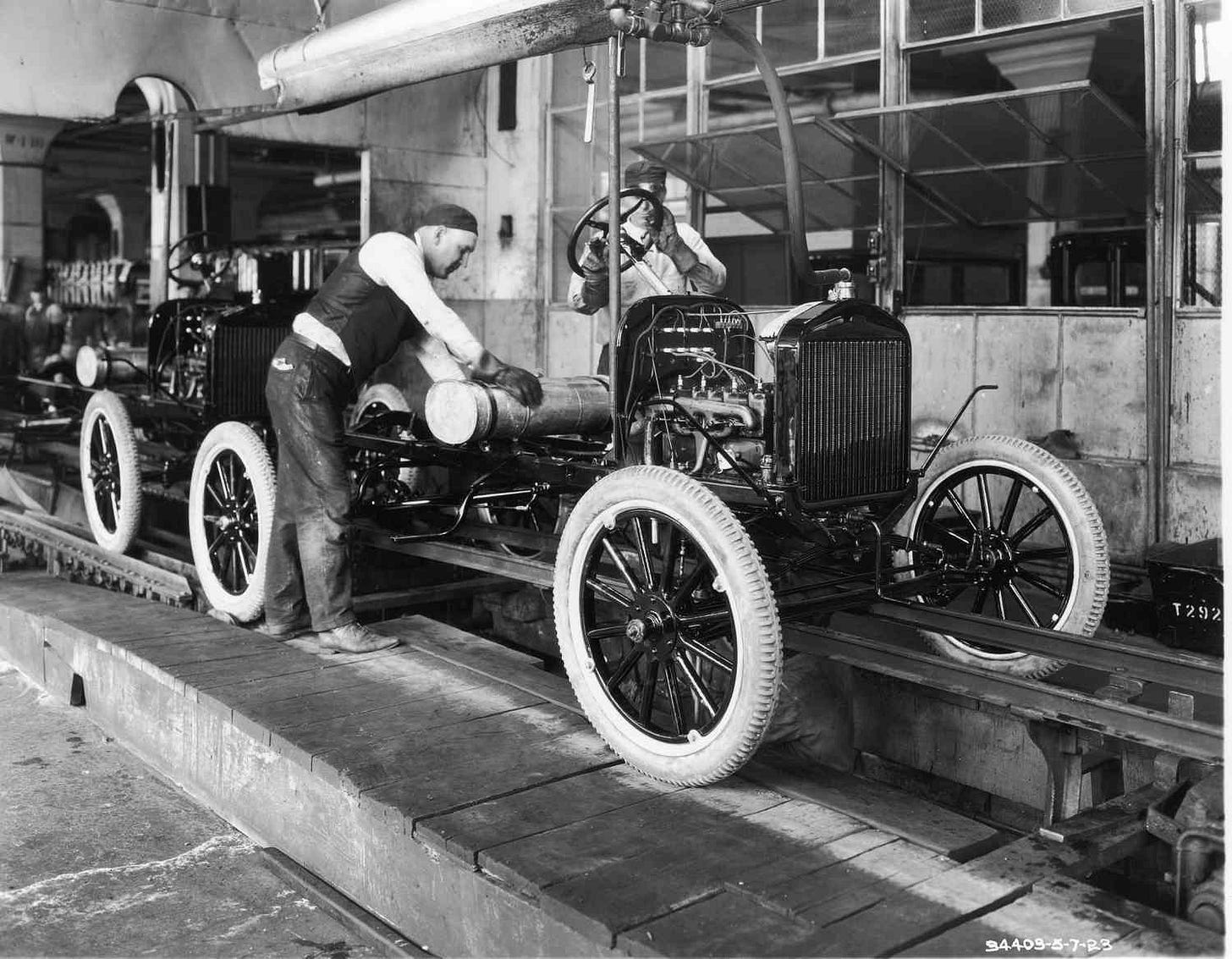

This is why the current labor market transformation is different from previous automation waves. During industrialization, during computerization, capital still needed mass consumers. Henry Ford famously paid workers enough to buy Ford cars. That economic logic drove minimum wages, labor protections, and social safety nets. Capital needed labor as consumers. That logic is breaking. When economic growth concentrates at the top, when luxury goods and premium services drive margins, when B2B and enterprise sales dominate, well, you don’t need a broad middle-class consumer base. Which means you don’t need to preserve middle-class employment.

We’re watching labor market bifurcation meet consumption bifurcation. AI eliminates the economic value of adequate knowledge workers at precisely the moment when economic actors lose incentive to preserve their employment. There’s no institutional pressure to “save” median workers because the growth model doesn’t require their purchasing power.

The comfortable assumption that market forces or policy will ultimately preserve middle-class work rests on obsolete economics. No one is coming to save the average worker, because we’re not planning on extracting money from them anymore.

The fact that stakeholders are non-experts will accelerate the collapse

The cruel mechanism that makes this transformation rapid rather than gradual is what could be called an organizational-level Dunning-Kruger effect.

Braun documented this with WordArt users who created what he called “closed loops” where amateur designers validated each other’s mediocrity within “cultural microcosms.” They genuinely couldn’t see the gap between their work and professional work.

Now multiply that dynamic by every department in every company. When a non-expert stakeholder uses AI to generate a presentation, write marketing copy, or design an interface, the output appears professional. The stakeholder experiences emotional investment: they did it themselves (the IKEA effect)! They cannot perceive the gap between 60% and 100% quality because they lack domain expertise.

More perniciously, they become invested in believing AI output is sufficient. Hiring an expert becomes psychologically threatening as it might reveal their own output is mediocre.

A CEO generates a strategy deck with AI, shows it to executives also using AI, everyone agrees it looks professional. The organization systematically loses the ability to recognize genuine expertise. ChatGPT writes serviceable marketing copy. Copilot writes functional code. AI generates reasonable data insights. In each domain, stakeholders without expertise cannot reliably distinguish adequate from exceptional output. So they default to the free option.

Being excellent is insufficient. You must be visibly, obviously excellent to people who cannot evaluate excellence. This is a considerably higher bar.

The tyranny of the majority

There’s a second-order effect: aesthetic and qualitative convergence.

AI systems train on averaged human output and generate averaged results. As AI output proliferates and becomes the reference standard, the next generation trains on increasingly AI-influenced data.

We get a systemic convergence toward an indistinguishable adequacy that would strike Alexis de Tocqueville as the final, sad triumph of mass conformism. In design, this manifests as visual homogeneity.

Every startup website follows identical patterns. Every AI-generated logo clusters around the same formal solutions. Every interface converges toward identical patterns.

Braun observed this with WordArt: “An enormous import/export operation of templates without justified relationship to their use.” Back then it was church bulletins. Now it’s every brand, every product, every interface. This creates a paradox: in a landscape of uniform adequacy, genuine distinctiveness becomes either invisible (stakeholders have lost calibration) or the only thing that matters (in contexts where differentiation is critical). Compare an AI-generated startup website to Linear’s or Arc’s site. To a design professional, the difference is immediately apparent (typography, purposeful white space, coherent interaction language). To most stakeholders? “They both look clean and modern.”

When everything meets minimum viable aesthetic standard, quality becomes imperceptible. This is fatal for the “pretty good” professional whose output was predicated on being noticeably better than nothing.

Commodity or moat

What emerges is radical market bifurcation. At the commodity tier we have AI-generated output for everything that doesn’t require differentiation: homogeneous, acceptable, instant. This is where most organizations will operate by default for most functions. At the premium tier, we have work that creates genuine moats. Apple’s liquid glass design language: computationally expensive, instantly recognizable, not trivially replicable. M3’s expressive typography system: highly opinionated, polarizing, unmistakably distinctive. Arc browser’s interaction model: divisive but defended because the craft is apparent.

What they share is differentiation obvious enough that even non-experts perceive value. They are often polarizing and definitely not optimized for universal appeal. They require genuine expertise and taste that AI cannot yet replicate. When Braun studied how professional designers responded to WordArt democratization, he found they created work “that couldn’t be replicated through standard tools.” One example: designers created a gallery identity using hand-carved stamps and aggressive digital manipulation. When tools democratize, craft becomes the differentiator. We’re watching this pattern repeat at scale.

The economic logic is straightforward. For organizations: in commodity domains, embrace AI and eliminate roles. In differentiation domains, pay premium compensation for exceptional talent. The middle option, paying median salaries for adequate work, no longer makes economic sense. There are but two ways ahead for professionals: become exceptional or exit.

Susskind called this “taskification”: the decomposition of jobs into tasks. But what we’re seeing is simpler: it’s pure elimination of roles where median performance is indistinguishable from AI output.

Go big or go home

Here’s where the bifurcation creates unexpected opportunities: for premium brands, design becomes more valuable, not less.

Apple’s liquid glass isn’t just an aesthetic preference but a strategic decision that dictates hardware capabilities and software architecture. The design language requires specific GPU performance, display technology, and rendering pipelines. Design decisions are driving technical roadmaps, not following them.

This represents a fundamental inversion. For decades, UI design accommodated technical constraints. Now, at the premium tier, technical infrastructure accommodates design ambition. When differentiation is your only moat, design becomes a strategic imperative rather than a cosmetic afterthought.

We will likely see the emergence of the CDO (Chief Design Officer) as a C-suite fixture at companies competing on differentiation.

Not as “head of the design team” but as strategic leadership shaping product direction, technical investment, and market positioning. Design elevated from execution function to strategic discipline.

This pattern extends beyond design. When AI handles commodity execution, what becomes valuable? Not technical facility, but the capacity for intentional differentiation. Consider what makes Apple’s liquid glass work. It’s not the technical execution: a look at Dribbble shows that any competent designer can render translucent interfaces. It’s the deliberate cultural references: modernist architecture’s love of transparency, iOS’s original depth language, physical industrial design’s material vocabulary. They’re strategic deployments of cultural meaning. AI can generate surface aesthetics, sure, but it can’t yet wield semiotics with intent.

Or take the question of when to break conventions. AI excels at applying established procedures. What it can’t do is exercise judgment about which procedure applies, when the pattern doesn’t fit, how to synthesize contradictory constraints into something coherent. That requires not just knowing how to execute, but understanding why you’re executing, the context, the audience, the cultural moment, the specific tensions you’re resolving.

This is what creates defensible value: not isolated competence, but contextual wisdom. The uncomfortable implication is that we’ve spent decades building education systems and professional frameworks optimized for exactly what AI now commoditizes.

You should tell your kids to major in Classics

If AI commoditizes execution and procedural knowledge, what remains valuable? The ability to make intentional decisions. To understand context. To deploy cultural references with precision. To exercise taste. To synthesize constraints into coherent vision. To know when to follow conventions and when to break them. These aren’t technical skills, but humanistic disciplines.

We’re facing a world where critical thinking matters more than rote knowledge. Where understanding philosophy, history, semiotics, cultural theory becomes more professionally valuable than memorizing syntax or procedures. Where the question “why this choice instead of that choice?” supersedes “how do I execute this choice?”

This has profound implications for education. We’ve spent decades optimizing education systems to produce workers who can execute procedures reliably. We’ve defunded humanities, elevated STEM, focused on measurable technical skills. We’ve built an education system designed to produce exactly the kind of worker AI is now replacing.

Perhaps it’s time to recognize that the humanities, critical thinking, cultural analysis, historical context, philosophical reasoning, aren’t luxury disciplines for the wealthy. They’re the core competencies for the only jobs that will remain economically viable. I’m not arguing everyone needs a philosophy degree. I’m arguing that the skillset that creates defensible value in an AI-saturated market looks much more like humanities training than technical certification.

This doesn’t mean more positions. It means fewer positions, each requiring deeper, broader, more sophisticated thinking. Not everyone can develop these capabilities, and that’s the uncomfortable part. But pretending technical reskilling solves the problem is worse than useless: it’s actively misleading. The positions that survive require what I’ll call intentional design: the capacity to make deliberate choices rooted in cultural understanding, contextual analysis, and sophisticated judgment. This applies whether you’re designing interfaces, crafting strategy, writing copy, or structuring code. The discipline changes; the requirement doesn’t.

AI eliminates the value of execution. What remains is intention, context, and judgment. These are humanistic capabilities, not technical ones.

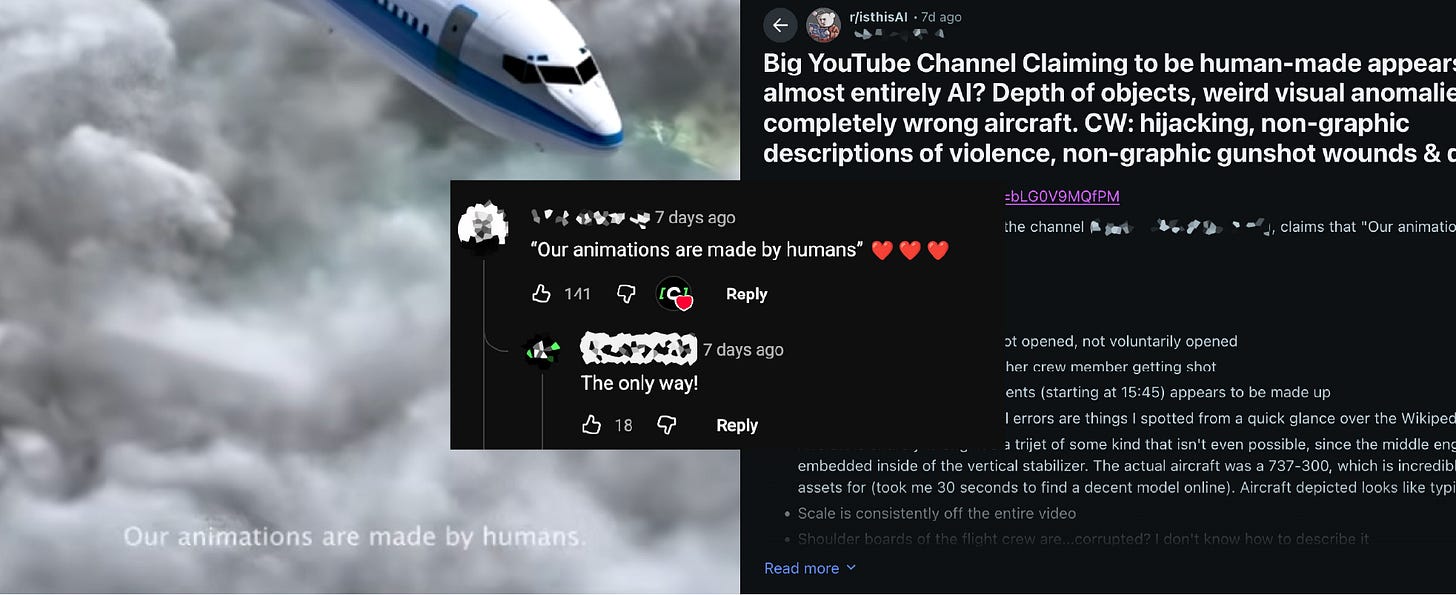

“Human-Made” as premium signal

One emerging category worth examining is the explicit positioning of “human-made” as value. As AI saturation increases, “designed by humans” becomes a market signal. Not nostalgic rejection of technology but strategic positioning in a commoditized landscape. See how some YouTube channels now explicitly advertise “AI-free creative work,” and how viewers lose it in the comments when they spot AI.

The positioning is rational: not as distinctive as Apple-level investment, but clearly differentiated from AI commodity, to appeal to buyers who can perceive quality differences and value the human element.

This creates a new middle tier. Smaller than the collapsing “adequate professional” category, but viable for practitioners who can credibly position on craft rather than competing on price with AI.

Nobody asked to be exceptional

The true tragedy isn’t that people are lazy or incompetent. It’s that the system accommodated normalcy. Most people sought to be adequate at their jobs, not world-class. The social contract worked: develop competence, provide steady value, receive stable compensation.

That contract is shattered. Being “adequate” had economic value when the alternative was an empty chair. When the alternative is free AI at 60% quality, adequacy has no market value. The people losing positions aren’t failures. They’re normal. That category is evaporating.

Here’s what makes this moment historically distinct: the architects of this technology aren’t offering reassuring rhetoric about “job evolution” or “augmentation.” Instead, they treat mass displacement as an inevitable transition rather than a manageable evolution. They’re building capability while offering vague assurances that “new jobs will emerge,” without specifying what those jobs are. “Entire classes of jobs will go away,” said Sam Altman (OpenAI CEO), though he emphasized that “entirely new classes of jobs” will come.

"Usually what happens is new, even better jobs arrive to take the place of some of the jobs that get replaced. We'll see if that happens this time." - Demis Hassabis (Google DeepMind CEO) for CNN

The conversation we’re not having

The discourse remains trapped in an obsolete binary: Is AI good (augmentation! productivity!) or bad (theft! displacement!)? Both framings miss the point.

The actual crisis lies in the fact that AI is completing the detachment of economic value from human purpose. For millennia, work defined identity. Our surnames, Fisher, Smith, Baker, rooted individual worth in occupation. For the first time in human history, the economic engine is functionally indifferent to the employment of the statistical majority.

We’re debating whether AI will “steal jobs” when the real question is: how do we organize a society where most citizens are economically unnecessary?

The plutonomy analysis told us the middle class was becoming irrelevant as consumers. AI tells us they’re becoming irrelevant as producers. These aren’t separate crises but the same systemic realignment.

In democracies, political power historically derived from economic necessity. Capital needed labor as producers and consumers, which gave labor political leverage. If the majority is economically unnecessary, do they retain political weight? Or does economic irrelevance eventually translate to political irrelevance? Societies are built around values shared by their citizens. When economic growth becomes disconnected from most citizens’ participation, what happens to their political agency? Do we accept a world where the economically irrelevant become politically irrelevant? Or do we fundamentally restructure how we organize power, worth, and participation?

There’s an optimistic reading here: perhaps this is the moment we finally decouple human worth from economic productivity. Perhaps this forces us to develop identity beyond work: time for art, community, intellectual pursuit. The Renaissance ideal of the whole human, not the industrial ideal of the productive human. But that optimistic reading requires deliberate political and social restructuring. It requires frameworks for dignity without employment, meaning without productivity, political participation without economic leverage. We’re not building those frameworks. We’re not even discussing them.

Instead, we’re watching plutonomy meet technological displacement with no counterpressure. Economic actors don’t need the majority as consumers or producers. Which means they have no incentive to preserve their economic participation. And potentially, no incentive to preserve their political participation either.

We built an entire civilization that tells people their worth derives from their work. Then we built an economic system that doesn’t need their work. And we’re offering no alternative framework for worth, meaning, or political participation.

So?

What I read in that French paper over a decade ago is now happening across every domain simultaneously. The timeline compressed from a decade to months and the scale expanded from one profession to civilization.

We’re not having the conversation that matters. We’re debating whether AI will “augment” or “replace” when the real questions are: what happens when work no longer defines worth?

What happens when the system no longer needs the majority? Do the economically irrelevant retain political power? Can we build frameworks for meaning beyond productivity?

We’ve spent millennia building identity around occupation, and in half a decade AI will have severed that connection. This is either catastrophic (a world where the majority are economically and politically irrelevant) or it’s liberating (finally freeing humans from the equation that worth equals work).

Which future we get depends on choices we’re not yet making and conversations we’re not yet having.
