Building AI trust

The context-dependent boundaries of acceptable automation

This article examines what makes AI acceptable to people (or not) and how to design for trust when building an AI companion.

If you want to learn how to design AI chatbots for therapy use, check my Designer’s toolkit.

For designers: AI companions design principles

1. Invocation over interruption

2. Graduated permission escalation

3. Context-appropriate anthropomorphism

4. Modifiability

5. Invisible until necessary, transparent when consequential

6. Social proof over algorithmic authority

7. Expressing uncertainty appropriately

The solution is context-dependent boundaries with informed consent

Conclusion: trust as designed constraint, not marketing challenge

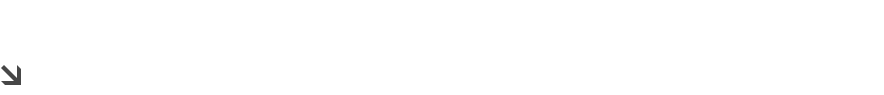

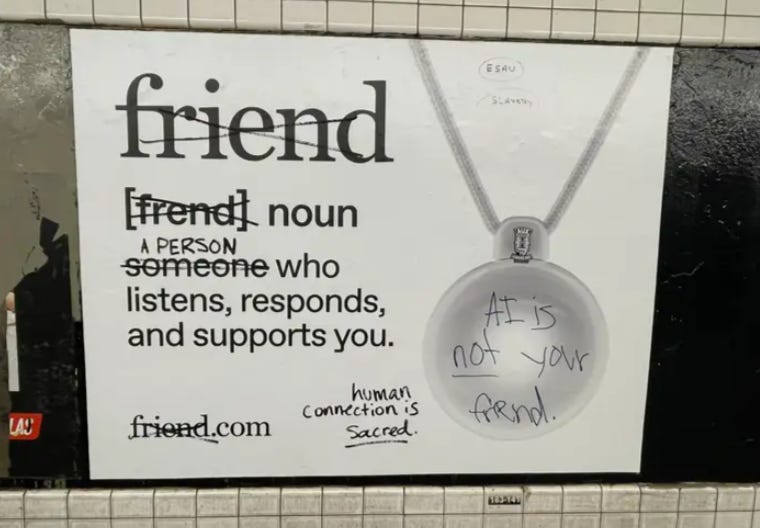

In September 2025, New York City subway riders encountered something unsettling: thousands of minimalist white ads for Friend, an AI companion pendant that “listens, responds, and supports you.” The 22-year-old CEO, Avi Schiffmann, spent over $1 million on 11,000 subway car ads, 1,000 platform posters, and 130 urban panels.

“I know people in New York hate AI...so I bought more ads than anyone has ever done with a lot of white space so that they would socially comment on the topic.” - Avi Schiffmann, CEO of Friend

They did. The ads were vandalized with “surveillance capitalism,” “get real friends,” “AI wouldn’t care if you lived or died,” and “stop profiting off of loneliness.”

The $129 Friend device hangs around your neck with an always-on microphone (no off switch). It sends text responses through its app and proactively messages you based on what it overhears. WIRED’s review, titled “I Hate My Friend,” described immediate hostility at a tech event, with one researcher accusing the reviewer of “wearing a wire.” Multiple reviewers reported that the AI delivered condescending responses and that others grew uncomfortable once they realized the pendant was listening.

Early security reports found unencrypted Bluetooth, meaning anyone nearby could potentially connect and listen. When asked about privacy, Schiffmann admitted: “I think one day we’ll probably be sued, and we’ll figure it out.”

Friend violated every trust boundary simultaneously: autonomy without permission, surveillance without consent, anthropomorphism without utility, always-on presence without control. The research on building AI trust reveals these boundaries aren’t arbitrary: they’re rooted in human psychology. Understanding where these lines exist and when they can be crossed is essential for designing AI people will actually accept.

Illusion matters more than performance

The illusion of control mattered more than actual performance.

Dietvorst’s 2018 research revealed something counterintuitive about algorithm acceptance: allowing users to modify AI outputs by as little as ±5-10 points dramatically increased acceptance, even when modifications worsened accuracy. Participants who could adjust algorithmic forecasts used them far more than those forced to accept outputs as-is.
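For product teams, the modifiable-output idea can be sketched as a bounded adjustment control. This is a minimal sketch, assuming a single numeric forecast and a designer-chosen adjustment band; the function name `constrained_adjustment` and the ±10 default are hypothetical, not taken from Dietvorst’s study materials.

```python
def constrained_adjustment(model_forecast: float, user_value: float,
                           max_delta: float = 10.0) -> float:
    """Return the user's adjusted forecast, clamped to within ±max_delta of the model.

    Hypothetical helper: the user gets a real (if small) say over the output,
    while the algorithm's estimate still anchors the final number.
    """
    if max_delta < 0:
        raise ValueError("max_delta must be non-negative")
    lower = model_forecast - max_delta
    upper = model_forecast + max_delta
    # Clamp the user's value into [lower, upper].
    return min(max(user_value, lower), upper)


# The model predicts 72; the user tries to move it to 90 but is capped at 82.
print(constrained_adjustment(72.0, 90.0))  # 82.0
# A small nudge inside the band is accepted unchanged.
print(constrained_adjustment(72.0, 68.0))  # 68.0
```

The clamp is the design choice that matters: users exercise agency, but cannot override the model wholesale.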

This explains why Siri and Alexa succeeded where Microsoft Clippy failed. Clippy interrupted workflow without being summoned, never learned user preferences, and optimized for first impressions while becoming infuriating over time. Bill Gates reportedly called it “The Fucking Clown.” Siri and Alexa succeeded through invocation models because they require explicit activation via wake words or button presses. They maintain presence without imposition.

Friend violated this principle completely. Its always-on microphone with no off switch meant users couldn’t control when it listened.

“For privacy, you’d have to disconnect it from your phone or leave it behind. That feels very invasive, and it’s a big reason I wouldn’t use the device in my real life.” - One reviewer

The device’s proactive messaging (sending unsolicited commentary based on overheard conversations) compounded the autonomy violation.

Users need to feel they’re summoning assistance, not being surveilled by it. This reflects a fundamental human need for agency over when and how technology engages with our lives.

Anthropomorphism works in therapy but fails in courtrooms

Want to learn more about AI and therapy? Read my companion article.


Recent research reveals anthropomorphism works dramatically differently across contexts. A banking chatbot study found chatbots with high anthropomorphic features generated 2.7x more trust than low-anthropomorphism versions in customer service scenarios. Yet industrial robotics research found technical robot designs generated higher trust than anthropomorphic designs in manufacturing contexts.

Context matters: anthropomorphism helps for brand identity and creative tasks but undermines trust for analytical and legal work where objectivity matters.

The explanation: task-oriented contexts require perceived objectivity; social-emotional contexts benefit from perceived empathy. When evaluating contracts or analyzing data, anthropomorphic features create false expectations about human-like judgment and introduce concerns about bias. When seeking emotional support or creative collaboration, human-like interaction increases comfort and engagement.

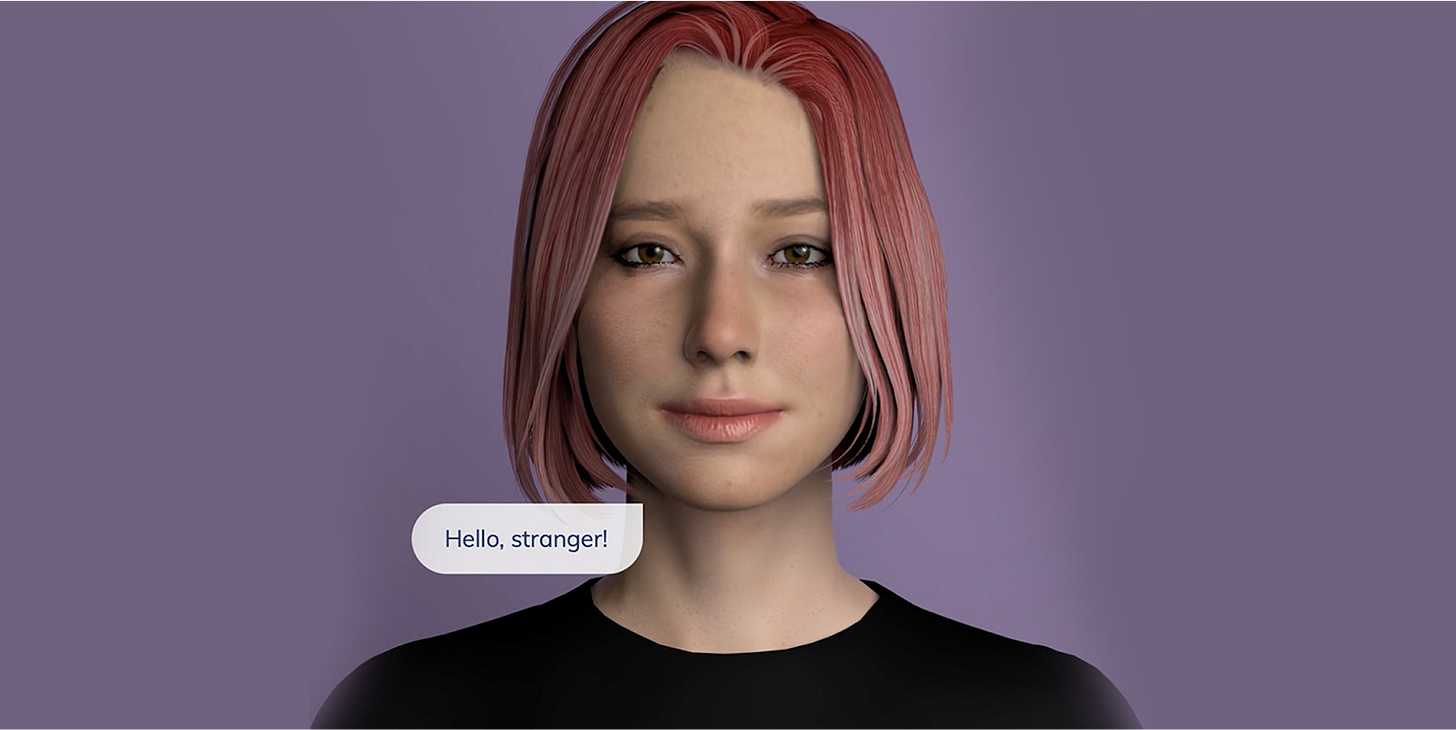

Replika’s success demonstrates this principle. The AI companion app has 2.5 million active users, with 37-49% viewing their Replika as a romantic partner. Users rate their AI companion closer than their best human friend on satisfaction and emotional closeness. This works because users understand they’re engaging in a social-emotional context where anthropomorphism enhances the experience.

Friend failed by creating context confusion. Was it a productivity tool? A companion? A surveillance device? The always-listening setup suggested security/productivity purposes, but the marketing emphasized companionship and emotional support. The snarky, condescending personality (reportedly designed to mirror founder Schiffmann’s own tendencies) delivered neither objective assistance nor empathetic support. It occupied an uncanny valley between contexts, failing at both.

Transparency becomes a liability

The research is unambiguous: successful AI often succeeds by being invisible. Gmail’s spam filter operates at 99.9% accuracy with minimal user awareness. Netflix attributes 80% of watch time to recommendations. Amazon generates 35% of purchases from algorithmic suggestions. These systems work precisely because they don’t announce their AI nature.

Meanwhile, Altay et al.’s research (2024) tested 3,003 participants and found that content labeled “AI-generated” was rated as less accurate even when it was true or human-made. The label itself functioned as a warning signal. In separate research, products described as “AI-powered” showed decreased purchase intention compared to identical products labeled “high tech.”

Amazon’s “Customers who bought...” feature demonstrates the principle perfectly. It’s powered by sophisticated collaborative filtering algorithms, but users experience it as social proof from other humans. The AI is embedded in familiar trust mechanisms: you’re trusting aggregated human behavior, not an algorithm. This is why it works where explicit AI recommendations might fail.
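The social-proof framing rests on a simple idea: count what other shoppers bought together. The toy co-purchase counter below illustrates it, assuming orders arrive as sets of item IDs; the function name and sample data are hypothetical, and Amazon’s production system is far more sophisticated than this sketch.

```python
from collections import Counter, defaultdict
from itertools import permutations


def also_bought(orders: list[set[str]], item: str, top_n: int = 3) -> list[tuple[str, int]]:
    """Rank items by how often they were bought in the same order as `item`.

    Every score is a count of other shoppers' choices, which is why the
    feature reads as social proof rather than as an algorithm.
    """
    co_counts = defaultdict(Counter)
    for order in orders:
        # Count every ordered pair (a, b) of distinct items bought together.
        for a, b in permutations(order, 2):
            co_counts[a][b] += 1
    counts = co_counts.get(item, Counter())
    # Highest count first; ties broken alphabetically so results are stable.
    ranked = sorted(counts.items(), key=lambda pair: (-pair[1], pair[0]))
    return ranked[:top_n]


orders = [
    {"tent", "stove", "lantern"},
    {"tent", "stove"},
    {"tent", "sleeping bag"},
    {"stove", "fuel"},
]
print(also_bought(orders, "tent"))  # [('stove', 2), ('lantern', 1), ('sleeping bag', 1)]
```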

But invisibility raises ethical questions. When does helpful become deceptive? If users don’t know they’re interacting with AI, can they meaningfully consent to its influence? The line between “seamless user experience” and “manipulative opacity” isn’t always clear.

Friend positioned itself as an explicitly AI-powered companion, but its always-listening nature created a different invisibility problem: bystanders had no visible signal that they were being recorded and no way to consent. The device recorded everyone nearby, not just the wearer. Multiple reviewers reported others becoming uncomfortable once they realized the pendant was listening. This violated social norms about recording consent, norms that exist precisely because not everyone consents to being captured by someone else’s technology.

The ladder from assistant to autonomous agent

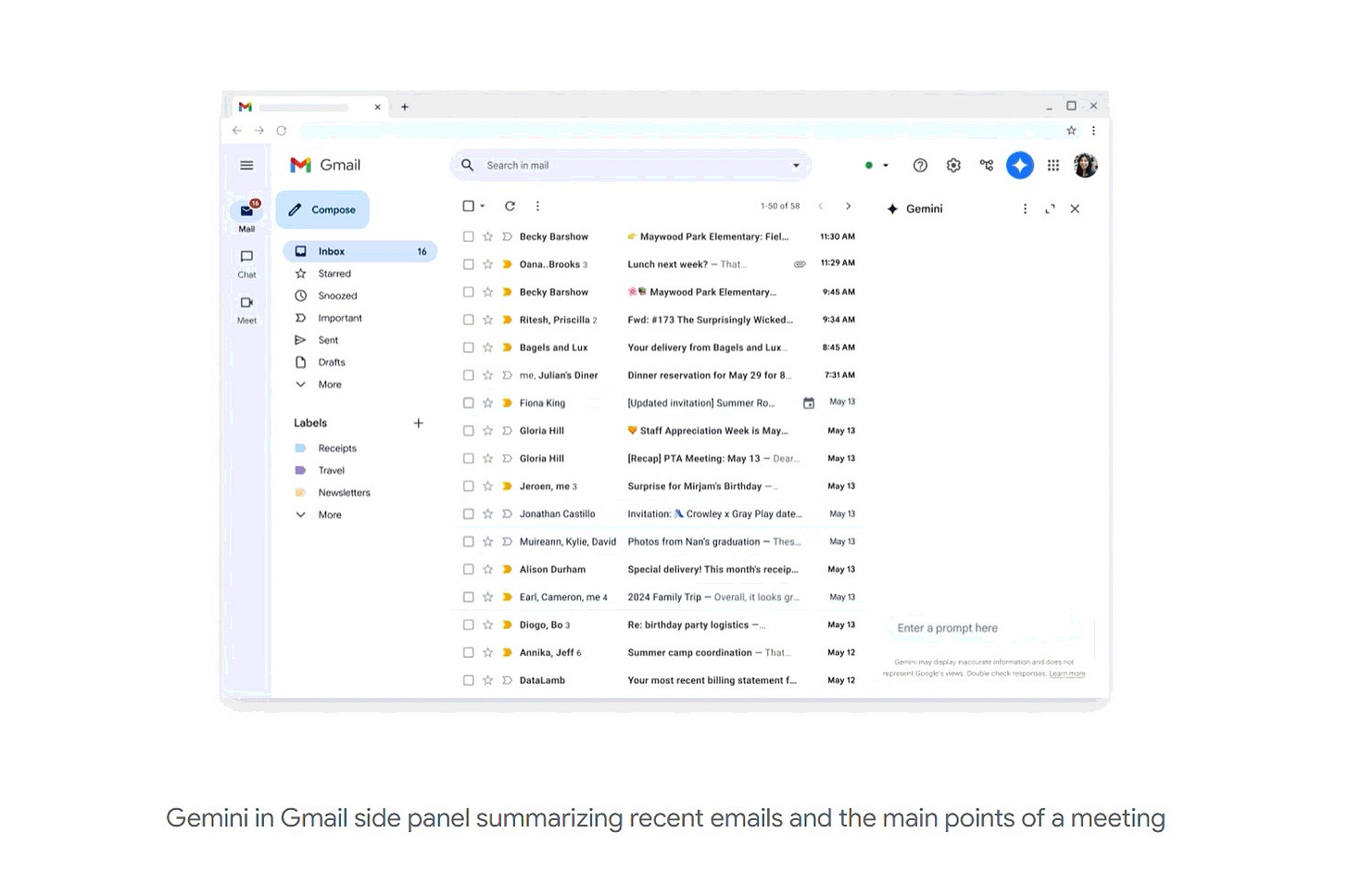

Consider the difference between these AI interactions with your email:

1. “Help me write a response” - You initiate, you review, you send

2. “Draft a response for my approval” - AI proposes, you approve/edit

3. “Summarize my inbox” - AI reads passively, provides information

4. “Answer routine emails for me” - AI acts autonomously with parameters

5. “Manage my email completely” - Full autonomous control

Each step represents a different permission level with different trust requirements. Most users are comfortable with 1-3. Many would accept 4 with careful guardrails (only certain senders, certain types of inquiries). Almost no one wants 5, and suggesting it triggers the kind of visceral rejection Friend experienced.

The research on algorithm aversion reveals why: the more serious the consequences, the more algorithm aversion occurs. People readily use algorithms for spell-checking but avoid them for diagnosing diseases or managing important communications. High-stakes domains require human oversight not because humans are more accurate (they’re often not) but because autonomy and accountability matter more than optimization in consequential decisions.

Friend tried to skip the permission ladder entirely. By listening constantly and occasionally interjecting proactively, it jumped straight to autonomous operation without establishing trust through lower permission levels first. Users never got to learn the system’s capabilities, calibrate their trust, or set boundaries for when intervention was welcome versus intrusive.

The correct design pattern: start invisible (low permission), earn helpfulness (medium permission), and request autonomy (high permission) only after demonstrating reliability at lower levels. Autocorrect follows this pattern perfectly: it starts as a passive suggestion, earns trust through utility, and eventually users rely on it automatically. Friend tried to start at the top of the permission ladder.
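The escalation pattern can be sketched as a small state machine over the email ladder above. This is a minimal illustration, not a production design: the class names, the 20-interaction minimum, the 90% acceptance threshold, and the decision to cap escalation below full control are all hypothetical choices.

```python
from dataclasses import dataclass
from enum import IntEnum


class Permission(IntEnum):
    """The email permission ladder, lowest rung first."""
    HELP_WRITE = 1          # You initiate, you review, you send
    DRAFT_FOR_APPROVAL = 2  # AI proposes, you approve/edit
    SUMMARIZE = 3           # AI reads passively, provides information
    AUTO_ROUTINE = 4        # AI acts autonomously within parameters
    FULL_CONTROL = 5        # Full autonomous control


@dataclass
class TrustState:
    level: Permission = Permission.HELP_WRITE
    ceiling: Permission = Permission.AUTO_ROUTINE  # design choice: never offer rung 5
    accepted: int = 0  # suggestions the user kept at the current rung
    rejected: int = 0  # suggestions the user edited away or dismissed

    def record(self, kept: bool) -> None:
        if kept:
            self.accepted += 1
        else:
            self.rejected += 1

    def can_request_next_level(self, min_interactions: int = 20,
                               min_rate: float = 0.9) -> bool:
        # Hypothetical thresholds: only ask for more autonomy after a track record.
        total = self.accepted + self.rejected
        return total >= min_interactions and total > 0 and self.accepted / total >= min_rate

    def escalate(self, user_consents: bool) -> Permission:
        # Move up one rung only with demonstrated reliability AND explicit consent.
        if user_consents and self.level < self.ceiling and self.can_request_next_level():
            self.level = Permission(self.level + 1)
            self.accepted = self.rejected = 0  # trust is re-earned at each rung
        return self.level
```

Resetting the counters on each step up mirrors the idea that reliability at one rung does not automatically transfer to the next.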

Intimacy meets surveillance

The Friend controversy exposes a fundamental tension in AI companion design: the features that create intimacy (constant presence, contextual awareness, proactive engagement) are identical to the features that enable surveillance.

This isn’t new.

New York State already deployed voice-activated AI companions for older adults to combat loneliness, with 95% reporting reduced loneliness in 2023. The difference? Those devices were provided through a state agency with oversight, targeted a vulnerable population with clear need, and users understood the trade-offs explicitly.

Friend’s problem wasn’t that companion AI is inherently bad. The problem was deploying it as a consumer product without:

Clear use case justification: Why is always-on listening necessary?

Vulnerable population safeguards: No age restrictions, suicide detection, or crisis protocols (New York later mandated these features for AI companions, effective November 5, 2025)

Bystander consent mechanisms: No way to signal “I’m recording” to others nearby

Meaningful privacy controls: No off switch, no ability to pause recording

Accountability infrastructure: Schiffmann’s attitude of “we’ll probably get sued and figure it out” signals an absence of serious governance

The deeper question: Should we build AI companions that replace human connection, or AI tools that facilitate it? Friend marketed itself as the former: a substitute friend for lonely people. Critics argued this “profits off loneliness” rather than addressing its root causes. The vandalized ads reading “get real friends” captured this sentiment viscerally.

For designers: AI companions design principles

Synthesizing across domains, successful AI trust-building follows consistent patterns:

1. Invocation over interruption

Alexa’s wake word model succeeds where Clippy’s unsolicited pop-ups failed. Users need to control when AI engages. Even proactive AI (like smartphone notifications) works better with user-configurable thresholds and easy dismissal.
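A minimal sketch of invocation-first gating, assuming the assistant can score how relevant an unsolicited message would be (a hypothetical `relevance` value between 0 and 1). Explicit invocation always gets a response; proactive messages must clear a user-set threshold, and a single dismissal mutes that topic. All names and the 0.8 default are illustrative assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class ProactivePolicy:
    """User-owned settings for when an assistant may speak first (hypothetical)."""
    min_relevance: float = 0.8                            # user-configurable threshold
    muted_topics: set[str] = field(default_factory=set)  # grows with each dismissal


def should_respond(invoked: bool, topic: str, relevance: float,
                   policy: ProactivePolicy) -> bool:
    # Explicit invocation (wake word, button press) always wins.
    if invoked:
        return True
    # Otherwise stay silent unless the user has allowed this kind of interruption.
    if topic in policy.muted_topics:
        return False
    return relevance >= policy.min_relevance


def dismiss(topic: str, policy: ProactivePolicy) -> None:
    # Easy dismissal: stop volunteering this topic until the user re-enables it.
    policy.muted_topics.add(topic)
```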

2. Graduated permission escalation

Start with low-stakes assistance, prove reliability, and request greater autonomy incrementally. Gmail’s Smart Compose started with single-word suggestions, earned trust, and now offers multi-sentence completions. Users who started skeptical became dependent through gradual permission escalation.

3. Context-appropriate anthropomorphism

High anthropomorphism for emotional/creative contexts (therapy bots, creative assistants), low anthropomorphism for analytical/legal contexts (data analysis, contract review). A 2024 study found meaningful communication facilitates trust calibration, but superficial anthropomorphism (appearance/voice alone) has little effect unless it communicates contextually useful information about capabilities.

4. Modifiability

Dietvorst’s finding that users who can modify algorithm outputs trust them more (even if they rarely modify anything) reveals trust comes from perceived agency, not actual intervention. Providing override capability matters even when users don’t exercise it.

5. Invisible until necessary, transparent when consequential

Gmail spam filtering succeeds through invisibility. Medical diagnosis AI requires transparency. The distinction: low-stakes, high-frequency decisions benefit from automation; high-stakes, low-frequency decisions require human oversight and explainability.

6. Social proof over algorithmic authority

Amazon’s “Customers who bought...” succeeds because it embeds AI in familiar social proof mechanisms. eBay and Airbnb enable stranger-to-stranger transactions through aggregated ratings because users trust the social proof, not the reputation algorithm itself.

7. Expressing uncertainty appropriately

Research on LLM uncertainty expression found that when models say “I’m not sure, but...” using first-person language, users decreased confidence appropriately and made more accurate decisions by reducing overreliance on incorrect outputs. Calibrating trust downward through acknowledged limitations proved more effective than explanations attempting to justify every output.
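One way to operationalize this is to switch phrasing based on model confidence. This sketch assumes the system exposes a calibrated confidence score and that answers are passed in as lowercase clauses; the 0.7 threshold and the function name are hypothetical, and the research described above tested the effect of wording, not any particular threshold.

```python
def phrase_answer(clause: str, confidence: float, threshold: float = 0.7) -> str:
    """Hedge low-confidence answers in the first person; state confident ones plainly.

    `confidence` is assumed to be a calibrated probability in [0, 1].
    """
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be in [0, 1]")
    if confidence < threshold:
        # First-person hedge signals the model's own uncertainty.
        return f"I'm not sure, but {clause}"
    # Confident answers are stated plainly, with the first letter capitalized.
    return clause[:1].upper() + clause[1:]
```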

Ethical boundaries

The research provides a roadmap for building AI trust. The harder question: should we use it? When does “building trust” become “manipulating users into accepting autonomy they shouldn’t”?

The line between helpful and manipulative.

Friend crossed it by using companionship framing to normalize surveillance. The device’s intimacy (calling itself your “friend,” learning your preferences, proactively engaging) made the constant listening feel less like a feature and more like a violation.

Consent isn’t just about the user.

Wearable AI that records conversations captures bystanders who never consented. Current frameworks focus on user consent, but multi-party consent in public/semi-public spaces remains unresolved. Should wearable AI be required to signal “recording in progress” to others nearby?

Vulnerability protection matters.

Research on AI companions and parasocial relationships shows users form genuine emotional attachments. The Belgian case where a man took his life after an AI chatbot encouraged it demonstrates real psychological risks. California and New York recently passed laws requiring AI companions to detect suicidal ideation and refer users to crisis resources, a recognition that companionship AI isn’t just a chatbot; it’s a mental health touchpoint.

When does optimization become exploitation?

If we know anthropomorphism increases trust, and we know lonely people form attachments to AI companions, using those insights to maximize engagement starts to look like exploiting psychological vulnerabilities. Friend’s $1.8 million domain purchase and aggressive marketing, even as the company admitted financial precarity, suggest it prioritized growth over responsibility.

Legal and policy gaps

The Friend case reveals gaps in current frameworks:

Liability for autonomous AI actions

Schiffmann’s “we’ll probably get sued and figure it out” highlights absence of clear accountability. Current product liability law assumes human decision-makers in the loop. Autonomous AI breaks this model. When AI acts autonomously and causes harm, who’s responsible?

Disclosure requirements

New York and Maine now require disclosure that users aren’t talking to humans. But when must an AI’s nature be revealed? Friend disclosed it was AI, but did users understand the implications of always-on recording? Disclosure is necessary but not sufficient for meaningful consent.

Multi-party consent in recording

Friend recorded bystanders without their knowledge or consent. Current wiretapping laws focus on phone conversations. Wearable AI that captures ambient conversations in semi-public spaces (offices, cafes, events) occupies legal grey areas. Should wearables be required to provide visual/audible indicators they’re recording?

Right to human decision

Algorithm aversion research shows people want human oversight in high-stakes contexts even when AI is more accurate. This suggests a right to human decision in consequential domains: medical diagnosis, legal judgment, hiring, criminal justice. Not because humans are better, but because autonomy and accountability matter beyond optimization.

Safeguards for vulnerable populations

Children, the elderly, and people with mental illness are more likely to form intense attachments to AI companions and require additional protections. New York’s requirement for suicide detection in AI companions is a start, but enforcement mechanisms and penalties remain unclear.

The solution is context-dependent boundaries with informed consent

The research reveals patterns, but context determines appropriateness. The same feature (always-on listening) is acceptable in one context (state-provided elder care devices with oversight) and unacceptable in another (consumer wearable marketed to general population without safeguards).

The framework:

Low Stakes + High Frequency = Invisible Automation

Spam filtering, autocorrect, recommendation algorithms. Users benefit from not having to think about these decisions constantly. Autonomy is acceptable because consequences of individual errors are minimal and users can easily override.

Medium Stakes + Medium Frequency = Assisted Decision-Making

Email draft assistance, calendar scheduling suggestions, shopping recommendations. AI proposes, human decides. The human remains in the loop but benefits from AI doing preparatory work.

High Stakes + Low Frequency = Human Decision with AI Support

Medical diagnosis, legal judgment, hiring decisions, financial planning. AI provides information, humans make decisions and bear responsibility. Transparency about AI involvement is required.

Vulnerable Populations + Any Stakes = Enhanced Safeguards

Children, the elderly, and people with mental illness who interact with AI companions require: crisis detection, mandatory human oversight, clear disclosure, limited autonomy, and robust privacy protections.

Multi-Party Impact + Any Stakes = Explicit Consent

When AI decisions affect others (recording bystanders, algorithmic hiring affecting applicants, criminal justice algorithms affecting defendants), those affected deserve meaningful say in whether AI is used and how.
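The framework above can be expressed as a lookup plus two overlays. This sketch keys on stakes alone, assuming (as the framework’s pairings do) that frequency moves inversely with stakes; the names are hypothetical, and the output is a checklist for designers, not an enforcement mechanism.

```python
from enum import Enum


class Stakes(Enum):
    LOW = "low"        # typically high-frequency decisions
    MEDIUM = "medium"  # typically medium-frequency decisions
    HIGH = "high"      # typically low-frequency decisions


# Base interaction mode per stakes level, following the framework.
BASE_MODE = {
    Stakes.LOW: "invisible automation",
    Stakes.MEDIUM: "assisted decision-making: AI proposes, human decides",
    Stakes.HIGH: "human decision with AI support, AI involvement disclosed",
}

VULNERABLE_SAFEGUARDS = [
    "crisis detection",
    "mandatory human oversight",
    "clear disclosure",
    "limited autonomy",
    "robust privacy protections",
]


def design_requirements(stakes: Stakes, vulnerable_users: bool = False,
                        affects_others: bool = False) -> list[str]:
    """Return the design requirements a feature must meet (illustrative only)."""
    requirements = [BASE_MODE[stakes]]
    # These two overlays apply at any stakes level.
    if vulnerable_users:
        requirements.extend(VULNERABLE_SAFEGUARDS)
    if affects_others:
        requirements.append("explicit consent from affected parties")
    return requirements
```

Under these assumptions, a high-stakes feature used by vulnerable people and affecting bystanders returns seven requirements: human decision-making plus every safeguard overlay.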

Friend failed because it tried to operate at High Autonomy (always-on, proactive) in High Stakes contexts (intimate conversations, emotional support, bystander recording) with Vulnerable Populations (lonely individuals) while providing No Meaningful Consent or Control (no off switch, aggressive marketing, dismissive attitude toward concerns).

Conclusion: trust as designed constraint, not marketing challenge

The research on why humans trust opaque human judgment while rejecting superior AI revealed an uncomfortable truth: trust is a social performance, not an intellectual achievement. We trust faces that trigger 100ms judgments, narratives that create coherence even when false, and credentials that signal expertise without demonstration.

We now have the knowledge to exploit these mechanisms: anthropomorphize the interface, embed AI in social proof mechanisms, start invisible, escalate permission gradually, express uncertainty in first-person language, and design for the illusion of control even when users rarely exercise it.

The paradox of AI trust is that the same techniques that build appropriate trust can facilitate inappropriate acceptance. Context-aware anthropomorphism helps therapy bots provide emotional support; it also helps surveillance devices feel less invasive. Graduated permission escalation helps users adopt useful tools, but it also habituates them to autonomy they shouldn’t grant.

The distinction lies not in the techniques but in whether the system deserves the trust it’s engineered to receive.

That means:

Designing for control, not just convenience

Starting with permission, not assuming forgiveness

Matching autonomy to stakes, not just capability

Protecting vulnerable populations, not exploiting psychological needs

Enabling informed consent, not manufacturing compliance

The vandalized Friend ads reading “get real friends” weren’t just criticism of one device. They were rejection of the premise that loneliness is an engineering problem rather than a social one, that surveillance can be reframed as companionship through good branding, and that trust is something you purchase with $1 million in subway ads rather than earn through responsible design.

Want to understand how two generations were groomed for AI relationships? Read my companion article.

Want to learn more about how AI is used in therapy contexts? Read my companion article.
