Google’s ecosystem leverage: embedding AI in existing products

ECOSYSTEM THINKING - ARTICLE 4/4

This article is the last of a four-part analysis of the rise of ecosystem thinking in tech:

PART 1 / The rise of ecosystem thinking

PART 2 / Apple’s ecosystem across time: designing for tomorrow - Liquid Glass

PART 3 / Meta’s ecosystem independence: breaking free from smartphones - Wearables

PART 4 / Google’s ecosystem leverage: embedding AI in existing products - Gemini

When ChatGPT launched in November 2022, most analysis focused on whether Google could build a competitive model. They could. They did. Google built Gemini 3 on two decades of AI research from Brain and DeepMind. The model benchmarks at the top. Multimodal understanding, visual reasoning, code generation. Building the best model was never the hard part. Getting people to use it is.

The challenge was never technical capability but deployment speed and user adoption before “ChatGPT it” replaced “Google it” in everyday language.

Gemini is Google’s answer. Not a standalone product competing for mindshare, but intelligence embedded into every surface users already touch; infrastructure defense disguised as product innovation, leveraging two decades of digital ecosystem already in place.

What Google’s ecosystem actually means

Google’s ecosystem is digital infrastructure already deployed:

Search, Android, Chrome, Workspace... Two decades of products working together because they all feed the same advertising engine. When Pichai declared “Code Red” after ChatGPT’s launch, the threat wasn’t losing to a better chatbot. It was users forming new habits that bypass Google Search entirely. Existential for a company where 80% of revenue still comes from ads.

Gemini’s job is making that linguistic shift impossible by embedding intelligence into surfaces users already touch daily.

The infrastructure advantage

Google runs on custom silicon: Tensor Processing Units, in their sixth generation as of 2025 and designed specifically for Google’s workloads. Pichai disclosed on the Q3 2025 earnings call that first-party models process over 7 billion tokens per minute through the API. That volume only works economically because Google doesn’t pay retail prices for compute.
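To put that figure in perspective, here is a rough back-of-the-envelope sketch in Python. The 7 billion tokens per minute comes from the earnings call remarks; the two per-million-token costs are placeholder assumptions chosen purely for illustration, not published prices from Google or anyone else.

```python
# Back-of-the-envelope throughput math for the 7 billion tokens/minute figure.
# The per-token costs used below are ASSUMED placeholders, not real prices.

TOKENS_PER_MINUTE = 7_000_000_000  # figure cited from the earnings call
MINUTES_PER_DAY = 24 * 60


def tokens_per_day(tokens_per_minute: int) -> int:
    """Scale a per-minute token rate to a full day."""
    return tokens_per_minute * MINUTES_PER_DAY


def daily_serving_cost(tokens_per_minute: int, usd_per_million_tokens: float) -> float:
    """Daily cost in USD at a given (hypothetical) cost per million tokens."""
    return tokens_per_day(tokens_per_minute) / 1_000_000 * usd_per_million_tokens


if __name__ == "__main__":
    assumed_retail_cost = 1.00    # hypothetical USD per million tokens
    assumed_in_house_cost = 0.50  # hypothetical USD per million tokens

    print(f"Tokens per day: {tokens_per_day(TOKENS_PER_MINUTE):,}")
    retail = daily_serving_cost(TOKENS_PER_MINUTE, assumed_retail_cost)
    in_house = daily_serving_cost(TOKENS_PER_MINUTE, assumed_in_house_cost)
    print(f"Daily cost gap under these assumptions: ${retail - in_house:,.0f}")
```

At that rate the API alone handles roughly 10 trillion tokens a day, so even a small difference in unit cost compounds quickly.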

This matters less for capability than for deployment. Gemini 3 benchmarks at the top across most tasks. The model quality is there. What separates Google is the ability to serve it everywhere users already are, at scale, without burning venture capital on compute costs. Microsoft and Meta bid against each other for NVIDIA hardware. Google designs its own chips.

“Google’s free tier is significantly more generous than competitors, likely because their internal compute costs are lower. OpenAI and Anthropic must charge for API access to cover cloud provider fees.” - Source: Comparing best AI tools for business 2025 - Alumio https://www.alumio.com/blog/comparing-best-business-ai-tools-2025

The cost advantage enables a strategy others can’t match:

free tiers of advanced intelligence that funnel users toward paid enterprise products. OpenAI and Anthropic can’t sustain similar loss-leader approaches while paying retail compute prices. The moat isn’t research excellence. It’s manufacturing capacity and vertical integration assembled over twenty years for different purposes that happens to solve today’s problem.

“We continue to make significant investments in our technical infrastructure, including our sixth-generation TPUs, which are powering Gemini across our products. Our first-party models now process over 7 billion tokens per minute through our API.” - Sundar Pichai, CEO of Alphabet and Google

The cannibalization

Google won’t say this plainly: AI Overviews are destroying the business model that funds everything else.

Zero-click searches, where users get answers without clicking any result, reached nearly 60% by late 2025, according to Semrush data tracking millions of queries. Publishers report referral traffic drops of 10% to 25% year-over-year. Major news sites collectively lost 60 million search referrals between February 2024 and February 2025.

The AI Mode toggle in Search makes this explicit: users can switch into a conversational interface that synthesizes answers across sources. It’s better search for the user. It’s catastrophic for publishers who depend on that click-through traffic.

Google is disrupting itself. Whether this is strategic self-disruption or a forced response, the answer is probably both. The logic: better to serve zero-click answers and keep users on Google than lose them to ChatGPT or Perplexity entirely.

Search monetization shifts from informational queries, where AI Overviews dominate, toward transactional queries such as shopping and booking, where AI acts as a high-value affiliate rather than just an ad platform.

This only works if the retention benefit outweighs the revenue loss.

Google’s betting it does, but the numbers aren’t public. What we see: ad revenue still growing despite rising zero-click rates. What we don’t see: how much faster it would grow without AI cannibalization, or how much market share they’d lose without it.

“Our Search and advertising business continues to deliver strong results. Google Search advertising revenues were $49.4 billion in Q3, up 12% year over year.” - Sundar Pichai, CEO of Alphabet and Google

The defensive posture reveals the stakes.

“Our focus is on making AI helpful for everyone through the products they already use every day” - Sundar Pichai, CEO of Alphabet and Google

“This is our most capable model yet, with breakthrough performance in reasoning, coding, and multimodal understanding” - Demis Hassabis, CEO of Google DeepMind

When Demis Hassabis talks about Gemini’s capabilities, he emphasizes intelligence. When Pichai talks about it on earnings calls, he emphasizes integration. Different audiences, different framing, same underlying priority: protect the core business.

Android as ambient intelligence layer

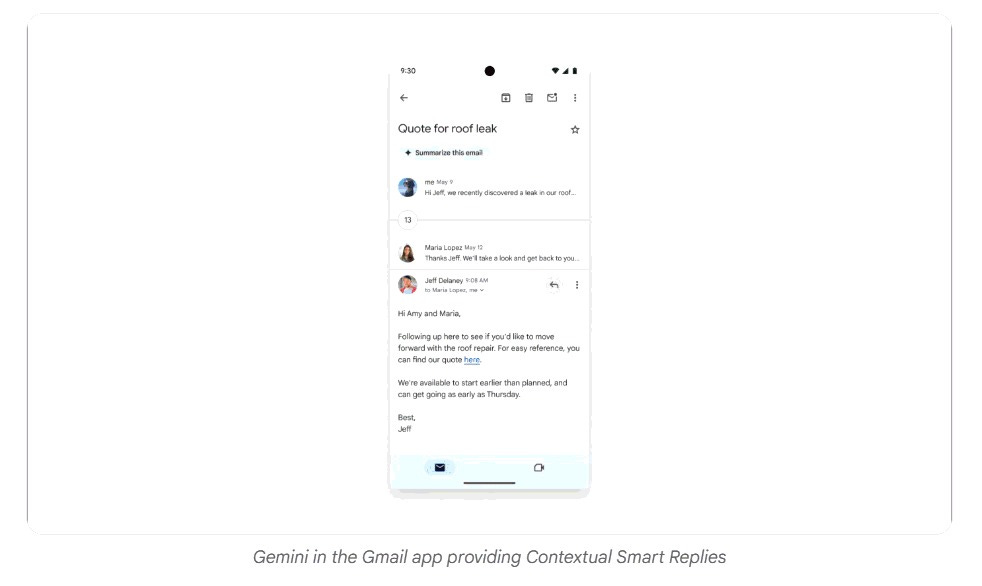

If Search is defense, Android is offense. Gemini replaced Google Assistant as the primary interaction layer on Android devices in 2025. This creates advantages that standalone AI products can’t match regardless of model quality.

Screen awareness:

because Gemini integrates into the OS, it reads what’s currently displayed. Say you’re looking at a restaurant menu in any app: you invoke Gemini and ask “Does this place have good vegan options?” It reads the screen, queries Maps and Search, and answers without leaving the app.

To use ChatGPT on a phone: unlock device, find app, open it. To use Gemini: long-press the power button. In the economy of attention, friction determines usage. Small difference, massive impact at scale.

The data from late 2025 shows a split pattern. ChatGPT sessions average over 14 minutes. Google Search averages 2-4 minutes according to industry benchmarks. Users go to ChatGPT for deep work, complex reasoning, extended conversations. They use Google for quick answers, navigation, factual lookups.

We can assume Google’s goal with Gemini 3 is closing that engagement gap. Bring Deep Research and reasoning capabilities into the ecosystem users already inhabit so those long-duration, high-value sessions happen on Google infrastructure instead of OpenAI’s. The integration is the weapon. If Gemini becomes the ambient intelligence layer users interact with dozens of times daily, model quality becomes secondary to availability.

Workspace integration: where defense becomes offense

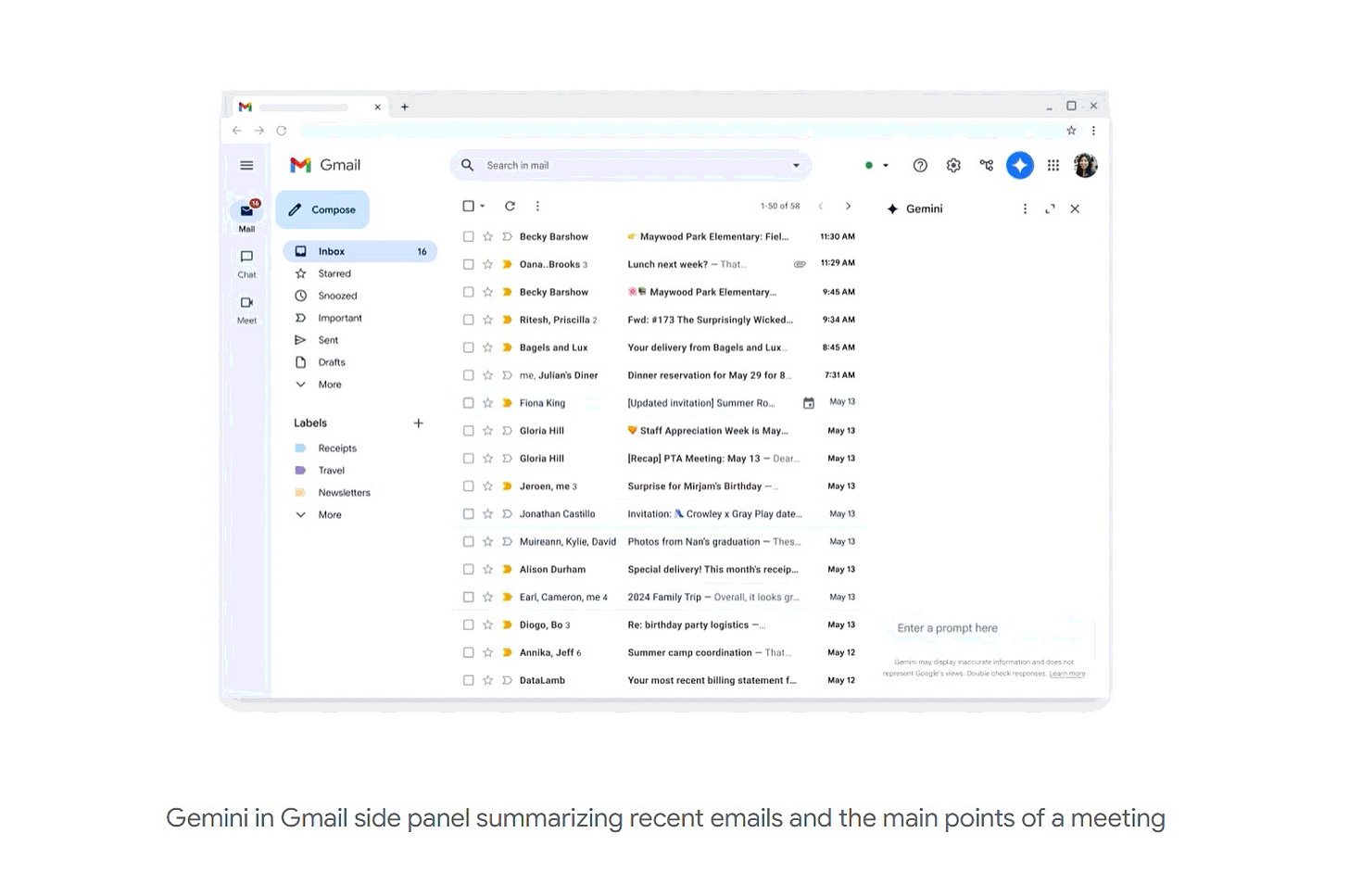

Consumer AI gets the headlines. Enterprise is where this becomes profitable. Embedding Gemini into Google Workspace transforms generic productivity software into an active intelligence platform.

Deep Research within Workspace demonstrates the integration advantage clearly.

You can tell it: “Draft a Q4 strategy document based on the emails from the marketing team, the Q3 financial slides in Drive, and the chat logs from the Genesis project channel.”

It reads your Gmail, it accesses your Drive, it pulls from Chat history. Zero setup required because the data already lives in Google’s infrastructure. OpenAI can’t replicate this because they don’t host your email and files. Microsoft Copilot attempts something similar with Microsoft Graph, but Google’s cloud-native architecture, where files are URLs rather than binaries, provides speed advantages.

The strategic value is switching costs.

A company using Workspace with Deep Research integrated isn’t just choosing better AI. They’re accepting that leaving would mean migrating their entire data infrastructure and retraining employees. By Q3 2025, over 30% of Workspace customers were using Gemini features. More than 70% of Cloud customers engaged with the AI stack.

One enterprise IT director told TechCrunch in October 2025:

“We evaluated Copilot and Claude. But our documents are already in Google. Our email is already in Google. Migration isn’t impossible, but the business case needs to be overwhelming. Incremental improvement in model quality doesn’t justify that disruption.”

Entrenchment through data gravity.

The developer and creative plays

Flow targets creators with Veo 3. It solves the consistency problem that makes AI video a novelty rather than a production tool. Define characters and settings, reuse them across shots. Native audio generation creates synchronized dialogue matching video action. The strategic link to YouTube is direct: lower barriers to quality content creation, ensure steady uploads, and secure platform dominance.

Nano Banana demonstrates Google’s evolving developer culture. The model leaked onto leaderboards under a codename. The community nicknamed it Nano Banana, and Google kept the name instead of forcing a corporate rebrand. Integration into Adobe Creative Cloud acknowledges that professionals work in Adobe tools. Google doesn’t need to replace Creative Cloud. They just need to power it.

When convenience beats innovation

The framing around Google’s strategy assumes defense. Protecting search revenue, preventing ChatGPT from becoming the new verb for looking things up, retrofitting AI into existing products before losing users. All true, but it misses the offensive opportunity.

Google isn’t just defending. They’re executing what industrial designer Raymond Loewy called the MAYA principle: Most Advanced Yet Acceptable. Give people the most sophisticated thing they’ll actually use, not the most sophisticated thing that exists.

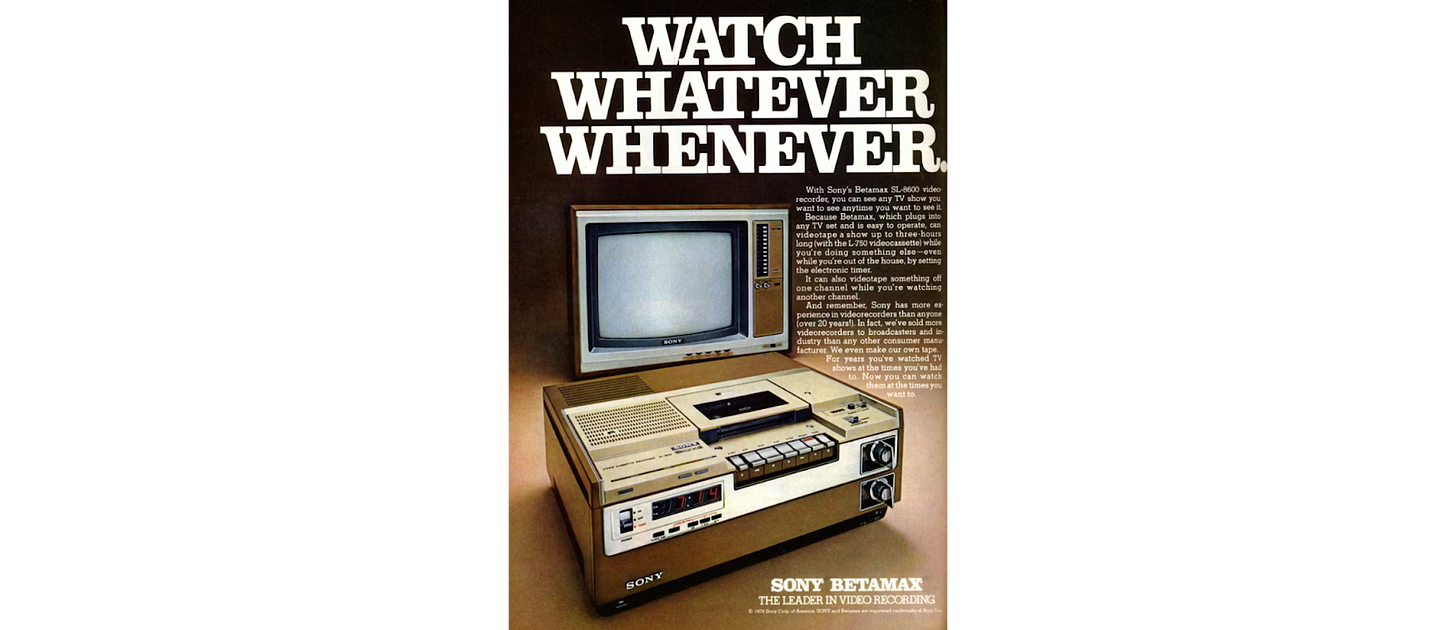

VHS beat Betamax despite worse picture quality because two-hour recording capacity fit how people actually watched TV. You could record a football game or full movie without changing tapes. Technical superiority lost to behavioral fit. Sony built the better format. JVC built the format people wanted to use.

ChatGPT is Betamax.

Superior in an isolated comparison: a standalone product with a cleaner interface and deeper engagement. Sessions average over 14 minutes because users go there specifically for complex work. But you have to remember to go there, open the app, context-switch from whatever you’re doing.

Gemini is VHS.

Everywhere you already are: long-press the power button on Android, type in the search bar, highlight text in Docs. The AI appears in the workflow without requiring deliberate navigation. Integration creates lower friction than excellence in isolation.

The question isn’t whether Google’s defending revenue, but whether convenience at scale bleeds out standalone competitors regardless of model quality. If users develop muscle memory for invoking AI through Google surfaces dozens of times daily, ChatGPT becomes the tool you use for specific deep work, not the default intelligence layer. Relegation to specialty use case, not replacement.

“ChatGPT shows higher session duration (14+ minutes) but requires deliberate navigation. Gemini shows lower session duration (5-7 minutes) but far higher frequency of use due to integration points.” - ChatGPT vs Microsoft Copilot vs Google Gemini: Full Report (July 2025 Update)
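The trade-off in that quote can be made concrete with a small Python sketch. It only uses the durations quoted above (14 minutes as a floor for ChatGPT, 5 to 7 minutes for Gemini); the function name is hypothetical, and total time spent is an assumed, simplistic proxy for engagement.

```python
# How many short, integrated sessions equal one long, deliberate session?
# Durations are the ones quoted above; "time spent" is an ASSUMED proxy
# for engagement, not a metric either company reports this way.


def break_even_sessions(long_session_min: float, short_session_min: float) -> float:
    """Number of short sessions needed to match the time of one long session."""
    if short_session_min <= 0:
        raise ValueError("short_session_min must be positive")
    return long_session_min / short_session_min


if __name__ == "__main__":
    chatgpt_minutes = 14  # quoted as "14+", so treat this as a lower bound
    for gemini_minutes in (5, 7):
        ratio = break_even_sessions(chatgpt_minutes, gemini_minutes)
        print(f"{gemini_minutes}-minute sessions needed per ChatGPT session: {ratio:.1f}")
```

By this measure, Gemini needs at least two to three sessions for every ChatGPT session to match total time spent, which is a plausible bar for an assistant that sits one long-press away.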

Microsoft’s Copilot faces similar pressure. It charges $30 per user monthly on top of Office 365 subscriptions. Google makes Gemini free for existing Workspace customers. Not an “introductory offer.” Permanently included. The business case for Copilot requires believing it’s $30 per month better than a free, integrated alternative. That’s a high bar when both models perform well.
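A quick Python sketch shows what that bar looks like on a budget line. The $30 monthly price is the one cited above; the seat count is a hypothetical example, and the function name is illustrative.

```python
# Annual cost of a per-seat AI add-on, compared with a bundled alternative.
# The $30 price is cited in the text; the seat count is a HYPOTHETICAL example.

COPILOT_USD_PER_USER_MONTH = 30


def annual_addon_cost(seats: int, usd_per_user_month: float) -> float:
    """Yearly spend for an add-on billed per user per month."""
    if seats < 0:
        raise ValueError("seats must be non-negative")
    return seats * usd_per_user_month * 12


if __name__ == "__main__":
    example_seats = 1_000  # assumed company size, for illustration only
    cost = annual_addon_cost(example_seats, COPILOT_USD_PER_USER_MONTH)
    print(f"{example_seats:,} seats: ${cost:,.0f} per year")
```

For a hypothetical 1,000-seat company, that is $360,000 a year that has to be justified by quality differences alone.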

OpenAI can build GPT-6 that’s measurably smarter. Anthropic can maintain Claude’s coding advantages. Neither changes the fundamental dynamic: if users encounter Google’s AI first, more often, with less friction, the better standalone product doesn’t get the repetitions that build habit.

Platforms win by being most convenient at the moment of intent.

Google has decades of infrastructure creating those moments: search queries, Android notifications, Workspace collaboration, YouTube consumption. Each is an opportunity to surface intelligence without asking users to remember a different destination.

The defensive posture is real, sure, but the offensive opportunity might be larger. Let competitors burn capital acquiring users one at a time, while Google has 2+ billion people already using products that can become AI surfaces. The integration strategy converts the existing user base into AI users without acquisition cost. That’s leverage.

“We’re seeing strong adoption of Gemini across Google Workspace, with over 30% of customers now using AI features” and “more than 70% of Cloud customers are engaging with our AI stack.” - Sundar Pichai, CEO of Alphabet and Google - Google Q3 2025 Earnings - CEO Remarks

What this actually costs

Google’s pivot to AI-first shows up in capital expenditure: $90+ billion projected for 2025, primarily for data centers and TPUs. After years of massive investment, they signaled a transition to a harvest phase in Q3 2025, with a first-ever $100 billion revenue quarter driven by Cloud growth and AI subscriptions.

The investment makes sense only if you understand what’s being protected. Search advertising generates roughly $175 billion annually. Workspace serves millions of enterprise customers. If AI integration prevents even 5% erosion in search share or customer loss to Copilot, it justifies billions in development costs. The business case is defensive: what revenue do we lose if we don’t do this?
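The defensive arithmetic is simple enough to sketch in Python. The $175 billion and 5% figures come from the paragraph above; the other erosion rates are illustrative assumptions, and the function name is hypothetical.

```python
# Revenue protected per year if AI integration prevents a given share of erosion.
# $175B and 5% are the figures from the text; other rates are ILLUSTRATIVE.

SEARCH_AD_REVENUE_USD = 175_000_000_000  # approximate annual figure cited above


def revenue_protected(annual_revenue_usd: float, erosion_avoided_pct: float) -> float:
    """Annual revenue retained by avoiding the given percentage of erosion."""
    if not 0 <= erosion_avoided_pct <= 100:
        raise ValueError("erosion_avoided_pct must be between 0 and 100")
    return annual_revenue_usd * erosion_avoided_pct / 100


if __name__ == "__main__":
    for pct in (1, 5, 10):  # 5 is the article's figure; 1 and 10 are assumptions
        protected = revenue_protected(SEARCH_AD_REVENUE_USD, pct)
        print(f"{pct}% erosion avoided: ${protected / 1e9:.2f}B per year")
```

At the 5% mark, that is $8.75 billion a year in search revenue alone, before counting Workspace customers retained.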

But the impact on everyone else reveals the actual dynamics. Publishers describe 2025 as the year of the traffic crisis. Zero-click searches keep users on Google but eliminate referrals. The symbiotic relationship where publishers provide content for indexing in exchange for traffic is fracturing. Regulatory scrutiny is intensifying. If Google uses its monopoly position to advantage its AI while reducing traffic to competitors, that invites antitrust action.

The calculation Google is making: regulatory risk develops more slowly than competitive displacement. Better to integrate aggressively and deal with legal challenges over years than cede the market to ChatGPT over months.

The pattern emerging

Google’s strategy with Gemini mobilizes their entire stack, silicon to software, to surround users with intelligence embedded in products they already use. Infrastructure through TPUs lowers the cost of serving AI at scale. Android and Chrome distribute intelligence without requiring app downloads. Workspace transforms AI from a novelty into a productivity requirement embedded in existing workflows.

The bet isn’t having the best model in isolation, but being the AI users encounter most often with the least friction.

Integration over standalone excellence. Convenience over feature leadership when both options work well.