How Google’s Gemma could settle the AI copyright debate

Local models mean individual accountability, and it’s great news for intellectual property rights

Key takeaways:

Local models shift IP accountability from corporations to end users. That makes the copyright chain auditable.

Every tool needed to regulate AI intellectual property already exists. Licensing, derivative work law, provenance tracking, transparency labeling. This is assembly, not invention.

Creators regain leverage when models need fresh licensed content to stay relevant.

The debate stays unsolvable only if you treat it as one problem. It is several problems we have already solved in other industries.

The only questions worth asking are who gets paid, under what terms, and what happens when someone does not comply. The legal system is great at that.

Index

Local models mean individual accountability, and it’s great news for intellectual property rights

Why local AI is a big deal

Gemma could be the last piece of a toolkit we already have at our disposal

Every piece exists. This is within reach.

A colleague and I were discussing how intellectual property laws will evolve. It is a hot-button issue in the AI space: both a moral discussion about value and a practical one about legality, and it seems impossible to settle.

Impossible, I said? Until this week.

Because four days ago (as of when I am scheduling this article), Google released Gemma 4. Fully open source, Apache 2.0, runs on a phone. A local model. More importantly, local models create individual accountability: they shift IP responsibility from massive corporations to the end user.

This is massive for AI IP laws.

Why local AI is a big deal

Businesses are adopting local AI for reasons that have nothing to do with copyright. Privacy, data security, knowledge management, regulatory compliance. A medical app that processes patient images on-device and never sends raw data to the cloud. A law firm whose case history stays on its own servers. These are nonnegotiable operational requirements driving adoption regardless of the IP debate.

But, more important for this discussion, local AI also makes the IP chain auditable. When a model runs on your machine, trained or augmented with libraries you selected, producing output you distribute, you know what went in and you can prove what was licensed. That changes the entire IP conversation.

Local AI models have been around for a while now, but Gemma 4 signals broader business adoption. That’s why I think Gemma is the last piece of a puzzle most people didn’t realize was almost complete. The AI intellectual property problem looks unsolvable only if you treat it as one problem. It is actually several problems we have already solved, in different industries, using tools that already exist.

Gemma could be the last piece of a toolkit we already have at our disposal

Licensed content libraries

We’ve had them for decades. Stock images, fonts, music samples, 3D assets. The stock photography market alone is a multibillion-dollar industry built entirely on licensing creative work for commercial use. Getty Images and Shutterstock announced a merger valued at roughly $3.7 billion in January 2025. The infrastructure exists. The payment rails exist. The legal precedents exist. A local model that needs fresh content to stay relevant creates the same dynamic: if you want current, commercially defensible output, you license the library. That library needs updating, so creators have leverage. The Spotify model applies: licensing doesn’t need to be perfect. It needs to be more convenient than piracy.

Derivative work law

The Warhol v. Goldsmith decision narrowed fair use for commercial derivatives. Music law has de minimis sampling thresholds. The U.S. Copyright Office confirmed that AI-assisted work with sufficient human expressive contribution can be copyrighted. These legal tools exist and can be calibrated for AI-generated output.

(The question of what is derivative in AI is such a deep topic, I’ll address it in a future article.)

Provenance tracking

C2PA, the Coalition for Content Provenance and Authenticity, provides the technical standard. Over 6,000 members, including Google, Adobe, Microsoft, OpenAI, Meta. LinkedIn and TikTok already display Content Credentials. The EU AI Act mandates AI content transparency starting August 2026. And underneath the wreckage of the NFT hype cycle, the core technology remains useful: an immutable ledger that can tag content with a verifiable record of who created it, who licensed it, and what rights attach. We went through the speculation so the infrastructure could mature.
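To make the ledger idea concrete, here is a minimal sketch of a hash-chained provenance record. It is not the C2PA format or any real blockchain; the names `ProvenanceEntry`, `append_entry`, and `verify` are hypothetical, and the creators and licensees are made up. The only point is that each entry commits to the one before it, so rewriting history becomes detectable.

```python
import hashlib
import json
from dataclasses import dataclass, asdict, replace
from typing import List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


@dataclass(frozen=True)
class ProvenanceEntry:
    content_sha256: str  # fingerprint of the asset itself
    creator: str         # who created it
    licensee: str        # who licensed it
    rights: str          # what rights attach
    prev_hash: str       # hash of the previous entry, which chains the ledger

    def entry_hash(self) -> str:
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


def append_entry(ledger: List[ProvenanceEntry], content: bytes,
                 creator: str, licensee: str, rights: str) -> List[ProvenanceEntry]:
    """Return a new ledger with one more entry linked to the current tail."""
    prev = ledger[-1].entry_hash() if ledger else GENESIS
    fingerprint = hashlib.sha256(content).hexdigest()
    return ledger + [ProvenanceEntry(fingerprint, creator, licensee, rights, prev)]


def verify(ledger: List[ProvenanceEntry]) -> bool:
    """Check that every entry points at the hash of the entry before it."""
    prev = GENESIS
    for entry in ledger:
        if entry.prev_hash != prev:
            return False
        prev = entry.entry_hash()
    return True


# Hypothetical usage: two licensed assets, then an attempt to rewrite the first.
ledger = append_entry([], b"photo bytes", "Alice", "Studio X", "commercial use")
ledger = append_entry(ledger, b"song bytes", "Bob", "Studio X", "editorial use")
tampered = [replace(ledger[0], licensee="Someone Else")] + ledger[1:]

intact_ok = verify(ledger)
tampered_ok = verify(tampered)
```

In this sketch the untouched ledger verifies and the edited one does not, because the second entry still points at the original first entry. A real system would also need signatures and an externally published copy of the latest hash, since a chain like this cannot by itself detect a change to its final entry.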

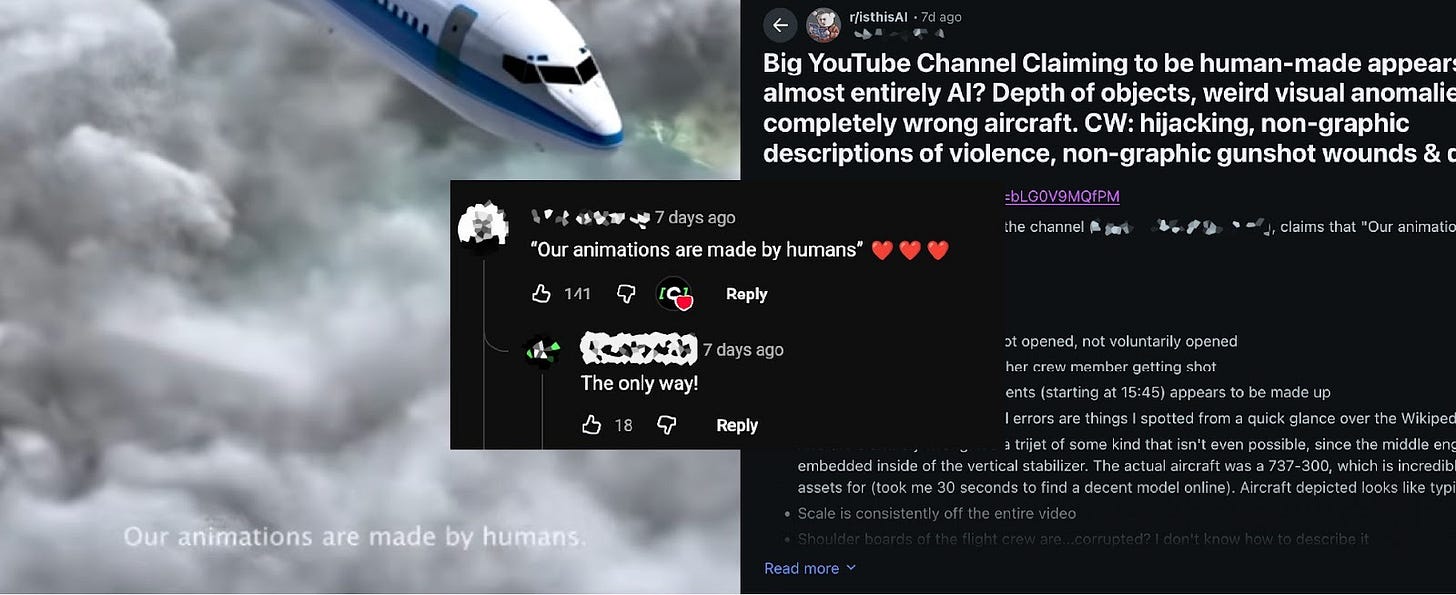

Transparency labeling

First result when googling ‘Human made YouTube’ in 12/2025: The creator advertises the ‘human-made’ label. The viewer looks for it and praises it. The suspicion that the mention may be deceitful is discussed on Reddit.

Already emerging organically. At least eight certification initiatives across the UK, US, and Australia are building “AI-free” and “human-made” labels. Films are adding credits. Publishers are stamping books. The market is creating a premium for verified human work the same way “organic” and “fair trade” labels created premiums in food. No philosophical consensus required. Just a credible signal.

Local model accountability

Software licensing already requires compliance documentation for every dependency. SBOMs (software bills of materials) list what went into a product. A local AI model is a dependency. A content library is a dependency. It is the same logic with the same compliance structure, extended to a new kind of tool.
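As a rough illustration of treating models and content libraries as dependencies, here is a minimal sketch of a manifest audit. It does not follow a real SBOM format such as SPDX or CycloneDX; the `Dependency` fields, the `audit` function, and every entry in the example manifest are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional, Set


@dataclass(frozen=True)
class Dependency:
    name: str
    kind: str                     # e.g. "model" or "content-library"
    license: Optional[str]        # declared license, if any
    license_proof: Optional[str]  # e.g. a license file path or invoice ID


def audit(manifest: List[Dependency], allowed_licenses: Set[str]) -> List[str]:
    """Return one human-readable problem per non-compliant dependency."""
    problems = []
    for dep in manifest:
        if dep.license is None:
            problems.append(f"{dep.name}: no license declared")
        elif dep.license not in allowed_licenses:
            problems.append(f"{dep.name}: license {dep.license!r} not on the allow-list")
        elif dep.license_proof is None:
            problems.append(f"{dep.name}: no proof of license on file")
    return problems


# Hypothetical manifest for a product built on a local model.
manifest = [
    Dependency("local-model", "model", "Apache-2.0", "LICENSE.txt"),
    Dependency("stock-photo-pack", "content-library", "commercial-stock", None),
    Dependency("scraped-images", "content-library", None, None),
]

problems = audit(manifest, {"Apache-2.0", "commercial-stock"})
for line in problems:
    print(line)
```

Here the model passes, the stock pack is flagged for missing proof, and the scraped set is flagged for having no license at all. That is the kind of answer an auditor could ask an individual user to produce.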

Every piece exists. This is within reach.

Tech companies benefit from framing AI as so unprecedented that existing law cannot apply. That framing buys time.

Artists benefit from framing the debate around effort and emotional value. That framing generates sympathy.

Neither produces a system. And the longer the debate stays on philosophical ground, the longer the extraction continues without structure.

The only questions worth asking are: who gets paid, under what terms, and what happens when someone doesn’t comply? Answering them requires only one thing, and local models will trigger it: traceability.