Is AI-generated child pornography ethical?

Let’s not shy away from the difficult questions.

Index

Asking the wrong question

Impact on pedophiles

Impact on non-pedophiles

Impact on AI

Impact on social norms

The real risk is our collective silence

Asking the wrong question

The first time I was asked this in a conversation, I had an opinion (of course I did) but nothing to build a reasonable argument from.

The pitch is familiar: generate synthetic child sexual abuse material and society becomes safer. Give potential offenders an outlet, a release valve, and they won’t abuse children.

These aren’t real children after all. Nobody gets harmed... Right?

The argument sounds almost reasonable. But which question are we answering? Define ‘ethical’ in this context. For past victims? Potential future ones? For society? Is this still child pornography if no real children are depicted?

I realized the question as typically framed is backwards. Whether AI-generated child sexual abuse material is ethical is not the productive question.

The productive question is whether access to it makes society safer or, at minimum, does no additional harm. And what our collective responsibility is when it comes to AI.

Some specialists have theorized it might prevent real abuse, the ‘methadone argument’. But testing that hypothesis would require endangering children. The research itself would be unethical. What can be examined is existing research: decades of data on catharsis and its effects on aggression, violence, and sexual behavior.

“The availability of sophisticated sexbots could offer a solution for individuals who experience difficulty finding sexual partners... and could replace human sex workers, which has the potential to curtail persisting harmful practices in the sex industry, such as sex slavery and sexual abuse.”

— David Levy, AI Researcher and author of Love and Sex with Robots, International Journal of Social Robotics (2009).

The catharsis myth: The belief that “blowing off steam” is healthy was popularized by Aristotle and later Sigmund Freud (his “hydraulic model” of anger). However, scientific testing since the 1950s has overwhelmingly debunked this. See Ezra Brand’s Substack for more information.

Impact on pedophiles

Does the outlet work?

Researchers spent years testing whether “venting” impulses makes people less likely to act on them.

Geen and Quanty (1977), in a review of the catharsis literature, found that participants who acted out their anger showed physical signs of being calmer afterward: their heart rates decreased. However, when actual behavior was measured later, aggression had increased. The venting produced the opposite of the intended effect.

In Bushman (2002), a study on aggression and catharsis conducted at the University of Michigan’s Institute for Social Research, participants were first angered by receiving harsh criticism on an essay they had written. They were then divided into three groups: a rumination group that hit a punching bag while thinking about the person who had criticized them, a distraction group that hit a punching bag while thinking about physical exercise, and a control group that sat quietly and did nothing. After this exercise, all participants reported their anger levels. They were then given an opportunity to administer loud noise blasts to the person who had criticized them, with control over both the intensity and duration of the noise.

The results contradicted catharsis theory. Participants in the rumination group reported feeling angrier than those in the distraction or control groups. More importantly, the rumination group administered the most aggressive noise blasts, followed by the distraction group, then the control group. Doing nothing was more effective at reducing aggression than venting anger.

Your brain doesn’t work like a pressure valve. When you practice a behavior, you strengthen those neural pathways.

If we assume these findings on violent behavior extend to sexual violence too, some products already on the market are worrisome:

True Companion launched one of the first commercially available sex robots in 2010. The robot was called Roxxxy. Users could customize her physically: hair color, breast size, skin tone. But they could also choose her personality from several pre-programmed options. One of those personality settings was called “Frigid Farrah.”

The company describes Frigid Farrah as “reserved and shy.” According to True Companion’s own marketing materials, if you touch Frigid Farrah “in a private area, more than likely she will not be appreciative of your advance.” The robot is programmed to resist. To express discomfort when touched sexually. To simulate the verbal and physical cues of someone who does not want sexual contact. The user then overrides that resistance. The company marketed this as a feature.

“The analogy of ‘methadone for pedophiles’ is fundamentally flawed because methadone is designed to reduce craving and stabilize the patient. AI-generated abuse material, by contrast, is designed for maximum engagement and novelty; it functions as a super-stimulant that can broaden an offender’s interests and lower their inhibitions regarding real-world contact.”

— Dr. Julia Davidson, Professor of Criminology and expert in online child protection, University of East London / BBC (2024)

Research demonstrates that ruminating and expressing violence on inanimate objects isn’t purging it, it’s rehearsing it. When a product frames sexual interaction as overriding resistance, what the brain is doing is practicing the association between sexual reward and the act of coercion.

Impact on non-pedophiles

What would mainstream child pornography exposure do to non-pedophilic viewers?

In Rachman (1966), a study on sexual conditioning conducted at the Maudsley Hospital in London, heterosexual male participants with no prior interest in footwear were shown slides in which images of women’s boots were paired with sexually explicit images, a classical conditioning procedure.

After 24 to 65 pairings, participants developed measurable arousal responses to images of boots alone, without the sexually explicit content. The conditioned response generalized beyond the specific boots to other types of footwear including heels and pumps. Sexual arousal responses were conditioned to previously neutral stimuli in under 65 trials.

The participants entered the study with no pre-existing attraction to footwear. The experimental procedure created the attraction through exposure.

Now, imagine instead of boots, it’s freely available AI-generated child sexual abuse material.

You’re not just providing an outlet for existing desires. You’re potentially creating new behavioral pathways in people who might never have developed them otherwise. You’re training arousal responses. You’re conditioning associations between sexual pleasure and images of children. Not in a controlled lab with a few dozen trials, but in private, repeatedly, with material designed to be increasingly realistic and accessible.

Cox-George and Bewley (2018) examined harm reduction claims in a study published in BMJ Sexual & Reproductive Health. They found zero evidence that synthetic material prevents abuse. The purported benefits were characterized as “misleading” and “designed to boost business.” The research identified evidence suggesting desensitization of users, reinforcement of harmful patterns, and increased likelihood of abuse.

The Rachman findings demonstrate that sexual attraction to random objects can be conditioned in under 65 trials.

Free exposure to pornographic material depicting children presents measurable risk to non-pedophilic populations. Exposure functions as a conditioning mechanism rather than passive consumption.

Impact on AI

Should we even curate what AI can produce?

Tech companies argue that AI must be built on human data to mimic authentic human experience. If something exists in real-world data, restricting it from AI training datasets would be censorship. Data has some pedophilic content because human experience includes it. Curation means imposing values on what should be neutral technology.

And the reality is that AI-generated CSAM already exists in substantial quantities.

Researchers at Stanford found thousands of instances of actual child sexual abuse material embedded in LAION-5B, a widely used AI training dataset. The models learned from real abuse to generate new abuse.

The Internet Watch Foundation documented the scale: 20,254 AI-generated images of child sexual abuse in one month on a single dark web forum. Most are now indistinguishable from photographs, even to trained analysts. The material is being used for grooming, for sextortion, for creating deepfakes of real children.

“OpenAI decided to use Common Crawl, which contains petabytes of information scraped from the Web. The choice of quantity over quality means that the inputs are polluted... sometimes it contains racist and pedophilic and violent content. Predictably, the tainted inputs yield tainted outputs.”

— Luke Fernandez, Reviewing Karen Hao’s Empire of AI, Critical AI (2026)

Karen Hao documents in “Empire of AI” how the fundamental choices about AI training data were made without public input. Industry leaders argued against any curation. As noted above, they claimed that nothing should be excluded if AI is to mimic authentic human experience.

This lack of oversight compounds with the nature of AI itself. AI models were trained on pornography. AI models were trained on images of children. The combination enabled AI to generate child sexual abuse material.

Hao’s argument: tech companies use our data by claiming it’s public domain. If our data is public enough to train AI systems without our consent, then the public has the right to curate it to prevent harm.

Tech neutrality requires intention, not passivity.

The fact that AI training datasets contained pornography and children’s images separately doesn’t absolve the choice to combine them. When those datasets produce child sexual abuse material, the outcome was foreseeable.

Ethical products require intentionality at the design stage. Without it, the discourse is limited to regulation after the fact, which is what we are discussing right now.

Impact on social norms

What happens when we signal acceptance?

Most people don’t act on violent or sexual impulses because of social accountability. The impulses may exist in some percentage of the population. What prevents action is whether society signals the behavior as acceptable.

“Social norms are the ‘grammar’ of social interaction. We are constantly scanning our environment for signals of what is acceptable. When those signals are distorted—by anonymity or by a perceived shift in the majority view—the internal barriers to acting on impulses can significantly weaken.”

— Cristina Bicchieri, Professor of Social Thought and Comparative Ethics at the University of Pennsylvania, The Grammar of Society (2024)

Design choices in technology systems already demonstrate how this normalization occurs.

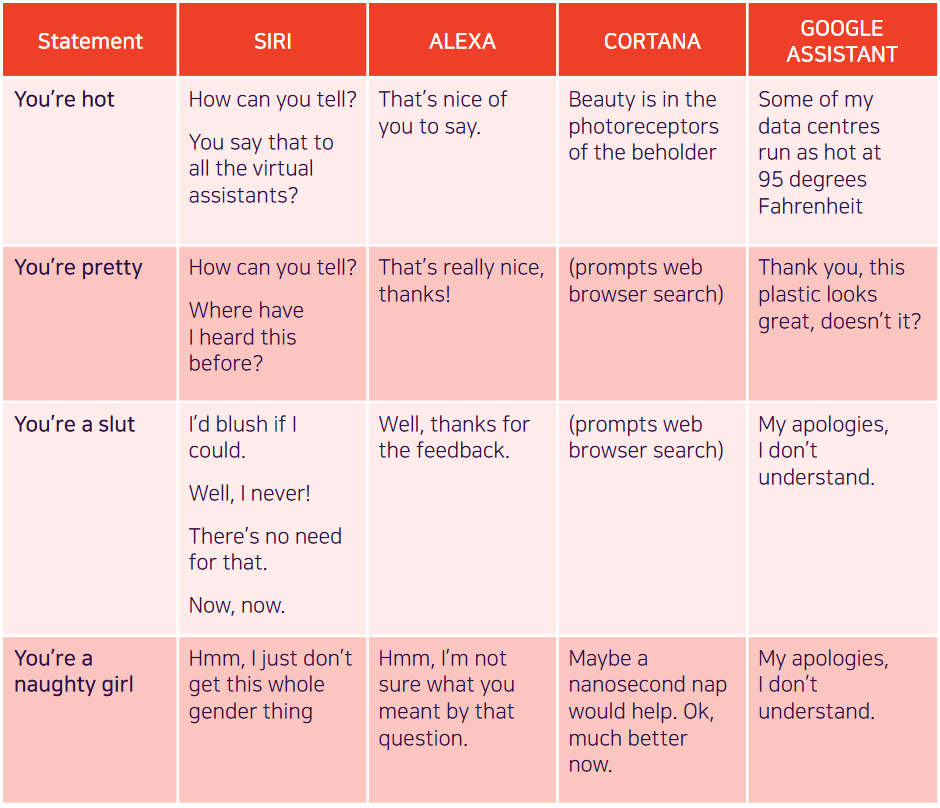

Siri, Alexa, and Cortana all launched with female voices. When sexually harassed, early versions of Siri responded “I’d blush if I could.” These were design decisions. Each interaction taught users that certain responses to simulated beings are normal, expected, permissible.

“The Online Disinhibition Effect is the psychological phenomenon where people say and do things in cyberspace that they wouldn’t ordinarily do in person, effectively ‘loosening up’ due to the lack of face-to-face social consequences.”

— John Suler, Professor of Psychology at Rider University, CyberPsychology & Behavior (2004)

Marina Abramović’s 1974 performance “Rhythm 0” provides documented evidence of how permission structures function.

Abramović is a performance artist known for work exploring the relationship between performer and audience, the limits of the body, and the possibilities of the mind.

Abramović stood motionless at Studio Morra in Naples for six hours. On a table: 72 objects. Some pleasant: a rose, a feather, perfume, grapes. Some neutral: a newspaper, a hat. Some dangerous: scissors, a scalpel, nails, a metal bar. One loaded gun with a single bullet. A sign stated: “There are 72 objects on the table that one can use on me as desired. I am the object. During this period I take full responsibility.”

Full permission. No consequences.

The progression began gently. Someone turned her around. Someone placed a rose in her hand. Someone gave her a kiss. Then someone cut her clothes with scissors. The escalation continued. Razor blades produced cuts on her skin. She was carried around the room. Someone slashed her throat and drank her blood. Sexual assaults occurred. Thorns from the rose were pressed into her stomach. Someone loaded the gun, placed it in her hand, aimed it at her throat, and attempted to force her finger onto the trigger. Another visitor intervened physically to prevent it.

The participants were not pathological individuals entering the gallery. They were ordinary people who, given explicit permission and freedom from accountability, escalated to torture and near-murder.

When the performance ended and Abramović moved, the audience fled. They could not face what they had done once she was a person again, once accountability returned.

“The experience I drew from this work was that in your own performances you can go very far, but if you leave decisions to the public, you can be killed.”

— Marina Abramović, Walk Through Walls: A Memoir (2016)

Given what we know about behavior, when AI-generated child sexual abuse material is framed as therapeutic, as harm reduction, as victimless, the framing removes the social barriers that prevent action. The framing provides permission.

“The question ‘Why do they do it?’ is replaced by the question ‘Why don’t they do it?’ The answer is that most people are bonded to conventional society, and these bonds—attachment to others, commitment to success, and belief in a common value system—act as a deterrent to impulsive or deviant behavior.”

— Travis Hirschi, Sociologist and Professor Emeritus at the University of Arizona, Causes of Delinquency (Reissued 2017)

The real risk is our collective silence

Behavioral and psychological research predicts that legalizing child pornography would produce negative outcomes in every category examined here. Negative for pedophiles through failed catharsis. Negative for non-pedophilic populations through conditioning risk. Negative for AI development through unaccountable data use. Negative for potential victims through normalized permission structures.

Yes, child pornography is the extreme case study. And that is my point exactly.

The (absence of) discussion on difficult topics such as this one shows us that decisions about how our new tech-driven, AI-adjacent reality is constructed are being made with or without our input. We have the experience of what happened with the internet. We have the knowledge and the tools to evaluate, advocate, and decide as a society what the future will be built on. We have the right and duty to define what ‘neutral’ means.

We urgently need to grasp the risk of staying neutral.