Meta’s ecosystem independence: breaking free from smartphones - Wearables

ECOSYSTEM THINKING - ARTICLE 3/4

This article is the third of a four-part analysis of the rise of ecosystem thinking in tech:

PART 1 / The rise of ecosystem thinking

PART 2 / Apple’s ecosystem across time: designing for tomorrow - Liquid Glass

PART 3 / Meta’s ecosystem independence: breaking free from smartphones - Wearables

PART 4 / Google’s ecosystem leverage: embedding AI in existing products - Gemini

Meta controls the world’s largest social graph: 3 billion users across Facebook, Instagram, WhatsApp. None of it mattered when Apple changed a single privacy setting in iOS 14. App Tracking Transparency cost Meta $10 billion in ad revenue that year, demonstrating a fundamental problem: platform tenants live at the mercy of platform owners. The $75 billion Reality Labs has burned since isn’t product development spending. It’s insurance against irrelevance.

“When you build for other people’s platforms, you’re at their mercy... We’re building Reality Labs so we own the platform for the next computing paradigm.”

— Mark Zuckerberg, CEO of Meta (Reuters interview, October 2024)

You would be wrong to think that Meta is building smartphone accessories. They’re building smartphone replacements: a companion ecosystem where devices communicate peer-to-peer, complement each other’s capabilities, and gradually make phones optional rather than central. That would fundamentally disrupt the hub-and-spoke model we’ve lived with for 15 years.

“This is the first time I’ve put anything on that genuinely feels like it could be post-phone.”

- Andrew Bosworth, CTO of Meta (The Verge, “Meta’s Orion AR glasses are the most impressive tech demo I’ve seen”)

Meta is building an entire computing ecosystem designed to function without smartphones at all.

The current state

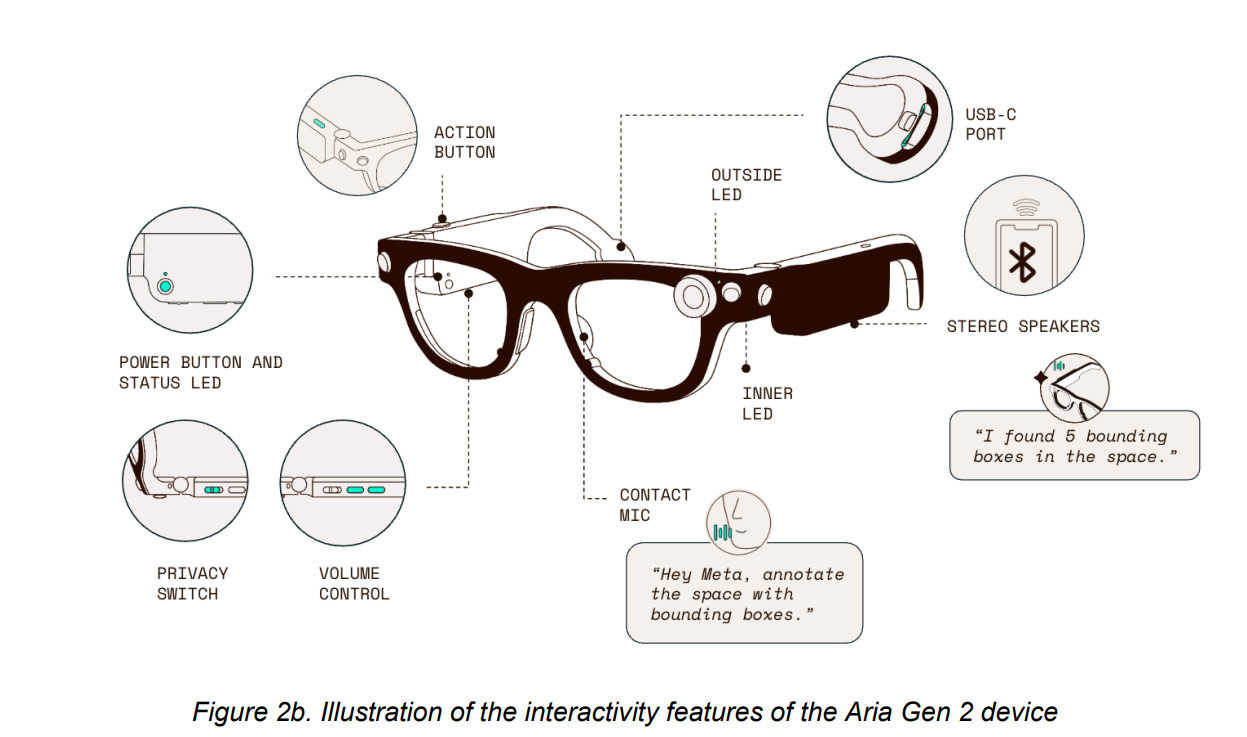

Let’s have a look at the current reality: Ray-Ban Meta glasses have sold over 2 million units, showing genuine consumer traction. But these devices require smartphone connectivity for their most compelling features: the glasses connect via Bluetooth to the Meta View app for setup, AI features, live streaming, and settings. You can capture photos and videos independently with 32GB of onboard storage, but the experience feels neutered without active phone pairing.

Meta is treating this as an infrastructure phase: building user habits, refining form factors, validating use cases, and establishing market presence while core technologies mature toward independence. The phone dependency is bridge architecture, not the destination.

Orion is Meta’s strategic statement

The Orion prototype shows where this is heading: an architecture that deliberately excludes smartphones. It uses a wireless compute puck instead of phone pairing, multiple custom chips in the glasses handling low-latency calculations, and an EMG Neural Band detecting nerve signals for gesture control. The result is an input, compute, and output ecosystem with no smartphone involvement, by design.

“Orion is a standalone product, at least in terms of needing a phone.”

— Andrew Bosworth, CTO of Meta (Meta Connect 2024)

For Orion, Meta is not relying on off-the-shelf Qualcomm chips. They’re designing custom silicon optimized for their use cases. This level of vertical integration only makes sense if you’re building a platform, not a peripheral.

Form factor reveals priorities: under 100 grams, a look that plausibly passes for regular glasses, and the largest field of view in the smallest AR glasses form to date. Meta obsessed over these constraints because wearable computing only succeeds if people actually wear the devices. Although I am sure they would have found an audience among fans of 80s media, smart glasses that look like cyborg goggles would never achieve mainstream adoption.

The shaping of the companion ecosystem

The roadmap shows a coordinated multi-device strategy where different form factors serve different purposes but work together:

Smart Glasses (Ray-Ban Meta, Oakley): Audio, camera, basic AI, voice control. The current generation requires phones, but we can expect future generations to become increasingly independent (as their range increases). An educated guess would be that they will replace the phone as the central hub of the ecosystem.

AR Glasses (Orion, Ray-Ban Display): Heads-up displays, spatial computing, contextual AI. Explicitly designed as standalone with compute pucks.

VR Headsets (Quest 3, Quest 4): Immersive experiences, work environments, entertainment. They already function independently, and Meta is adding inter-device communication. VR is somewhat its own category, and only arguably a wearable (at least in the context of this article), but Meta positions it as a key part of the ecosystem.

Neural Wristbands: Gesture control, biometric sensing, input mechanism without visible cameras or awkward voice commands.

“We’re building a family of devices that work together as a unified computing platform. Smart glasses, AR glasses, VR headsets, and input devices like neural wristbands—each serves different purposes but they’re all designed to complement each other.”

— Mark Zuckerberg, CEO of Meta (Meta Connect 2024 keynote)

These devices are components of a unified computing platform. Meta is building horizontal integration across wearables rather than vertical integration around smartphones.

AI as connective tissue

If devices are nodes, AI is the network making the companion ecosystem coherent. Meta AI works across glasses, Quest headsets, and Meta apps, not as fragmented implementations but as one unified AI that follows you across contexts.

“You go through your day and what’s cool is you can query your day. So you can say, ‘Hey, today in our design meeting, which color did we pick for the couch?’... your day becomes queryable... to make it agentic, where it’s like, ‘Hey, I see you’re driving home, don’t forget to swing by the store.’”

— Andrew Bosworth, CTO of Meta (discussing future AI capabilities at Meta Connect 2024)

This requires always-on sensors providing contextual awareness and cloud synchronization enabling state management. The architecture mirrors WhatsApp’s multi-device approach, where each companion device connects independently rather than routing through one primary device.
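To make the difference concrete, here is a minimal sketch of the two topologies. It is a toy model and not Meta’s or WhatsApp’s actual implementation: the device names, the `routes` tables, and both functions are hypothetical, and “online” simply means the device is powered and connected.

```python
# Hypothetical device names. Each route lists the hops a device's traffic
# must pass through before it reaches the cloud.
HUB_AND_SPOKE = {"phone": [], "glasses": ["phone"], "wristband": ["phone"]}
COMPANION = {"glasses": [], "wristband": [], "headset": []}


def can_sync(device: str, routes: dict[str, list[str]], online: set[str]) -> bool:
    """A device syncs only if it and every hop on its route are online."""
    return device in online and all(hop in online for hop in routes[device])


def syncing_devices(routes: dict[str, list[str]], online: set[str]) -> list[str]:
    """Return the devices that can still reach the cloud, sorted by name."""
    return sorted(d for d in routes if can_sync(d, routes, online))


# The phone is switched off; only the two wearables are online.
online_now = {"glasses", "wristband"}
print(syncing_devices(HUB_AND_SPOKE, online_now))  # []
print(syncing_devices(COMPANION, online_now))      # ['glasses', 'wristband']
```

In the hub-and-spoke table, losing the phone takes every wearable down with it. In the companion table, each device keeps working on its own, which is the property that makes the phone optional.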

The AI strategy solves a fundamental problem: how do you make multiple devices work together without complex manual configuration? Well, with intelligence that adapts to context. Glasses mean visual information. A headset means immersive experiences. The Neural Band interprets gestures appropriately. This ambient, context-aware computing requires a sophisticated AI understanding of the user’s intentions, environment, and goals.

It is worth noting the strategic contrast among Silicon Valley’s heavyweights: Apple is conditioning users for spatial computing through interface design with Liquid Glass, while Meta is building an actual hardware ecosystem for distributed computing.

With AI democratizing interface creation, differentiation comes from personality, design risk, and brand identity expressed through interaction models. Meta is betting that the interaction model itself shifts from phone-centric to ambient-wearable.

What we’re witnessing now is the rise of ecosystem thinking as an industry strategy. Different bets, different timelines, all recognizing that the platform game has fundamentally changed.

Platform lock-in through openness

Meta learned from Android and iOS that platform success requires developer ecosystems.

Their strategy mirrors Android’s approach: use openness to drive volume, and control the services layer where monetization occurs. Let’s look at the recent timeline:

April 2024: Quest OS rebranded to “Meta Horizon OS” and opened to third-party manufacturers (ASUS, Lenovo, Xbox). This commoditizes hardware while Meta controls the operating system, app store, and AI services.

September 2025: The Wearables Device Access Toolkit gives third-party developers access to camera and sensor data from Ray-Ban Meta glasses. Early partners include Be My Eyes and Eventbrite. This creates platform lock-in through the ecosystem: developers build for Meta’s wearables because that’s where users are, and users stay because that’s where apps are.

By opening the platform, Meta avoids “walled garden” criticism while achieving similar lock-in through network effects and developer investment. Apps built for Meta Horizon OS won’t automatically work on competing platforms, and developers who invest in Meta’s toolkits face switching costs.

This extends to its AI strategy, too:

Llama models are open-source, enabling rapid adoption and establishing Meta’s stack as the default for AI applications. Open Compute shares infrastructure innovations. These moves build goodwill while ensuring Meta’s technologies become embedded in industry foundations.

“Open source AI is the path forward. By making Llama open, we enable rapid innovation while establishing our stack as the foundation layer that everyone builds on.”

— Mark Zuckerberg, CEO of Meta (July 2024)

Production scale signals serious commitment

Strategy requires execution.

Meta’s partnership with EssilorLuxottica extends through a multi-decade agreement, with production capacity reaching 10 million units annually by 2026. This is far beyond experimental: it’s an industrial-scale manufacturing commitment.

“Smart glasses will materially replace most of the functionality that today we have embedded into our phones.”

— Stefano Grassi, CFO of EssilorLuxottica (investor presentation, Q4 2024)

Reality Labs reported $4.2 billion in operating losses in 2024 (staggering!) but also 40% revenue growth. Meta is willing to sustain billions in annual losses because they view this as infrastructure investment for the next computing platform.

Market projections support the volume bet.

Smart glasses shipments grew 210% year-over-year in 2024, with 60% compound annual growth predicted through 2029. Meta targets “hundreds of millions and eventually billions” of units. Meta missed the smartphone race and was unable to catch up with Apple and Android, but it won’t be burned twice: it will fight with all its might to dominate the next digital landscape. These numbers point to smartphone-scale ambitions rather than a niche market, and they suggest the bet might be paying off.
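To put the 60% compound annual growth figure in perspective, here is a rough back-of-the-envelope sketch. It assumes growth compounds once a year over five periods (2025 through 2029) from a 2024 baseline. The baseline year, the period count, and the `growth_multiplier` helper are my assumptions for illustration, not part of the forecast itself.

```python
def growth_multiplier(annual_rate: float, years: int) -> float:
    """Total growth factor after compounding annual_rate for the given years."""
    return (1 + annual_rate) ** years


# Assumption: five compounding periods, 2025 through 2029, from a 2024 baseline.
multiplier = growth_multiplier(0.60, 5)
print(f"{multiplier:.1f}x")  # 10.5x
```

Under those assumptions, annual shipments in 2029 would be roughly ten times the 2024 level, whatever the starting volume is.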

The privacy and accessibility fault lines

From a user standpoint, privacy concerns are… substantial.

Ray-Ban Meta glasses feature always-on cameras with recording indicators that may not be visible in all lighting. U.S. users can no longer opt out of cloud voice recording storage. Audio from voice commands is used for AI training. These capabilities, multiplied across multiple wearable devices with sensors, create comprehensive surveillance infrastructure, whether intentional or not.

The “queryable day” vision requires capturing, processing, and storing vast amounts of personal data. Who owns this data? How long is it retained? What prevents unauthorized access? These are fundamental questions about the companion ecosystem’s social implications.

Accessibility presents different challenges.

Voice control and AI can help users with disabilities, as partnerships like Be My Eyes demonstrate. But the devices presume certain capabilities: for example, visual setup through smartphone apps, navigating complex pairing, and comfort with always-on sensors. In practice, multi-device complexity creates barriers.

Meta’s unique position

Meta’s companion ecosystem strategy reveals their positioning.

Unlike smartphone manufacturers extending into wearables, Meta is building wearables first and working backwards.

They have no existing smartphone business to protect, which I would argue is a clear advantage: they can commit fully to a post-smartphone vision. The social graph provides natural wearables use cases, the AI investment applies directly to ambient computing, and the openness attracts developers without threatening the core business.

On the other hand, that means they have no hardware manufacturing expertise or supply chain ownership and depend on partners for critical components. The brand also carries reputation challenges from privacy concerns. On top of that, their ecosystem vision requires changing fundamental user behaviors, arguably a critical risk in any industry.

The $75 billion investment reflects both opportunity scale and challenge difficulty. Meta’s betting they can establish a new computing platform from scratch, competing against incumbents with vastly more hardware experience and ecosystem lock-in.

“My biggest concern is that the market gets capped somehow... with [competitors] specifically is that they have their devices so locked down that they can self-preference a ton.”

— Andrew Bosworth, CTO of Meta (Meta Connect 2024 interview)

Context: Bosworth was discussing concerns about Apple potentially limiting Meta’s ability to compete on iOS devices, and how closed ecosystems could constrain the wearables market.

Meta’s response in anticipation: make its ecosystem the volume leader through accessibility and developer friendliness, then control the AI and services layer where monetization and lock-in actually occur.

Meta’s electric dream

Meta is pursuing a companion ecosystem where devices communicate and complement each other rather than orbiting smartphones.

The sequencing is the strategy: first establish wearable form factors, create compelling use cases, scale manufacturing, and attract developers, then eliminate phone dependency as technology and market align. The execution is real, but whether it succeeds depends on variables Meta can’t control: regulation forming in real time, cultural acceptance of always-on sensors, paradigm wars with Apple and Google, and the brand trust required for continuous data capture. The landscape is reorganizing too quickly for certainty, and to be honest, that’s what makes it incredible to witness.

But the approach might work precisely because Meta’s not waiting. By building hardware at scale now, they’re constructing the future rather than predicting it.

This is a bet, but one educated through pain: fifteen years of platform dependency, billions lost to policy changes, and the lesson learned from missing smartphones.

“Our goal is to get these glasses on hundreds of millions of people, and eventually billions. We’re not building a niche product. We’re building the next computing platform.”

— Mark Zuckerberg, CEO of Meta (Meta Connect 2024)