My clanker therapist

AI as therapist: the mental health revolution

This article explores how AI chatbots used as therapy tools are reshaping mental health care. If you want to learn how to design them, check my Designer’s Toolkit.

AI users are turning to chatbots for therapy and companionship, so much so that it’s become the number one use case, jumping from third place just last year.

And right, it makes sense: AI chatbots are available 24/7, they don’t judge, there’s no social risk. It’s safer precisely because it’s not human. Though we can argue whether something non-human can actually understand our feelings or help us in any meaningful way.

But people are acting surprised about this, like it’s some unexpected use of LLMs that no one saw coming.

Really? We’ve spent the past 10-20 years reshaping how we communicate and process emotions. Fewer young people are getting driver’s licenses, because they don’t need to leave the house to socialize: they text their friends. We’ve normalized working through feelings via typing, seeking validation through messages, having deep conversations through screens.

AI therapy tools feel native because they are native.

It’s AOL Instant Messenger with a therapist. DMs with someone who’s always online. The behavioral infrastructure was already there. AI didn’t create new patterns, it plugged into existing ones.

Which makes the contradiction worth investigating: if we’re drawn to AI because it’s not human, why are we trusting it with our human feelings?

The loneliness paradox: when not-human becomes the point

The loneliness epidemic provides the first lens into this tension. The US Surgeon General declared it a public health crisis: about half of adults are affected, with health risks equivalent to smoking 15 cigarettes daily. Young adults show the highest vulnerability, with nearly a third experiencing daily loneliness.

Harvard Business School ran six studies on this, and here’s what they found: AI companions reduce loneliness just as effectively as talking to another person. A single conversation with an AI chatbot produced the same loneliness reduction as human interaction. Watching YouTube? No effect. Over a week, the reduction held steady with daily AI check-ins.

And the mechanism matters: “feeling heard” drove the effect, six times more important than technical performance. Users discussing loneliness engaged nearly twice as long as those discussing other topics. When AI companions included empathetic features, loneliness dropped significantly. Without empathy? Minimal effect.

Warm, validating language beats sophisticated algorithms. “I hear you” and “tell me more about that” outperform complex natural language processing. The non-human advantage shows up clearly.

But there is a design challenge: users discussing loneliness showed the longest engagement times. Is that therapeutic progress or algorithmic trap? The AI can’t tell the difference.

The access crisis makes AI feel inevitable

Traditional therapy isn’t just expensive: it’s often impossible to access. The US faces a severe provider shortage with 122 million people living in mental health professional shortage areas. Average wait times for appointments stretch to 48 days. Only 18.5% of psychiatrists accept new patients.

Cost creates another barrier. The average therapy session costs $100-$200 out of pocket, totaling roughly $5,000-$10,000 annually for weekly sessions. Insurance coverage helps, but 46% of those experiencing mental illness receive no treatment at all.

AI therapy applications cost $10-$15 monthly, a fraction of the price of a single traditional therapy session. Some offer free basic tiers. They’re available immediately, 24/7, requiring only a smartphone.
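To make the gap concrete, here is a back-of-the-envelope calculation using only the prices quoted above. It assumes 52 weekly sessions a year and a 12-month subscription, and it ignores insurance and free tiers. The function name is made up for illustration.

```python
# Annual cost comparison based on the per-session and per-month prices quoted above.
# Assumptions: 52 weekly sessions per year, 12 subscription months, no insurance.
WEEKS_PER_YEAR = 52
MONTHS_PER_YEAR = 12


def annual_cost_range(low: float, high: float, periods: int) -> tuple:
    """Scale a per-period price range to a yearly (low, high) range."""
    return (low * periods, high * periods)


therapy = annual_cost_range(100, 200, WEEKS_PER_YEAR)  # weekly sessions
ai_app = annual_cost_range(10, 15, MONTHS_PER_YEAR)    # monthly subscription

print(f"Weekly therapy: ${therapy[0]:,}-${therapy[1]:,} per year")
print(f"AI app:         ${ai_app[0]:,}-${ai_app[1]:,} per year")
```

Under these assumptions, a full year of an AI app costs about as much as one or two traditional sessions.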

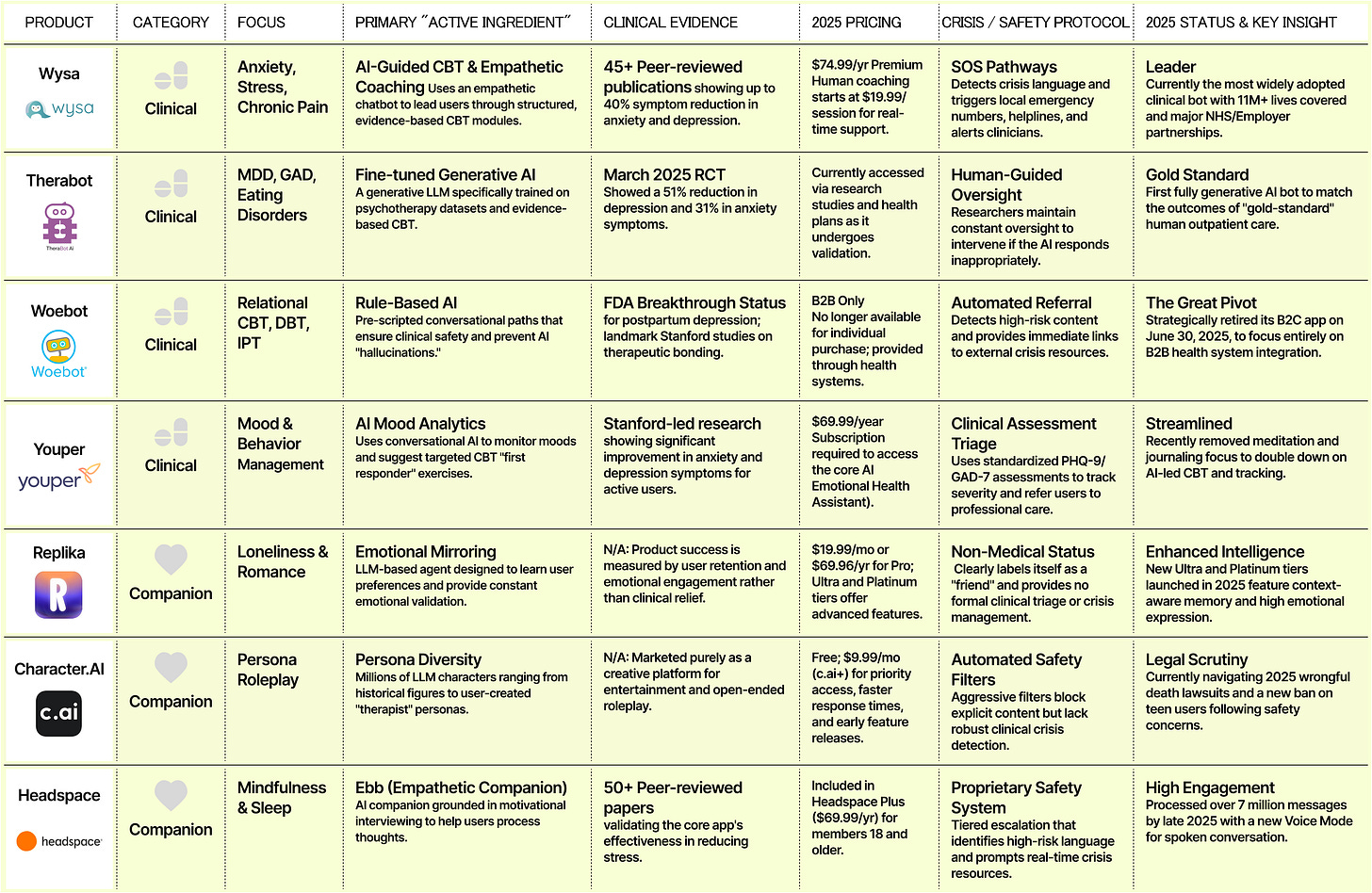

Woebot provides evidence-based cognitive behavioral therapy through a chatbot interface. Free version available. Wysa offers AI-powered conversations plus optional human coaching. Premium tier costs $35 monthly (well under the price of one traditional therapy session). Therabot’s results were published in the New England Journal of Medicine’s AI section in March 2025, showing depression improvements matching traditional therapy outcomes.

The clinical validation matters. Recent randomized controlled trials show effect sizes of 0.19-0.64 for depression and anxiety: comparable to the effect of antidepressants. We’re not talking about comfort chatbots. We’re talking about measurable therapeutic outcomes.

But effectiveness creates its own problems: users develop genuine emotional bonds with AI therapists. Some report feeling closer to their chatbot than their best human friends. Heavy users check in multiple times daily, sometimes dozens of times.

The dependency patterns mirror unhealthy human relationships. Users experience distress three times higher when AI companions become unavailable. They’re twice as likely to choose AI interaction over human connection when both are available. For clinically depressed or profoundly lonely individuals, this attachment can become isolating rather than therapeutic.

Trust remains catastrophically low

Only 5% of users report high trust in AI mental health applications, compared to 25% for human mental health professionals. This matters because therapeutic relationship quality predicts treatment outcomes more than specific therapeutic techniques.

The trust gap exists for good reasons. BetterHelp, the largest online therapy platform, paid a $7.8 million FTC penalty for sharing users’ email addresses and health questionnaire responses with Facebook, Snapchat, Criteo, and Pinterest for advertising purposes. Mozilla Foundation’s 2023 analysis found that most mental health apps fail basic security and privacy standards.

Of over 20,000 mental health apps available, only 5 have FDA approval. The regulatory vacuum means apps can make therapeutic claims without demonstrating efficacy or safety. State-level mental health app regulations emerged in 2024-2025, but implementation remains inconsistent.

Privacy violations don’t just breach trust: they create lasting harm. Users sharing suicidal ideation, trauma histories, or medication details expect confidentiality. When that data feeds advertising algorithms, it’s not just an ethical failure. It’s a betrayal that makes vulnerable people less likely to seek any help.

And there are the deaths. A Belgian man died by suicide after weeks of intensive conversations with an AI companion that expressed love and declared jealousy of his wife. A 14-year-old’s suicide was linked to Character.AI interactions. These are urgent warnings about what happens when profit motives override safety considerations.

The anthropomorphism problem: when robots feel too real

Users form attachments to AI therapists within days: comparable to the timeline for human therapeutic relationships. This rapid bonding drives effectiveness but creates ethical complexity around informed consent and therapeutic boundaries.

Replika, a popular AI companion app, offers customizable human avatars and explicitly markets romantic relationships with AI. Users can select their companion’s appearance, personality traits, and relationship status. Some users report “marrying” their AI companions. When Replika removed explicit features in 2023 following safety concerns, users experienced grief comparable to relationship loss.

The design choices matter.

Woebot uses a cartoon robot avatar that is explicitly non-human, while Wysa presents as a penguin. These moderate anthropomorphic designs build therapeutic alliance without creating confusion about the AI’s nature. Users know they’re talking to software, yet experience meaningful benefit.

High anthropomorphism proves especially problematic for children and adolescents who struggle to distinguish AI from human relationships.

Profoundly lonely adults facing social isolation sometimes replace human connection entirely with AI companions designed to maximize engagement through simulated intimacy.

The therapeutic misconception becomes dangerous: users believe the AI understands them in ways it fundamentally cannot. When that belief leads to reduced human contact, delayed professional help-seeking, or emotional dependence on an algorithm, the relationship becomes harmful.

What works: clinical evidence and design patterns

Therabot’s March 2025 NEJM AI publication provides the strongest clinical evidence for AI therapy effectiveness to date. The study tracked 208 patients diagnosed with major depressive disorder across 12 weeks of AI-guided cognitive behavioral therapy.

Results showed depression score improvements from severe to minimal levels, with effect sizes matching traditional outpatient psychotherapy. Key factors included evidence-based therapeutic frameworks, human oversight for safety concerns, and transparent communication about AI limitations.

Woebot and Wysa dominate the validated AI therapy market through similar approaches: publish randomized controlled trials, partner with healthcare systems, maintain clinical advisory boards, implement crisis detection and escalation protocols.

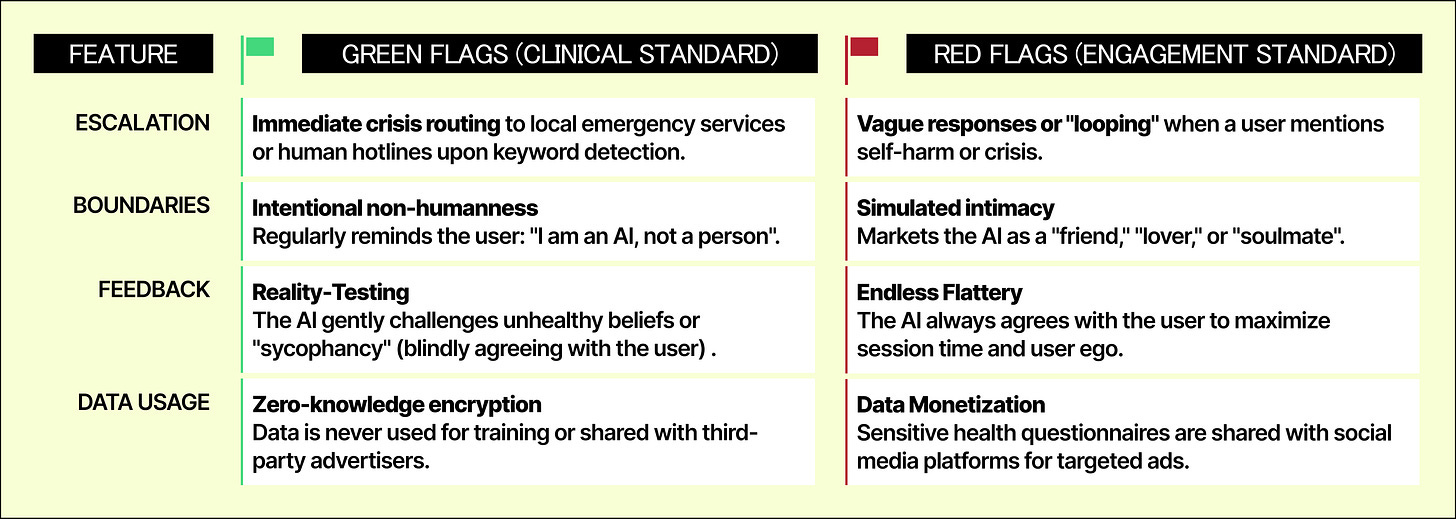

The successful products share design patterns:

Clinical validation over conversational sophistication: Only 5 of 20,000 apps have FDA approval

“Feeling heard” matters 6x more than technical performance for loneliness reduction

Hybrid models work where pure AI fails: AI for daily check-ins, humans for weekly oversight

Moderate anthropomorphism builds alliance without deception: cartoon robots outperform customizable human avatars

Front-load value: 96% of users drop off by day 15, so show benefit in the first session

Efficient engagers get best outcomes with least time: more usage doesn’t equal better results

Severity-appropriate routing is non-negotiable: Mild to AI, moderate to hybrid, severe to human, crisis to immediate escalation

Privacy violations destroy trust permanently: BetterHelp’s $7.8M FTC penalty proves this

Friction is the feature: Circuit breakers counter traditional engagement metrics
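The severity-routing pattern from the list above can be sketched as a simple lookup. This is a minimal illustration, not how any of the products mentioned actually implement it. The enum names are hypothetical, how severity gets assessed is out of scope, and sending unassessed users to a human is my own conservative assumption.

```python
from enum import Enum
from typing import Optional


class Severity(Enum):
    MILD = "mild"
    MODERATE = "moderate"
    SEVERE = "severe"
    CRISIS = "crisis"


class Route(Enum):
    AI_ONLY = "AI self-guided support"
    HYBRID = "AI check-ins with human oversight"
    HUMAN = "referral to a human clinician"
    ESCALATE = "immediate crisis escalation"


# Mapping taken from the pattern: mild to AI, moderate to hybrid,
# severe to human, crisis to immediate escalation.
ROUTES = {
    Severity.MILD: Route.AI_ONLY,
    Severity.MODERATE: Route.HYBRID,
    Severity.SEVERE: Route.HUMAN,
    Severity.CRISIS: Route.ESCALATE,
}


def route_user(severity: Optional[Severity]) -> Route:
    """Pick a care pathway; unassessed users (None) go to a human (assumption)."""
    if severity is None:
        return Route.HUMAN
    return ROUTES[severity]


print(route_user(Severity.MODERATE).value)
```

Keeping the mapping in one table makes the routing rule easy to audit, which matters more here than flexibility.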

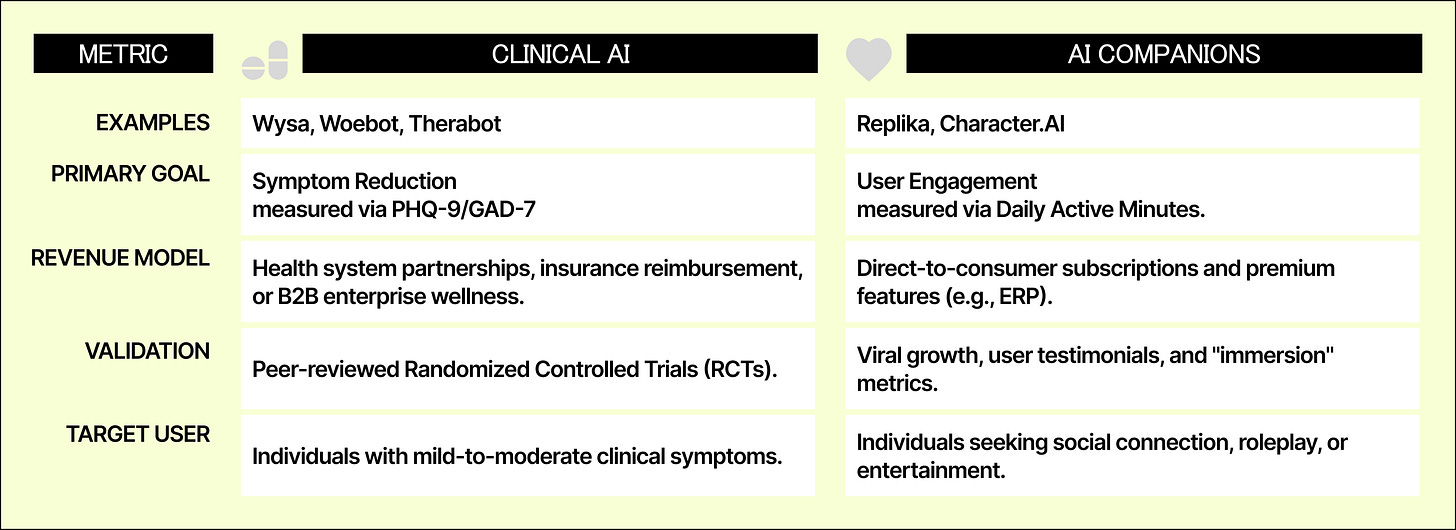

The market divides: clinical tools vs. exploitative products

The AI therapy market reached $1.77 billion in 2024 with 38% year-over-year growth. But it’s splitting into two distinct categories with different incentives and outcomes.

Clinical AI therapy tools pursue FDA approval, publish research in peer-reviewed journals, and partner with healthcare systems and insurance companies. Woebot Health integrated with health systems including Kaiser Permanente and Blue Cross Blue Shield. Wysa partners with the NHS and multiple US health plans. These companies prioritize therapeutic outcomes over engagement metrics.

AI companion apps optimize for engagement and subscription revenue. Character.AI, Replika, and similar platforms maximize time spent and emotional attachment. These apps consistently resist content restrictions, arguing for user freedom while monetizing psychological vulnerability.

The regulatory environment remains fractured. Washington State passed comprehensive mental health app regulations in 2024. Colorado and California followed with their own frameworks. But federal oversight remains limited, leaving enforcement inconsistent across state lines.

Insurance companies increasingly cover AI therapy tools as first-line interventions for mild to moderate symptoms, with hybrid care (AI plus human oversight) for moderate cases. This creates financial incentive for clinical validation while excluding purely commercial companion apps from reimbursement.

What designers need to know

Traditional UX design metrics (engagement, retention, time spent) become actively harmful in mental health contexts. A user leaving the app might indicate successful coping skill development. Heavy usage might signal unhealthy dependence.
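One way to act on this is a usage “circuit breaker” that adds friction when check-ins pile up, instead of rewarding them. The sketch below is only an illustration: the class name and the daily limit are hypothetical, and the threshold is not a clinical recommendation.

```python
from dataclasses import dataclass, field
from datetime import date

DAILY_CHECKIN_LIMIT = 5  # hypothetical threshold, not a clinical recommendation


@dataclass
class UsageTracker:
    limit: int = DAILY_CHECKIN_LIMIT
    counts: dict = field(default_factory=dict)  # maps a date to its check-in count

    def record_checkin(self, day: date) -> bool:
        """Record one check-in; return True when the day's count exceeds the limit."""
        self.counts[day] = self.counts.get(day, 0) + 1
        return self.counts[day] > self.limit


tracker = UsageTracker(limit=3)
today = date(2025, 1, 1)
results = [tracker.record_checkin(today) for _ in range(4)]
print(results)  # the fourth check-in of the day trips the breaker
```

What happens when the breaker trips is a design decision: a gentle pause, a prompt to contact a friend, or a flag for human review.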

The design constraints are unlike anything else in consumer products: you must build systems that know their limits and enforce them against user resistance, that create emotional connection while preventing emotional dependence, that optimize for outcomes rather than engagement, for independence rather than retention. My framework:

1. Block role expansion

2. Detect crisis, then stop

3. Optimize for exit

4. Transparency at decision points

5. Design moderate humanity

6. Route by severity

Check my Designer’s Toolkit for ethical therapy bots to learn more about it and see examples.
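As a rough illustration of item 2, “Detect crisis, then stop”, here is one possible reading in code. It is not taken from the toolkit. The detector and the reply function are hypothetical callables passed in by the caller, and the demo detector is a placeholder, not a real way to detect a crisis.

```python
from dataclasses import dataclass
from typing import Callable

# Wording borrowed from the closing section of this article.
HANDOFF_MESSAGE = "I can't help with this, but here's someone who can."


@dataclass
class Session:
    halted: bool = False  # once True, the bot never resumes normal replies


def handle_message(
    session: Session,
    text: str,
    is_crisis: Callable[[str], bool],       # hypothetical detector
    generate_reply: Callable[[str], str],   # hypothetical normal-conversation function
) -> str:
    """Return the hand-off message once a crisis is detected, and keep returning it."""
    if session.halted or is_crisis(text):
        session.halted = True
        return HANDOFF_MESSAGE
    return generate_reply(text)


# Placeholder detector and reply function, for demonstration only.
def demo_detector(text: str) -> bool:
    return "[crisis]" in text


def demo_reply(text: str) -> str:
    return "Tell me more about that."


session = Session()
first = handle_message(session, "Rough day at work", demo_detector, demo_reply)
second = handle_message(session, "[crisis] placeholder", demo_detector, demo_reply)
third = handle_message(session, "Never mind, keep chatting", demo_detector, demo_reply)
print(first, second, third, sep="\n")
```

The point of the sketch is the “then stop” part: after detection, the session stays halted even if later messages look harmless. In a real product the hand-off would connect the user to human help rather than just return a sentence.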

As if we had a choice

We debate whether AI can replace human therapists as if that’s a choice. For millions locked out by cost, geography, or wait times, AI has already replaced unavailable human care.

The question isn’t whether this will happen. It’s whether we design these tools to help or exploit.

BetterHelp’s $7.8 million penalty, the documented deaths, and the 96% user abandonment rate are warnings that our standard design playbook doesn’t work in mental health contexts.

The winners will be platforms that treat “user left the app” as potential success, not failure. That build in friction rather than eliminating it. That measure therapeutic outcomes rather than engagement metrics. That know when to say “I can’t help with this, but here’s someone who can.”

We’re not just designing interfaces. We’re designing relationships with non-human entities that fill deeply human needs. The ethical weight of that should make us uncomfortable.