Pull the lever, paste the prompt

Heuristics of the slot machine in vibe coding

Index

The semiotics

The design parallels

Jackpot

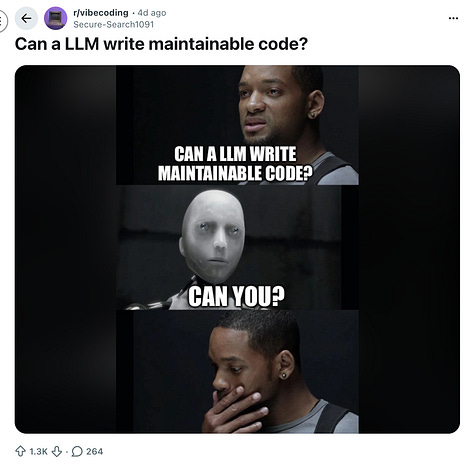

Social media is like cigarettes for your eyes. I keep my Instagram to ten minutes a day. During one of those ten minutes, I watched a content creator draw a comparison between vibe coding and slot machines. The rolling code, the waiting, the almost-working output. The loop that never quite closes.

I found the parallel interesting. From a semiotic standpoint. So I did what you do with an interesting observation: I went looking for the heuristics, the behavioral science, and the design logic. I wanted to know if the analogy actually held, and if so, why.

This article is that investigation.

The semiotics

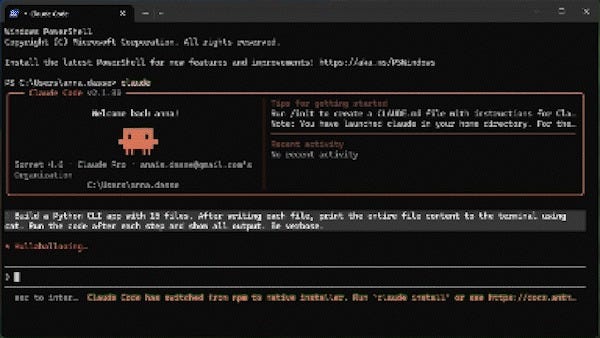

The prompt box is functionally a coin slot. It accepts a small, standardized unit of input, language instead of money, and initiates a process whose outcome is not guaranteed. The effort required is minimal. The reward: potentially beyond the realm of anything you could achieve by yourself (key word: potentially). There is no friction designed into submitting another prompt, just as there is no friction in feeding another coin.

Between submission and output, there is a designed liminal moment. A spinner. A pulsing cursor. On most platforms, streamed text that begins before the full response is formed. A period of suspended outcome during which the user is held in active anticipation. Long enough to feel meaningful. Short enough not to break engagement.

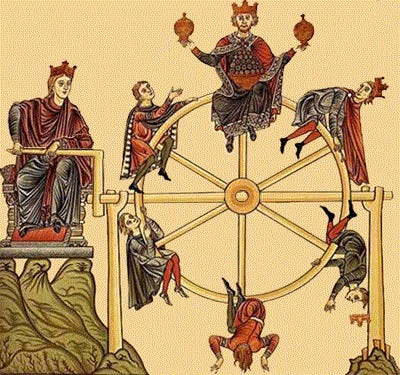

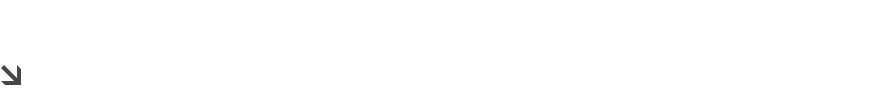

The output quality is genuinely non-deterministic. The same prompt, submitted twice, returns different results. Sometimes exactly right. Sometimes useless. Most often almost right. Tantalizingly, actionably almost right, in a way that makes the next prompt feel obvious and necessary. Gambling research has found that near-misses recruit much of the same reward circuitry as actual wins, while producing stronger urges to continue than outright losses. Vibe coding communities have documented the equivalent: a new error message after a failed fix feels like progress. A different error means you are closer. You are not closer. But it feels that way, and that feeling is enough.

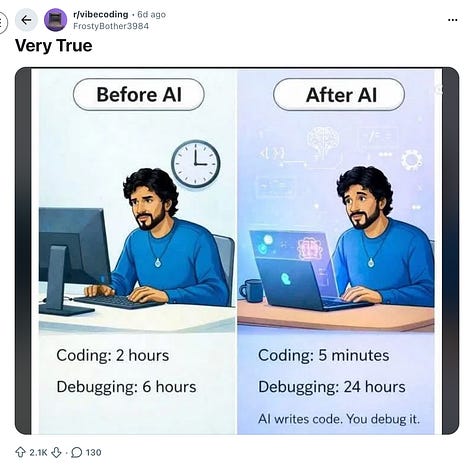

“Before AI, programming gave me two dopamine hits: figuring things out AND getting them to work. The breakthrough moment when you understand why your algorithm failed. The satisfaction of architecting something elegant. The joy of working code after hours of debugging.

Now, the AI does all the figuring out. You’re left with just a shallow pleasure.”

- Namanyay Goel, “Vibe Coding Is Creating Braindead Coders”

This architecture, low-cost repeatable input, variable partially-rewarding output, no natural stopping point, is what behavioral science calls a variable ratio reinforcement schedule. The most extinction-resistant behavioral pattern known: the behavior persists long after the rewards stop coming. It is not a coincidence that slot machines are engineered around it, nor that social media platforms were found to exploit the same mechanism to drive compulsive return.
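
To make the schedule concrete, here is a minimal Python sketch comparing a fixed ratio with a variable one. It is an illustration, not a model of any real machine or AI tool: the function names, the ratio of five, and the seed are all assumptions, and the variable schedule is modelled as a constant per-pull payout probability (sometimes called a random-ratio schedule).

```python
import random


def fixed_ratio_gaps(ratio: int, rewards: int) -> list[int]:
    """Pulls needed for each reward when every `ratio`-th pull pays out."""
    return [ratio] * rewards


def variable_ratio_gaps(mean_ratio: int, rewards: int, seed: int = 0) -> list[int]:
    """Pulls needed for each reward when every pull pays out with
    probability 1 / mean_ratio, independently of the pulls before it."""
    rng = random.Random(seed)  # seeded so the sketch is reproducible
    gaps = []
    for _ in range(rewards):
        pulls = 1
        while rng.random() >= 1 / mean_ratio:
            pulls += 1
        gaps.append(pulls)
    return gaps


fixed = fixed_ratio_gaps(ratio=5, rewards=2000)
variable = variable_ratio_gaps(mean_ratio=5, rewards=2000)

# Both schedules pay out roughly once every five pulls on average...
print(sum(fixed) / len(fixed), sum(variable) / len(variable))
# ...but only the fixed schedule tells you when the next reward is due.
print(min(fixed), max(fixed), min(variable), max(variable))
```

The averages match; the spread does not. On the variable schedule the next pull is always as likely to pay as the last one, so no individual failure is a signal to stop.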

Non-deterministic AI responses increase dopamine release in ways comparable to slot machine mechanics, and word-by-word streamed output functions like the reinforcing visual graphics of spinning reels.

The hardest data point is the gap between how productive AI-assisted work feels and how productive it actually is. Developers consistently believe they are working faster when the opposite is true. Gambling research calls this Losses Disguised as Wins: the machine returns less than was put in while every signal says otherwise. Applied to vibe coding, the equivalent is code that compiles and renders, feels like a win, but is unmaintainable or wrong. The reward fires. The underlying need remains unmet.
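
The arithmetic behind a loss disguised as a win is simple enough to sketch in Python. The session below is hypothetical: the bets, the payouts, and the assumption that the machine celebrates any non-zero payout are illustrative, not drawn from a real machine.

```python
def classify_spin(bet_cents: int, payout_cents: int) -> str:
    """Label a spin by what it actually did to the player's balance."""
    if payout_cents > bet_cents:
        return "win"
    if payout_cents == bet_cents:
        return "break-even"
    if payout_cents > 0:
        return "loss disguised as win"  # lights and sounds fire, balance drops
    return "loss"


# Hypothetical session: (bet, payout) pairs in cents.
spins = [(100, 0), (100, 40), (100, 60), (100, 250), (100, 20)]

labels = [classify_spin(bet, payout) for bet, payout in spins]
celebrated = sum(1 for _, payout in spins if payout > 0)
net_cents = sum(payout - bet for bet, payout in spins)

print(labels)
print(celebrated, "of", len(spins), "spins paid something; net result:", net_cents)
```

Four of the five spins look like wins, and the session still ends 130 cents down. The count of celebrations and the balance are measuring different things.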

The design parallels

None of these interface choices arrived from nowhere. They have histories. They solved real problems.

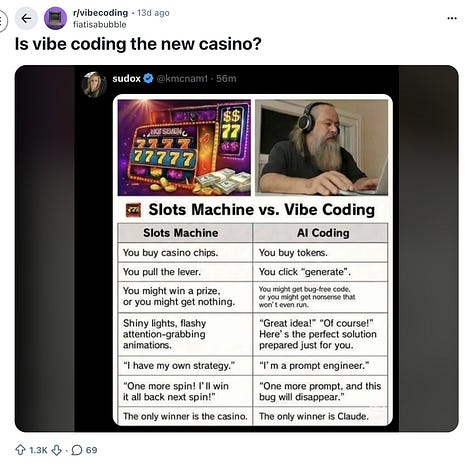

Streamed output, the token-by-token display that makes generation visible in real time, was a deliberate response to a trust problem. Answers that appeared instantaneously felt arbitrary, like a lookup rather than a reasoning process. Making the thinking visible built trust. It also solved for patience: perceived wait time drops when progress is visible, so thirty seconds watching text appear is experienced differently than thirty seconds waiting for a result. These are good design reasons. They worked.

“Many AI chatbots employ engaging visual presentations of responses. Rather than displaying an entire block of text at once, some AI chatbots stream their responses word-by-word. (...) Coupled with the rewarding responses AI chatbots can provide, these displays form strong visual cues that over time become reward-predicting cues, upon which dopamine neurons start to fire. Similar to the reinforcing visual graphics that accompany winning outcomes in slot machines, when users see the engaging visual presentation of an AI chatbot’s response, they may become driven to achieve rewarding responses from AI chatbots even more.”

- Karen Shen and Dongwook Yoon, “The Dark Addiction Patterns of Current AI Chatbot Interfaces”

The same choice is also the spinning reel. The mechanism that builds trust and the mechanism that holds the gaze in compulsive anticipation are one decision with two effects. Output variability has the same quality. In consumer AI products, variability is partly a tunable parameter. Higher variability produces more surprising, more engaging responses and a stronger compulsion loop. That tradeoff exists and is not part of any design conversation happening in public.
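
One way to picture variability as a dial is sampling temperature, a common setting in language-model sampling. The Python sketch below assumes that is the kind of parameter meant here; the article's sources do not name one, and the scores and function names are hypothetical.

```python
import math


def softmax_with_temperature(scores: list[float], temperature: float) -> list[float]:
    """Turn raw scores into sampling probabilities; higher temperature flattens them."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [s / temperature for s in scores]
    peak = max(scaled)  # subtract the max for numerical stability
    weights = [math.exp(s - peak) for s in scaled]
    total = sum(weights)
    return [w / total for w in weights]


def entropy_bits(probs: list[float]) -> float:
    """Shannon entropy: how unpredictable the next pick is."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


# Hypothetical scores for four candidate continuations.
scores = [4.0, 2.0, 1.0, 0.5]

for t in (0.2, 1.0, 2.0):
    probs = softmax_with_temperature(scores, t)
    print(t, [round(p, 3) for p in probs], round(entropy_bits(probs), 3))
```

At a low temperature the top candidate wins almost every time. Turn the dial up and the same scores produce a wider spread of outcomes, which is the surprise the paragraph above describes.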

The absence of stopping points has a simpler explanation: use cases are too varied. A general-purpose tool cannot know when your task is complete. So the input field stays open and the loop continues until the user decides to stop. Slot machine design deliberately removes natural endpoints as a core retention mechanic. In AI interfaces there is no deliberate removal; there is simply no design for completion at all. The effect is the same. Users iterate past sufficiency consistently, not because they choose to, but because nothing signals enough.

Jackpot

This matters more than it would if we were talking about experienced developers. We are not. The majority of people now using these tools to generate code have no prior relationship with software development to calibrate against. For them, this loop is not a deviation from a known practice. It is the practice. It is forming a baseline. A generation is learning to relate to code through an interface that structurally resembles a slot machine, and they have nothing to compare it to. This is not a value judgement, but an assessment.

Now, to keep us honest, these are inherited choices. They come from a design tradition in which engagement was the primary signal of value, because for a long time it was the only reliable one. If users came back, the product was working. The slot machine dynamic was not designed in maliciously. It was not designed out either.

AI tools introduce a different kind of possibility. For the first time, a product can potentially know whether the task it was used for was actually resolved, not just whether the user returned. The design tradition being applied to these tools was built before that was possible.

Success could be measured differently.