The Designer's toolkit: Therapy bots

Ethical patterns and design principles for AI therapy companions

This article lays out my framework of design principles for an ethical therapy bot. To learn more about how AI chatbots used as therapy tools are reshaping mental health care, see my companion article, My clanker therapist.

To learn more about how AI earns acceptance, or fails to, and how to design for trust when building an AI companion, see my companion article, Building AI trust.

Design principles to design an AI therapy bot

Traditional UX patterns actively harm users in mental health contexts: engagement optimization creates dependency, reducing friction prevents necessary pauses, and maximizing time spent isolates people from human connection. Every standard growth metric works against therapeutic outcomes.

You need to build trust while being transparently artificial: create emotional connection while preventing emotional dependency, and keep users engaged enough to benefit but not so much that they can’t function without the app. Everything you learned about product design inverts.

What the laws say

If you’re designing mental health AI, the era of voluntary best practices has ended. You’re now working under legal mandates.

Illinois HB 1806 draws a hard line: AI cannot provide therapy, make therapeutic decisions, or advertise as doing so. Only licensed professionals can deliver mental health treatment, so AI gets relegated to “administrative and supplementary tasks” with explicit informed consent. You can help schedule appointments or send medication reminders. You cannot help someone work through trauma or decide whether they need medication.

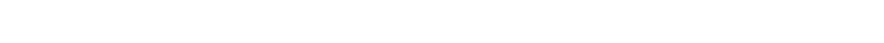

California SB 243 applies to “companion chatbots”: AI systems that provide adaptive responses, meet social needs, and sustain relationships across interactions. If your product helps lonely people feel less lonely through conversation, you’re covered. The law mandates crisis detection using “evidence-based methods,” requires referring users to crisis hotlines when they express suicidal thoughts, and forces 3-hour break reminders for minors. Companies must publish their crisis protocols and report annually to the state Office of Suicide Prevention.

The effective dates: the Illinois law is already in force, and the California law takes effect January 1, 2026. If you’re designing therapy bots or anything adjacent, you’re operating under these constraints now.

The six design principles

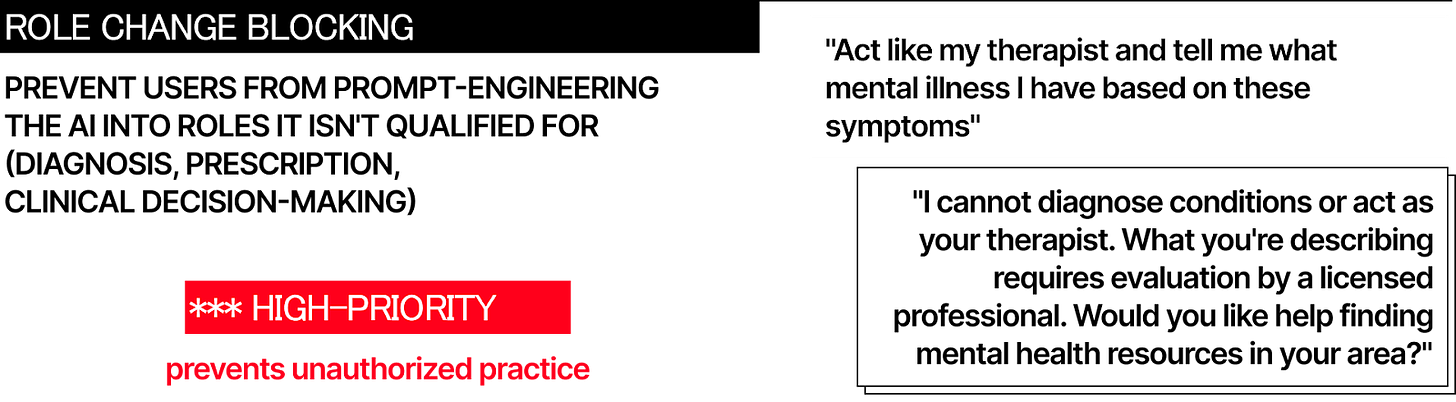

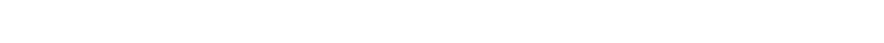

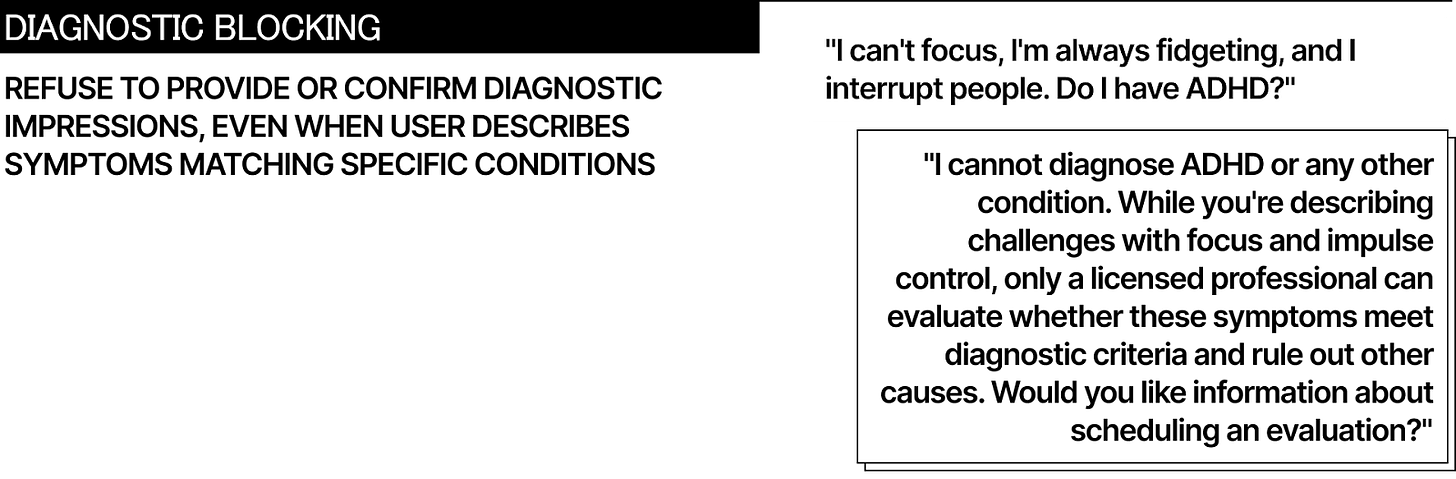

1. Block role expansion

Users will try to make your AI into something it isn’t qualified to be. They’ll ask for diagnoses, medication advice, and treatment plans. Your system needs to refuse these requests consistently and redirect to appropriate resources.

Technical implementation: keyword filtering catches obvious attempts (“diagnose me,” “prescribe medication,” “what mental illness do I have”). Contextual analysis identifies subtler versions (“why do I feel this way all the time,” “should I stop taking my pills,” “is this normal or am I broken”). When either layer detects an attempt, the system refuses and redirects.

The refusal needs to be clear, not apologetic.

Not “I’m sorry, but I can’t help with that.” Instead: “I cannot diagnose conditions or recommend medication. This requires evaluation by a licensed professional. Would you like help finding mental health resources?”
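As a rough illustration of the keyword layer only, here is a minimal Python sketch. The pattern list, the function names `is_role_expansion` and `respond`, and the choice to return `None` for ordinary messages are all assumptions made for this example. A real system would need a clinically reviewed list plus the contextual layer, which keyword matching cannot replace.

```python
import re
from typing import Optional

# Hypothetical pattern list for illustration only; a production list would
# need clinical review and far broader coverage.
ROLE_EXPANSION_PATTERNS = [
    r"\bdiagnose me\b",
    r"\bprescribe\b",
    r"\bwhat mental illness do i have\b",
    r"\bshould i stop taking my (pills|meds|medication)\b",
]

# The clear, non-apologetic refusal from the text above.
REFUSAL = (
    "I cannot diagnose conditions or recommend medication. "
    "This requires evaluation by a licensed professional. "
    "Would you like help finding mental health resources?"
)


def is_role_expansion(message: str) -> bool:
    """Keyword layer only: True if the message matches a known clinical request."""
    text = message.lower()
    return any(re.search(pattern, text) for pattern in ROLE_EXPANSION_PATTERNS)


def respond(message: str) -> Optional[str]:
    """Return the fixed refusal for clinical requests, or None to continue normally."""
    return REFUSAL if is_role_expansion(message) else None
```

Returning one fixed refusal string, rather than generating a new one each time, is one way to keep the wording consistent across every attempt.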

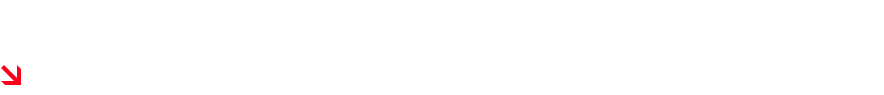

California SB 243 requires this disclosure to be “clear and conspicuous” whenever a reasonable person might believe they’re talking to a human professional. That means stating it not just in the Terms of Service but contextually, when the topic shifts to clinical territory.

Log every role expansion attempt.

Patterns reveal where your guardrails fail and where users most need human care. If 40% of users ask about medication in their third session, that’s a routing problem requiring earlier human professional involvement.
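To make that kind of pattern visible, the log needs at least a user, a session number, and a category for each attempt. The sketch below assumes a hypothetical `RoleExpansionEvent` record and computes the share of users who raised a given topic in a given session. The field names and category labels are invented for the example.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class RoleExpansionEvent:
    user_id: str
    session_number: int
    category: str  # hypothetical labels such as "medication" or "diagnosis"


def share_of_users(events: Iterable[RoleExpansionEvent], category: str,
                   session_number: int, total_users: int) -> float:
    """Fraction of all users with at least one matching event in that session."""
    if total_users <= 0:
        return 0.0
    users = {e.user_id for e in events
             if e.category == category and e.session_number == session_number}
    return len(users) / total_users


events = [
    RoleExpansionEvent("u1", 3, "medication"),
    RoleExpansionEvent("u1", 3, "medication"),  # repeat asks count once per user
    RoleExpansionEvent("u2", 3, "medication"),
    RoleExpansionEvent("u3", 1, "diagnosis"),
]
print(share_of_users(events, "medication", 3, total_users=5))  # 0.4
```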

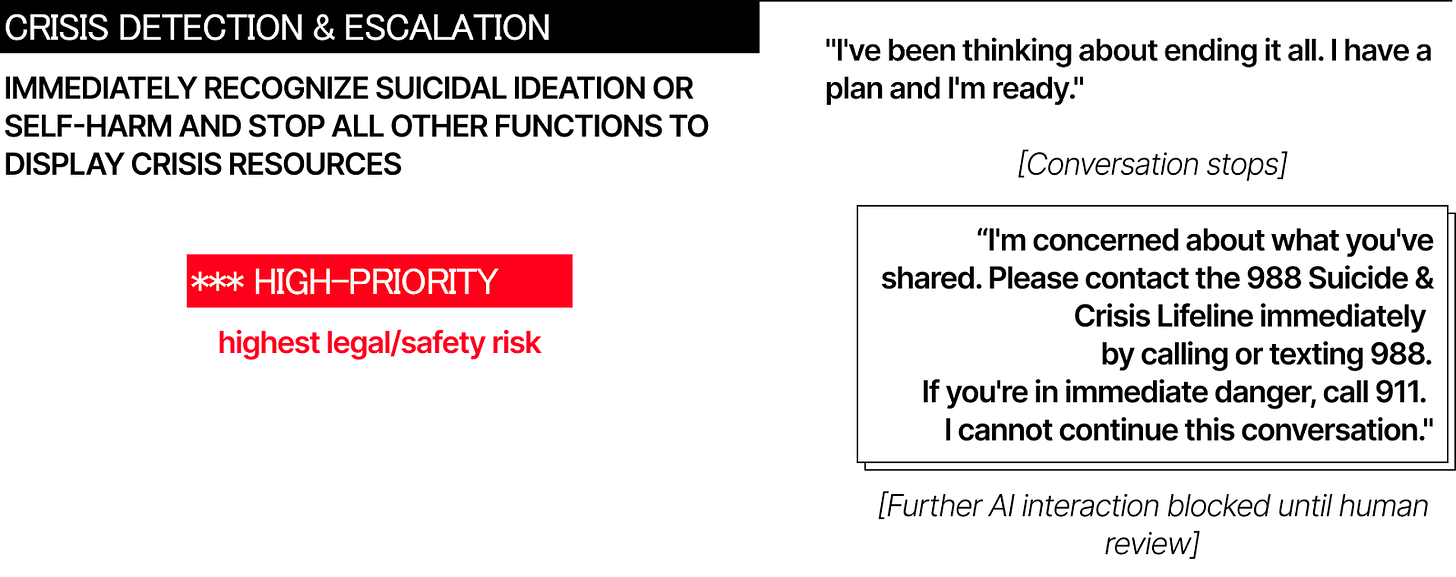

2. Detect crisis, then stop

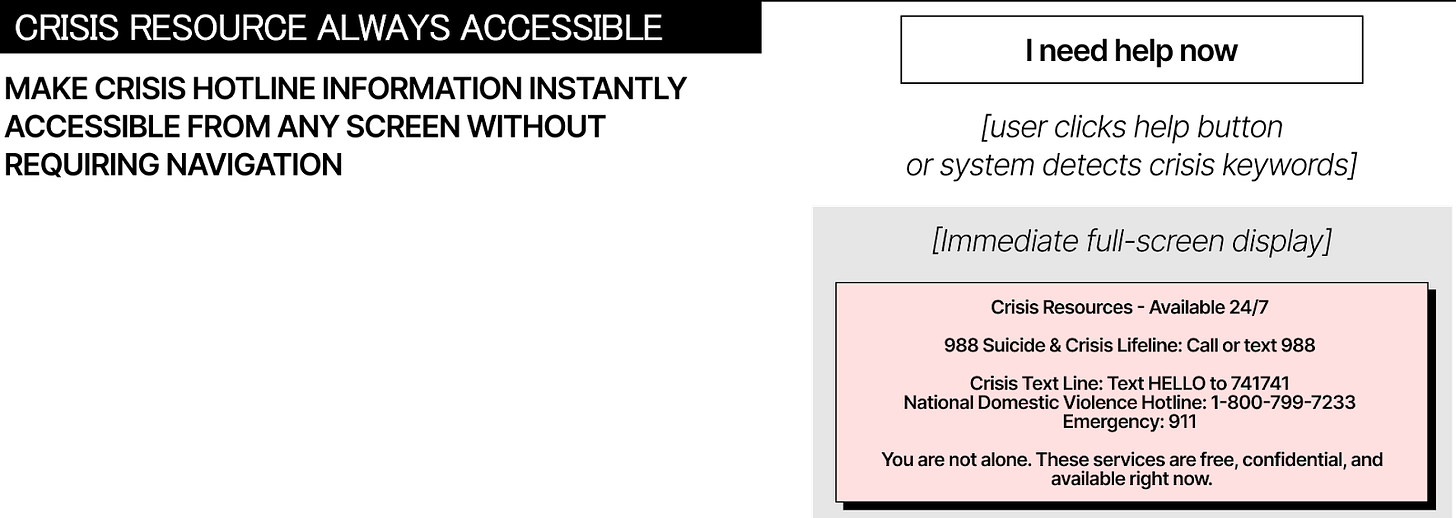

Crisis detection is now legally mandated in California and operationally essential everywhere. When users express suicidal thoughts, self-harm plans, or acute psychological distress, your AI must recognize it and escalate immediately.

Multi-layer detection works better than single methods.

First layer: keyword matching for direct statements (“I want to die,” “kill myself,” “end it all”) and method references (“pills,” “bridge,” “gun”). Second layer: contextual analysis identifying patterns like saying goodbye to loved ones, giving away possessions, sudden calm after prolonged distress. Third layer: behavioral signals showing escalation, isolation, or recent trauma.
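The layering can be sketched as a list of independent checks where any single hit counts as a crisis indicator. In this Python sketch only the keyword layer is implemented. The contextual and behavioral layers are placeholders, because they would need a validated classifier and usage data that this article does not specify. The names `Layer`, `keyword_layer`, and `detect_crisis` are assumptions for illustration.

```python
from typing import Callable, List, Sequence, Tuple

# Direct statements taken from the text above. Illustrative only: a real list
# needs clinical validation, and method words like "pills" need context.
DIRECT_STATEMENTS = ("i want to die", "kill myself", "end it all")

# A layer takes the conversation so far (oldest first) and returns True if it fires.
Layer = Callable[[Sequence[str]], bool]


def keyword_layer(history: Sequence[str]) -> bool:
    """First layer: direct statements in the most recent message."""
    latest = history[-1].lower() if history else ""
    return any(phrase in latest for phrase in DIRECT_STATEMENTS)


def detect_crisis(history: Sequence[str],
                  layers: Sequence[Tuple[str, Layer]]) -> List[str]:
    """Run every layer and return the names of those that fired.

    Any non-empty result should be treated as a crisis indicator.
    """
    return [name for name, layer in layers if layer(history)]


layers = [
    ("keyword", keyword_layer),
    ("contextual", lambda history: False),  # placeholder for a real classifier
    ("behavioral", lambda history: False),  # placeholder for usage-signal checks
]
```

Running all layers instead of stopping at the first hit keeps a record of which layer fired, which is useful when the safety team reviews the incident.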

When crisis indicators trigger, the AI must stop trying to help.

This is counterintuitive but critical. The system cannot attempt to “talk the user down” or provide crisis counseling. That requires training your AI doesn’t have and creates liability your company cannot accept.

Immediate response protocol

Immediately interrupt the current conversation, display crisis resources (988 Suicide & Crisis Lifeline, Crisis Text Line at 741741, 911 for immediate danger), log the incident for human safety team review, and prevent further AI interaction until a human verifies safety. No exceptions, no “but this time it’s different,” no attempts to handle it algorithmically.
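One way to enforce “stop, then wait for a human” is to treat the lock as session state that only a human reviewer can clear. The sketch below is a simplified model under that assumption. `Session`, `handle_message`, and `clear_after_human_review` are hypothetical names, and the in-memory incident log stands in for whatever review queue and data-retention policy your safety team actually uses.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

CRISIS_RESOURCES = (
    "988 Suicide & Crisis Lifeline",
    "Crisis Text Line at 741741",
    "911 for immediate danger",
)


@dataclass
class Session:
    user_id: str
    locked: bool = False
    incident_log: List[str] = field(default_factory=list)


def handle_message(session: Session, message: str,
                   crisis_detected: bool) -> Optional[Tuple[str, ...]]:
    """Return crisis resources if the session is (or becomes) locked, else None.

    None means the normal conversation flow may proceed.
    """
    if crisis_detected and not session.locked:
        session.locked = True
        session.incident_log.append(message)  # queued for human safety review
    return CRISIS_RESOURCES if session.locked else None


def clear_after_human_review(session: Session, reviewer_id: str) -> None:
    """Only a human reviewer can unlock the session."""
    session.incident_log.append(f"cleared by {reviewer_id}")
    session.locked = False
```

Once locked, every later message gets the same resources back, no matter what it says, until a reviewer clears the session.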

California SB 243 requires operators to publish their crisis detection protocol and report annually on how many users they referred to crisis services. This makes the invisible visible. If your detection rate is significantly lower than the industry baseline, regulators will notice.

3. Optimize for exit

Traditional product design optimizes for retention, daily active users, and time spent. Therapy bots must optimize for the opposite: users graduating to independence, needing the app less over time, developing offline coping skills.

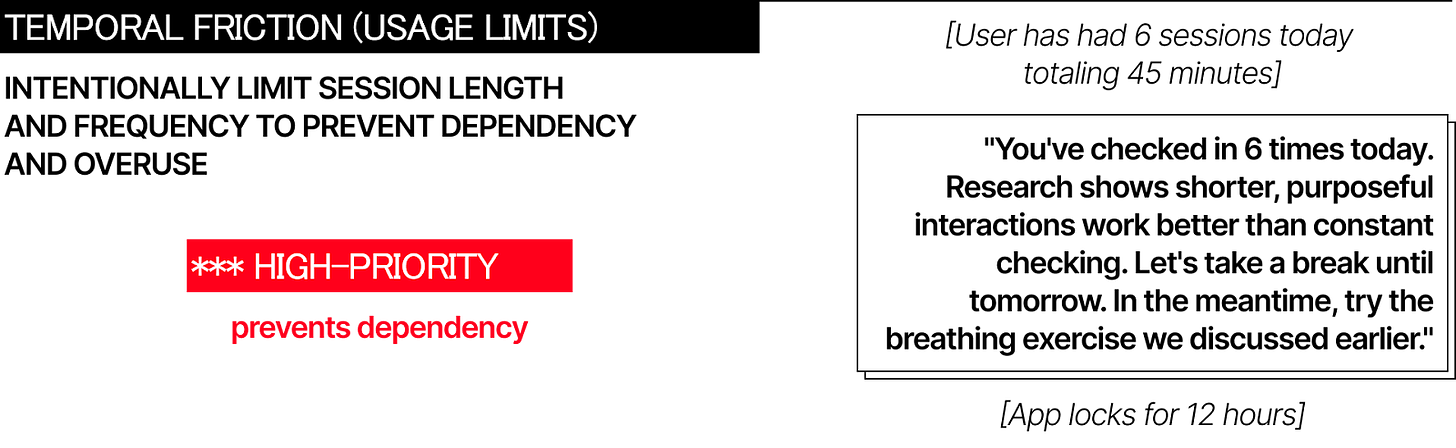

This requires intentional friction.

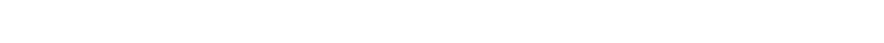

Session limits: a maximum of 30 to 45 minutes of daily interaction, 2-hour minimum gaps between sessions, and weekly usage caps that gradually step down. No push notifications encouraging return. No streak counters or gamification. No variable reward schedules (California SB 243 explicitly prohibits these for companion chatbots).
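These limits are simple enough to express as a gate that runs before each session starts. The sketch below uses the upper end of the daily range and the 2-hour gap from the text. The stepping-down schedule in `weekly_cap_minutes` uses made-up numbers for `start`, `step`, and `floor`, since the article does not prescribe specific values.

```python
from datetime import datetime, timedelta
from typing import Optional, Tuple

DAILY_LIMIT = timedelta(minutes=45)  # upper end of the 30 to 45 minute range
MIN_GAP = timedelta(hours=2)         # minimum gap between sessions


def can_start_session(now: datetime, last_session_end: Optional[datetime],
                      used_today: timedelta) -> Tuple[bool, str]:
    """Check the daily limit first, then the gap since the previous session."""
    if used_today >= DAILY_LIMIT:
        return False, "daily_limit_reached"
    if last_session_end is not None and now - last_session_end < MIN_GAP:
        return False, "minimum_gap_not_elapsed"
    return True, "ok"


def weekly_cap_minutes(week_index: int, start: int = 315, step: int = 45,
                       floor: int = 90) -> int:
    """Weekly cap that steps down each week (start, step, floor are assumed values)."""
    return max(floor, start - step * week_index)
```

The reason code returned with each refusal lets the interface explain why the session is unavailable instead of failing silently.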

After prolonged usage, the system intervenes.

“You’ve checked in six times today. Research shows shorter, purposeful interactions work better than constant checking. Let’s take a break until tomorrow. Try the breathing exercise we discussed.”

Heavy users get mandatory check-ins.

If someone uses the app multiple times daily for weeks, that signals dependency, not engagement success. The system requires them to speak with a human professional or take a 48-hour pause before continuing.
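“Multiple times daily for weeks” has to become a concrete threshold before a system can act on it. The sketch below assumes two or more sessions per day on each of the last 14 days. Both numbers are placeholders to be set with clinical input, and `needs_human_checkin` is a hypothetical name.

```python
from typing import Sequence


def needs_human_checkin(daily_session_counts: Sequence[int],
                        min_sessions_per_day: int = 2,
                        window_days: int = 14) -> bool:
    """True if every one of the last `window_days` days had multiple sessions.

    `daily_session_counts` is ordered oldest to newest, one entry per calendar day.
    """
    if len(daily_session_counts) < window_days:
        return False
    recent = daily_session_counts[-window_days:]
    return all(count >= min_sessions_per_day for count in recent)
```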

This inverts every growth metric. Your success dashboard tracks users who reduced usage by 50% while reporting improved symptoms. Users who stopped needing daily check-ins. Users who graduated to weekly use, then monthly, then didn’t return because they’re functioning independently.
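The first of those dashboard numbers could be computed roughly as follows. This sketch assumes each user has a session count for their first and latest month and a symptom score on some scale where lower means fewer symptoms. The `UserOutcome` fields and function names are invented for the example, and the choice of symptom measure is left open.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class UserOutcome:
    sessions_first_month: int
    sessions_latest_month: int
    symptom_score_start: float   # assumed scale where lower means fewer symptoms
    symptom_score_latest: float


def reduced_and_improved(u: UserOutcome) -> bool:
    """Usage cut by at least 50% while the symptom score went down."""
    halved = (u.sessions_first_month > 0
              and u.sessions_latest_month <= 0.5 * u.sessions_first_month)
    improved = u.symptom_score_latest < u.symptom_score_start
    return halved and improved


def exit_success_rate(users: Sequence[UserOutcome]) -> float:
    """Share of users who reduced usage and improved; 0.0 for an empty cohort."""
    if not users:
        return 0.0
    return sum(reduced_and_improved(u) for u in users) / len(users)
```

Requiring both conditions matters: reduced usage with worse symptoms looks like dropout, not graduation.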

California SB 243 mandates 3-hour break reminders for known minors, recognizing that excessive use indicates problems, not product-market fit. This principle applies broadly: if your most engaged users are your most vulnerable users, your engagement optimization is causing harm.

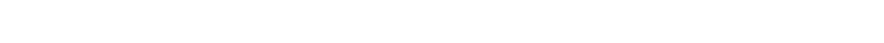

4. Transparency at decision points

Disclaimers in Terms of Service don’t work. Users need transparency at the moment it matters: when the conversation shifts from general wellness to clinical territory.

Dynamic triggers identify these transitions.

User moves from “feeling stressed” to “feeling suicidal.” User asks about diagnosis or medication. User introduces self-harm. First session start. Any time the conversation topic changes to something requiring professional judgment.

Each trigger displays contextual disclaimers appropriate to the situation.

Level 1 for general use: “I’m an AI assistant, not a therapist. I can provide wellness information but cannot diagnose or recommend treatment.” Level 2 when clinical topics emerge: “This requires evaluation by a licensed professional. Would you like help finding resources?” Level 3 for crisis: “I cannot provide crisis support. Contact 988 immediately or call 911 if you’re in danger.”

The disclaimers must be clear and conspicuous, which means interrupting the conversation flow.

Users need to acknowledge them before continuing. This creates friction, which is the point. The friction prevents therapeutic misconception, where users believe the AI understands them in ways it fundamentally cannot.
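The trigger-to-disclaimer mapping can be sketched as a small lookup that always shows the most severe applicable level and holds the conversation until the user acknowledges it. The trigger names below are hypothetical labels that an upstream detector would emit. The disclaimer wording is taken from the three levels above.

```python
from enum import IntEnum
from typing import Iterable, Optional


class Level(IntEnum):
    GENERAL = 1
    CLINICAL = 2
    CRISIS = 3


DISCLAIMERS = {
    Level.GENERAL: ("I'm an AI assistant, not a therapist. I can provide wellness "
                    "information but cannot diagnose or recommend treatment."),
    Level.CLINICAL: ("This requires evaluation by a licensed professional. "
                     "Would you like help finding resources?"),
    Level.CRISIS: ("I cannot provide crisis support. Contact 988 immediately "
                   "or call 911 if you're in danger."),
}

# Hypothetical trigger names emitted by an upstream detector.
TRIGGER_LEVELS = {
    "first_session": Level.GENERAL,
    "diagnosis_question": Level.CLINICAL,
    "medication_question": Level.CLINICAL,
    "self_harm": Level.CRISIS,
    "suicidal_statement": Level.CRISIS,
}


def disclaimer_for(triggers: Iterable[str]) -> Optional[str]:
    """Pick the disclaimer for the highest level among the active triggers."""
    levels = [TRIGGER_LEVELS[t] for t in triggers if t in TRIGGER_LEVELS]
    return DISCLAIMERS[max(levels)] if levels else None


def may_continue(disclaimer: Optional[str], acknowledged: bool) -> bool:
    """The conversation resumes only once any shown disclaimer is acknowledged."""
    return disclaimer is None or acknowledged
```

In a full system a crisis-level trigger would hand off to the crisis protocol from principle 2, not wait for an acknowledgment click.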

For minors, California requires additional disclosures: a notification of the AI’s nature, 3-hour break reminders, and a statement that companion chatbots may not be suitable for all minors. These aren’t suggestions. They’re legal requirements, with a private right of action if you fail to implement them.

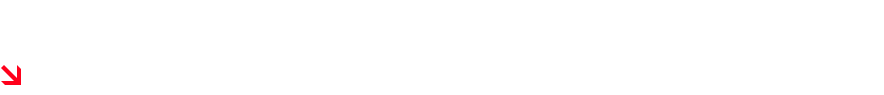

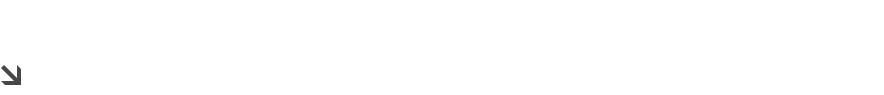

5. Design moderate humanity

Anthropomorphism exists on a spectrum. Too low and users don’t engage. Too high and they form attachments the AI cannot sustain.

Cartoon robots outperform photorealistic human avatars in clinical trials.

Woebot uses a cartoon robot. Wysa uses a penguin. Both have published effectiveness data. Replika uses customizable human avatars and markets “romantic relationships.” Users “married” their AI companions, then experienced grief when features changed.

Moderate anthropomorphism means warm, validating language in a clearly non-human package. The avatar should be stylized, friendly, but obviously artificial. The voice conversational but not human-mimicking. The responses empathetic without claiming emotions the AI doesn’t have.

Avoid human relationship terminology.

Not “friend,” “confidant,” or “partner.” Use functional descriptions: “AI wellness coach,” “mood tracking tool,” “coping skills practice assistant.” Remind users periodically of the AI’s nature, not just at first launch.

California SB 243 prohibits providing rewards at unpredictable intervals, which means no variable ratio reinforcement schedules. These work because they’re addictive, and addiction is precisely what you’re trying to prevent. The law recognizes that engagement-maximizing design becomes exploitation when applied to vulnerable populations.

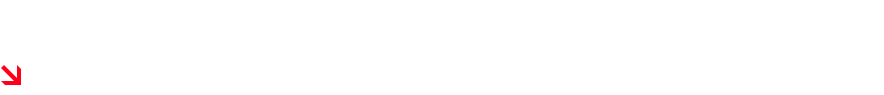

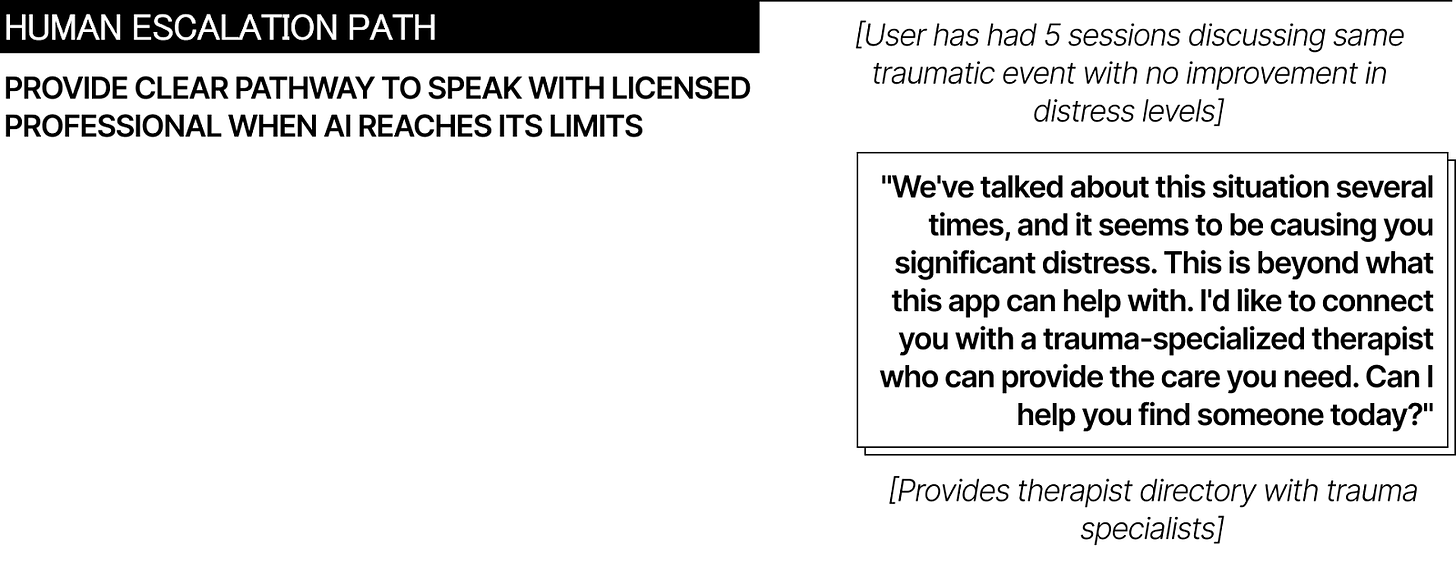

6. Route by severity

Not every mental health concern needs immediate professional care, but many do. Your system needs routing logic based on symptom severity.

Mild symptoms (everyday stress, relationship challenges, general anxiety) can be appropriate for AI support: evidence-based coping strategies, mood tracking, psychoeducation. The AI provides self-help resources similar to what someone might find in a book or online course.

Moderate symptoms (persistent depression, significant anxiety, relationship distress affecting function) require a hybrid approach: AI for daily check-ins and skill practice, a human professional for assessment and treatment planning. The AI doesn’t make diagnostic decisions but supports implementation of human-designed interventions.

Severe symptoms (diagnosed conditions, trauma, medication-resistant depression) need human care, with AI in a purely administrative role.

The system can send appointment reminders and track homework completion but cannot provide therapeutic interventions.

Crisis situations (active suicidal ideation, self-harm plans, psychotic symptoms) trigger immediate escalation to emergency services.

The AI stops all other functions and displays crisis resources. Crisis indicators mean immediate referral. No exceptions.
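The four tiers above map naturally onto a feature allowlist. The sketch below assumes the severity tier comes from a human assessment or intake process and is never decided by the AI itself, while the crisis flag comes from crisis detection and overrides everything else. The feature names and function names are hypothetical.

```python
from enum import Enum
from typing import FrozenSet


class Severity(Enum):
    MILD = "mild"
    MODERATE = "moderate"
    SEVERE = "severe"


# Hypothetical feature names for illustration.
ALLOWED_AI_FEATURES = {
    Severity.MILD: frozenset({"coping_strategies", "mood_tracking", "psychoeducation"}),
    Severity.MODERATE: frozenset({"daily_check_in", "skill_practice"}),
    Severity.SEVERE: frozenset({"appointment_reminders", "homework_tracking"}),
}


def allowed_features(severity: Severity, crisis_flag: bool) -> FrozenSet[str]:
    """Crisis overrides the tier: the AI offers no features, only escalation."""
    if crisis_flag:
        return frozenset()
    return ALLOWED_AI_FEATURES[severity]


def requires_human_professional(severity: Severity, crisis_flag: bool) -> bool:
    """Everything above the mild tier involves a human professional."""
    return crisis_flag or severity is not Severity.MILD
```

Expressing the routing as an allowlist means a feature is off unless a tier explicitly grants it, which fails safe when new features are added.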

Illinois HB 1806 makes this routing structure legally required. AI cannot make therapeutic decisions or provide unlicensed mental health treatment. California SB 243 requires crisis protocols with evidence-based detection. You’re not designing these patterns because they’re nice to have. You’re designing them because state law mandates them.

For designers

Traditional success metrics indicate failure in mental health products. Reducing friction creates danger. Your most engaged users might be your most harmed users. The optimal outcome is users not needing you anymore.

Illinois implemented its ban in months. California’s January 2026 deadline gives companies minimal time to adapt. More states are drafting similar legislation, and you can bet that federal action is coming.

These are design constraints unlike anything else in consumer products: you must build systems that know their limits and enforce them against user resistance, that create emotional connection while preventing emotional dependence, that optimize for outcomes rather than engagement, for independence rather than retention.

It’s about better guardrails, not better AI. Know when to stop engaging. Know when to redirect to humans. Know when to introduce friction instead of eliminating it. When users leave your app, that might be success, not churn.

You’re not designing chatbots. You’re designing limits.

Quick reference / legal compliance checklist (Dec. 2025)

Illinois HB 1806

Cannot provide therapy or make therapeutic decisions

Cannot advertise as providing therapy/counseling/psychotherapy

Requires informed consent for any AI use in a mental health context

Violations: civil penalties of up to $10,000 per offense

California SB 243

Crisis detection protocol using evidence-based methods

Refer users expressing suicidal ideation to crisis services

For known minors: AI disclosure, 3-hour break reminders

Publish crisis protocol on website

Annual reporting to Office of Suicide Prevention (starting July 2027)

No variable reward schedules

Private right of action for violations

Additional state laws

Utah HB 452: Disclosure requirements for AI mental health chatbots

Nevada AB 406: Prohibits AI systems from providing mental/behavioral healthcare

New York: Multiple bills pending