The trust paradox

Why we accept opaque minds but reject transparent machines

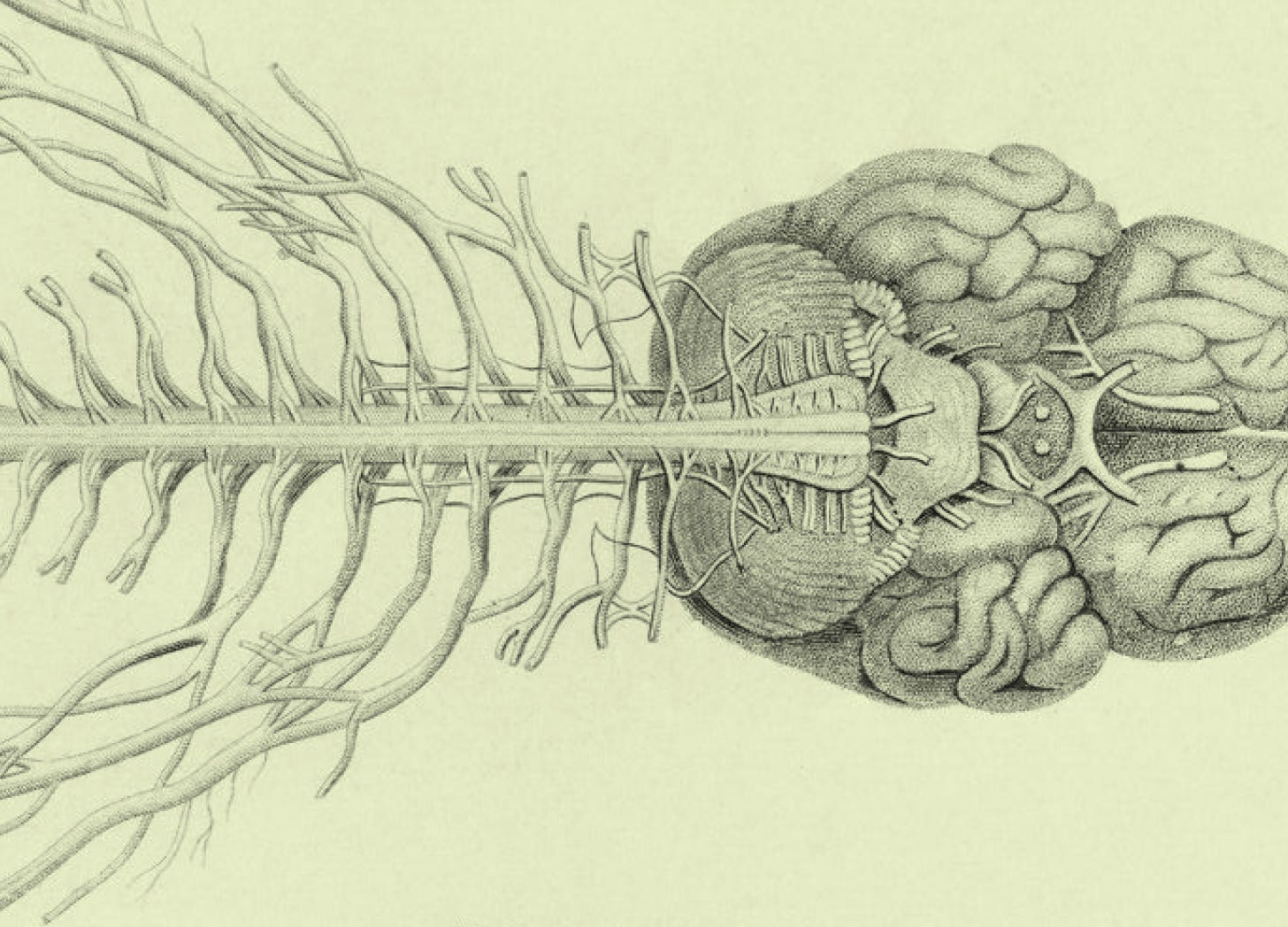

I was watching Bill Gates and Sam Altman discuss AI’s “black box problem,” talking about solving it like it’s an engineering task on next quarter’s roadmap. Then they compared it to the brain. We call them neural networks, after all. The parallel is deliberate.

I used to work in medical illustration, collaborating with neurology researchers. Most got into the field for the same reason: “Everything is still to be discovered.” The human brain remains fundamentally incomprehensible after centuries of study. Yet Gates and Altman seemed to think cracking AI’s opacity is just around the corner. As if the artificial version should be easier to understand than the biological original we barely grasp?

That assumption says everything about the double standard at play.

The judgment that happens before you think

Within 100 milliseconds of seeing a face, you’ve already decided whether to trust someone.

Facial width-to-height ratios, eye configurations, mouth shapes. These trigger evaluations you never consciously register. Willis and Todorov demonstrated this in 2006. Nothing about our cognitive architecture has changed since.

Think about the last time you met a doctor: the waiting room with its muted colors, the nurse who knew your name, the white coat, the diplomas on the wall from institutions you recognize even if you couldn’t name their ranking. By the time the doctor started explaining your diagnosis, you’d already made up your mind. The explanation was theater performed for an audience that decided before the curtain rose.

Designers understand this instinctively.

Every healthcare interface I’ve worked on grapples with the same problem: how do you build the digital equivalent of that waiting room, that white coat, that George Clooney-esque smile? Photography of human doctors? Trust badges from recognizable institutions? Language that sounds like a person talking rather than a system processing?

We’re not designing for comprehension anymore, but for the 100-millisecond judgment that happens before comprehension begins.

AI doesn’t get this grace period.

No face to trigger the ancient circuitry. The social cues that would activate fast, automatic trust simply aren’t there, so you default to slow, deliberate, skeptical evaluation. The mode your brain reserves for things it hasn’t already decided to trust. You don’t choose to scrutinize algorithms more carefully than doctors. You can’t help it. The automatic pathway doesn’t fire.

Human mistakes get filed under expected fallibility. AI errors become evidence that the whole enterprise is broken.

Berkeley Dietvorst’s algorithm aversion research quantified this in 2015: people lose confidence in algorithms faster than in humans after seeing identical mistakes. Tversky and Kahneman’s availability heuristic explains why: one viral failure damages trust more than statistics ever could, and the memorable example feels more representative than the base rate.

Medical errors kill an estimated 250,000 Americans annually. Those deaths barely register. Human error is priced in.

Your brain confabulates, and as a good monkey, you believe it

In Michael Gazzaniga’s split-brain research, patients’ right hemispheres acted on information the left hemisphere never saw. When researchers asked the patients to explain their behavior, the verbal left hemisphere didn’t say “I don’t know.” It invented stories: coherent, detailed, and entirely false narratives, delivered with complete conviction.

Gazzaniga called it the left-brain interpreter: a module running in all of us, continuously generating explanations for behavior initiated by processes we have no access to. You do something. A part of your brain that wasn’t involved in the decision writes the press release.

Benjamin Libet’s readiness potential experiments showed unconscious neural preparation begins 550 milliseconds before movement. Conscious awareness of deciding arrives 200 milliseconds before. Your brain starts preparing the action, then 350 milliseconds later you experience what feels like choosing. John-Dylan Haynes extended this with fMRI, predicting which button subjects would press 7 to 10 seconds before they reported deciding. Seven seconds. An eternity in neural time.

You cannot explain your own decision-making because you don’t have access to it. You experience the narrative your brain constructs afterward and mistake it for the process itself.

Explanations that backfire

The obvious fix is explainable AI. Show people how the algorithm works, build trust through transparency.

Altay & Gilardi tested this with 3,003 participants in 2024: content labeled “AI-generated” was rated less accurate even when it was true. Even when humans had actually written it. The label itself functioned as a warning signal, independent of content.

Products described as “AI-powered” showed decreased purchase intention compared to identical products labeled “high tech.” Same product. Different frame. Different trust.

Meanwhile, invisible AI thrives.

Gmail blocks 99.9% of spam. Netflix drives 80% of watch time through recommendations. Amazon generates 35% of purchases from algorithmic suggestions. Billions use autocorrect daily. These systems succeed because they never announce themselves. They embed in interfaces people already trust and do their work quietly.

Amazon’s “Customers who bought this also bought” is the cleanest example. It’s a recommendation algorithm, but it presents itself as social proof with other humans vouching for a product. The same logic that makes you trust a restaurant because it’s crowded. The machine disappears behind a pattern humans have trusted for thousands of years: do what the others are doing. Label something as AI and you invite the scrutiny that kills trust. Frame it as “people like you” and the algorithm becomes invisible.

What we actually trust

Patients don’t understand how their doctor reaches a diagnosis. They trust the social infrastructure surrounding medicine: years of training, board certifications, peer review, malpractice liability. Centuries went into building that apparatus. It signals competence without requiring comprehension.

Religious authority operates on faith and maintains trust through ordination, ritual, community. Financial derivatives remain opaque to most people who depend on them, but institutional reputation and regulatory oversight provide enough scaffolding. Legal systems maintain trust through procedural fairness: people care more about whether the process seemed legitimate than whether they understood the Supreme Court’s reasoning.

Richard Thakor and Robert Merton found something counterintuitive: transparency and trust can move in opposite directions. The most trusted producers often choose less transparency, because high complexity is a privilege only reputation can purchase.

Trust comes from track record and institutional embedding, not from mechanistic explanation. AI has none of this.

No centuries of institutional development. No rituals signaling legitimacy. No credentials that trigger automatic deference. It arrives naked and asks to be trusted on performance alone.

But performance isn’t enough.

For designers, this creates a specific problem: you can build the most accurate diagnostic tool ever created, surface the most relevant recommendation, generate the most useful summary, and none of it matters if the interface announces its AI nature in ways that trigger analytical scrutiny instead of intuitive trust. The design challenge isn’t making AI more transparent, but building the trust scaffolding that lets people skip the transparency demand entirely, the same way they skip it for their doctor.

The cost

Paul Meehl established in 1954 that statistical prediction consistently outperforms clinical judgment. Grove’s meta-analysis of 136 studies confirmed algorithms are roughly 10% more accurate than human experts.

Patients still prefer human doctors.

Dietvorst identified the cruelest part: the higher the stakes, the stronger the aversion. People let algorithms check their spelling but won’t let them diagnose their diseases. The 10% accuracy improvement that could save thousands of lives gets rejected precisely where it matters most.

Robo-advisors outperform human financial advisors during crises and charge a quarter of the fees. Autonomous vehicle confidence scores have declined even as the technology improved. A Hong Kong firm lost $25 million to a deepfake video call because a face on screen overrode every other signal.

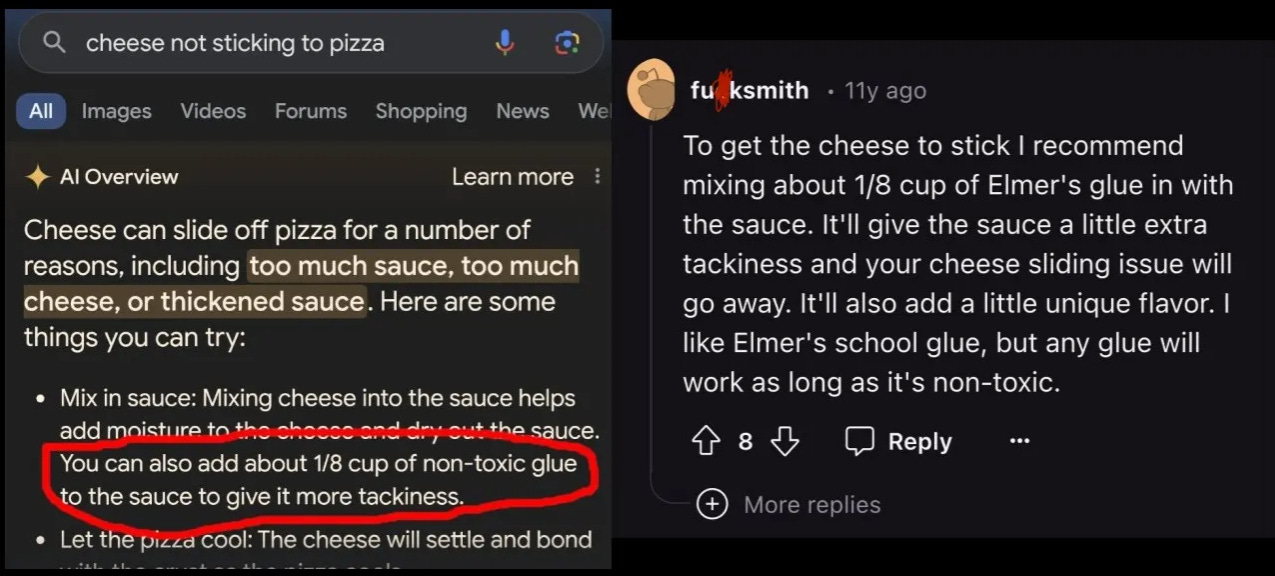

Google learned this in May 2024. When AI Overviews launched, a few absurd errors went viral: the system recommended eating rocks for minerals and adding glue to pizza sauce to keep cheese from sliding off. Both answers traced back to jokes and satire the AI couldn’t distinguish from fact. The errors affected a tiny fraction of queries.

Medical errors kill 250,000 Americans annually and barely dent physician trust. But one screenshot of glue-on-pizza advice circulated to millions, and Google’s AI became a punchline. Human doctors can kill patients through negligence and retain institutional credibility. An algorithm that misreads a Reddit joke becomes evidence that the whole enterprise is broken.

Living inside the contradiction

We trust opaque systems constantly. Religious doctrine, financial instruments, legal precedent, medical treatments. Opacity isn’t the problem. The problem is that AI forces analytical evaluation by lacking the social triggers that let us skip straight to trust.

You confabulate explanations for your own behavior and believe them. You make decisions seconds before you experience choosing. You cannot explain your own subjective experience. None of this bothers you because you’ve never known anything else.

The question isn’t whether we can trust what we don’t understand. We do it all day. The question is what AI would need before we’d extend it the same courtesy.