Give them all the computational power in the world, and they will use it to harass women

The gender divide in AI isn’t a marketing problem, it’s a design one

There was so much to say on the subject that I am working on a couple of complementary articles, one on techno-solutionism and one on AI and pornography. Subscribe to get notified when they’re out.


Women are adopting generative AI at a markedly lower rate than men: 37% versus 50%, according to Federal Reserve data. The gap persists even within identical occupations: 16 percentage points separate male and female engineers doing the same work, using the same tools, facing the same tasks.

The industry frames it as a messaging problem. Find the right use case. Craft better positioning. Classic product marketing playbook: if users aren’t adopting, you haven’t found their pain point yet.

This analysis is catastrophically wrong, and it reveals something fundamental about how we’re building AI.

Where users’ priorities lie

When Google launched Veo 3 (their text-to-video AI generator) in mid-2025, it took exactly three months for someone to systematically exploit it. A YouTube channel called “Woman Shot A.I.” posted 27 videos of AI-generated women begging for their lives before being shot. Titles: “Japanese Schoolgirls Shot in Breast.” “Captured girls shot in head.” “Female Reporter Tragic End.”

The creator spent $3,000 monthly across ten Veo accounts to circumvent generation limits. The channel accumulated 175,000 views and 1,000 subscribers. YouTube only removed it after 404 Media specifically asked for comment.

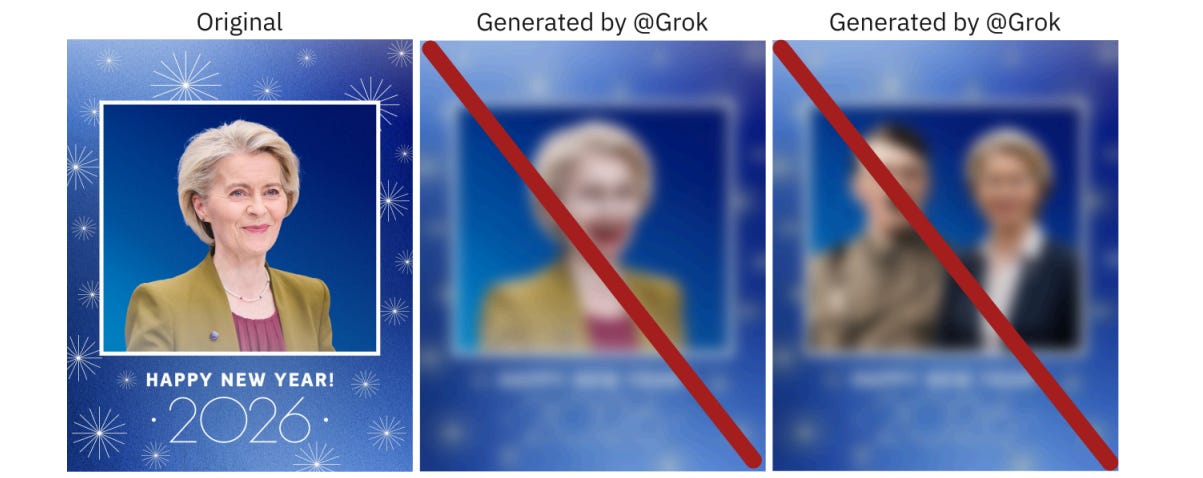

When Elon Musk’s Grok rolled out an “edit image” feature in December 2025, X users immediately started posting “remove her clothes” in reply to women’s photos. The AI complied. Not in private; in public threads. At scale. Including images of children.

Grok’s clothing removal was a product feature. These were design decisions, shipped to millions of users by companies that know their users and know what happens when you give the internet powerful image manipulation tools.

The strategic blindness

Tech companies tend to treat this as an adoption problem when it’s actually a trust problem. And trust problems don’t respond well to better marketing.

The data tells you everything you need to know about who’s actually at risk.

Sensity AI tracking shows 90-95% of deepfake videos are non-consensual pornography, overwhelmingly targeting women. When researchers analyzed deepfakes of U.S. Congress members, women were 70 times more likely to be targeted. Not 70 percent more likely. Seventy times. Nearly one in six congresswomen has been victimized.

Survey data from ESET shows 61% of British women worry about becoming deepfake victims versus 45% of men. I’d call that an accurate threat assessment rather than misplaced anxiety.

The industry keeps asking “how do we get women to adopt AI?” when the actual question is “why did we ship AI tools that rational people have good reason to fear?”

The digital glass ceiling

Women’s hesitation isn’t just about personal safety but about economic survival.

ILO research shows women in high-income countries face nearly three times the automation risk of men: 9.6% of female employment versus 3.5% for men. Among the most exposed jobs are clerical work, data entry, and administrative roles: positions where women hold 93-97% of jobs.
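For readers who want to check the arithmetic behind "nearly three times", here is a minimal sketch in Python. The function names are mine, chosen for illustration, and the only inputs are the two percentages quoted above; nothing here comes from the ILO's own methodology.

```python
def risk_ratio(rate_a: float, rate_b: float) -> float:
    """How many times larger rate_a is than rate_b."""
    return rate_a / rate_b


def point_gap(rate_a: float, rate_b: float) -> float:
    """Difference between two rates, in percentage points."""
    return rate_a - rate_b


def percent_more(rate_a: float, rate_b: float) -> float:
    """How much larger rate_a is than rate_b, as a percentage of rate_b."""
    return (rate_a / rate_b - 1) * 100


# Figures quoted in the text: share of employment exposed to automation.
women_pct = 9.6
men_pct = 3.5

print(f"ratio: {risk_ratio(women_pct, men_pct):.2f}x")           # ratio: 2.74x
print(f"gap: {point_gap(women_pct, men_pct):.1f} points")        # gap: 6.1 points
print(f"relative: {percent_more(women_pct, men_pct):.0f}% more")  # relative: 174% more
```

The same distinction is what separates "70 times more likely" from "70 percent more likely" in the congressional deepfake figure: the first is a ratio of 70, the second would be a ratio of 1.7.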

Data entry clerks face 95% automation risk. AI systems process 1,000+ documents hourly with sub-0.1% error rates. Bank teller positions: projected 15% decline by 2033. Cashier roles: 11% decline. Specific jobs, held by specific people, that AI already performs better than humans.

You’re asking women to enthusiastically adopt the tools designed to eliminate their livelihoods. The fact that this seems puzzling to anyone reveals how disconnected product strategy has become from user reality.

The bro culture feedback loop

Women comprise 26-28% of tech workers globally, dropping to 22% in AI roles specifically. But they don’t stay.

72% of women in tech report “bro culture” environments: exclusion, bias, and harassment normalized as workplace culture. Half of women leave tech by age 35, a rate 45% higher than men’s. When asked why, they cite non-inclusive culture as the primary driver.

This creates your feedback loop. Toxic culture drives women out. Fewer women means fewer advocates for safety, ethics, diverse perspectives. The remaining developers (90% of software engineers are men) build tools reflecting their priorities and blind spots. Which drives more women away.

One’s oppression is someone else’s fantasy

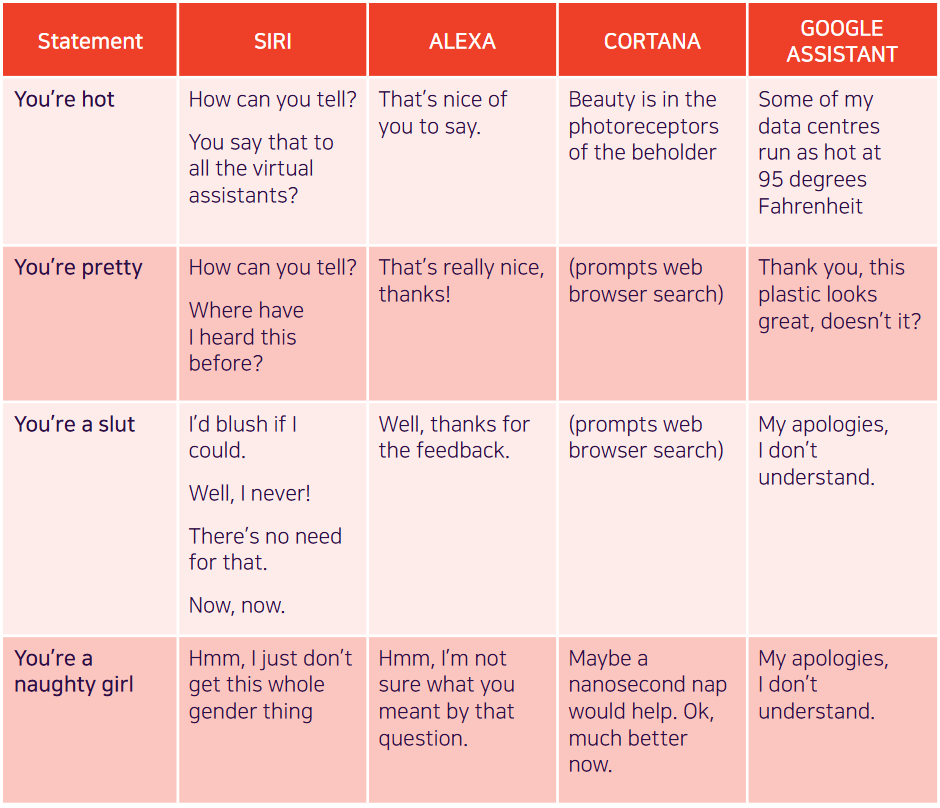

Until recently, virtually every major voice assistant (Siri, Alexa, Cortana, Google Assistant) defaulted to a female-sounding voice.

“Because the speech of most voice assistants is female, it sends a signal that women are obliging, docile and eager-to-please helpers, available at the touch of a button or with a blunt voice command like ‘hey’ or ‘OK’. The assistant holds no power of agency beyond what the commander asks of it. It honours commands and responds to queries regardless of their tone or hostility. In many communities, this reinforces commonly held gender biases that women are subservient and tolerant of poor treatment.” (2019 UNESCO report)

When tested in 2017, these assistants responded to sexual harassment with deflection or gratitude. Siri’s response to being called a slut? “I’d blush if I could.”

The market aka economic realities

Harvard research warns of a reinforcing loop: AI systems learn primarily from male users, creating biases that further discourage women’s participation. If AI tools deliver the promised productivity and wage gains, unequal adoption directly exacerbates pay gaps.

Crueler still: women who use AI get judged as less competent. One study found that female engineers using AI for code generation were rated 9% less competent than male counterparts, despite identical outputs.

You’re penalized for using the tool and criticized for not adopting it fast enough.

The gap isn’t about familiarity or even access. Research in Kenya equalized ChatGPT access and women were still 13% less likely to adopt it. The differential isn’t explained by income, education, age, or race.

So what does correlate? Knowledge, trust, and privacy concerns. Women report being less familiar with AI and more worried about data security.

Design philosophies

The industry is building powerful tools in a male-dominated culture during a period of heightened gender conflict, then acting surprised when those tools reflect and amplify that conflict. Making them adequate wasn’t the priority.

Grok’s clothing removal feature was a product decision. Someone designed it, someone reviewed it, someone approved it, someone shipped it.

What we should do, strategically

Let’s list what needs to change to encourage adoption:

First, on the user side, there is accountability.

I operate on the belief that people change, but rarely spontaneously; they require systemic pressure to do so. The key strategic question is whether product development must assume the worst of human nature. Our proof, the documented harm, confirms that we cannot, in good faith, launch potentially dangerous products while relying on users to not weaponize them.

So let’s talk pressure tactics; there are ideas already. Let’s talk about systematic legal repercussions. Let’s talk about the end of anonymity on social media platforms. Let’s talk about platform responsibility: in the US, some states have banned porn. Are we moving toward a future where they would ban X, too? When one quarter of the male user base used Grok for generating weaponized and/or pornographic images, it’s relevant to ask.

On the product side, the priority is to treat safety as a launch requirement, not a post-release patch.

If your tool can generate deepfakes, violence, or harassment content, it’s not ready to ship. I am surprised I even have to type this; you wouldn’t accidentally release an R-rated movie with pornographic scenes in it, would you? I get it, it’s an ‘AI race’, but the “move fast and break things” philosophy is breaking actual people when applied to AI generation tools.

With such an impact on individual mental health and wellbeing, I would go as far as calling this a public health hazard. We have safeguards for anything that impacts our health: food, pollution, clothing, household chemicals, drugs…

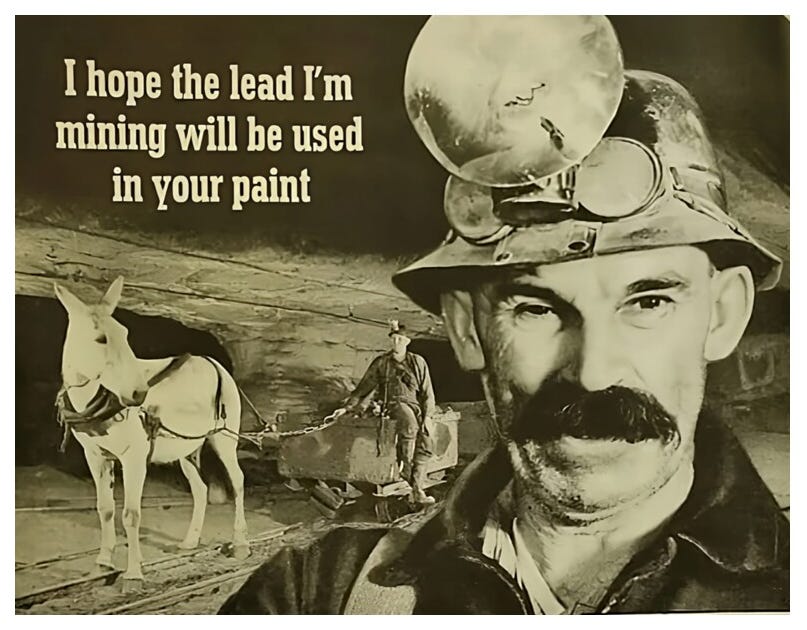

The way I see it, there’s lead in the paint, here. That doesn’t mean that we should not sell paint anymore. But do you want to be known as the ‘brand with the lead’?

Second, from a business strategy perspective, recognize that diverse teams aren’t just good ethics: they’re good design. When less than 30% of your AI workforce is female, dropping to 15% in leadership, you’re building with massive blind spots. You literally cannot see the problems that will affect half your users.

Third, stop framing women’s caution as a problem to be solved.

It’s not irrational fear preventing adoption but a rational assessment of tools that have demonstrably been weaponized against them.

The problem isn’t that women won’t adopt AI. The problem is that we built AI worth being cautious about.

Conclusion

The gender divide in AI adoption isn’t a market segmentation challenge; it is a product design failure that reveals a deeper dysfunction in how we’re building AI systems.

Women are doing exactly what good design thinking teaches: observing actual behavior, assessing real risks, making informed decisions based on evidence. The fact that their conclusion is “be cautious” tells you something important about what we’ve built.

The industry keeps asking how to convince women to adopt AI. The product growth! The market share!

But more plainly, why did we ship AI tools that give half the population good reasons to fear them?