What happens when junior roles disappear

The fact that everyone is cutting junior roles does not make it a sound strategy. It makes it baseline behavior, and baseline behavior produces no competitive advantage.

This article follows directly from my previous one, on the shifting strategic baseline.

Index

Let’s define senior expertise

“Off with their heads!”

The formalization trap

Freezing your internal knowledge

Not sure that we are getting other jobs instead

The strategic read

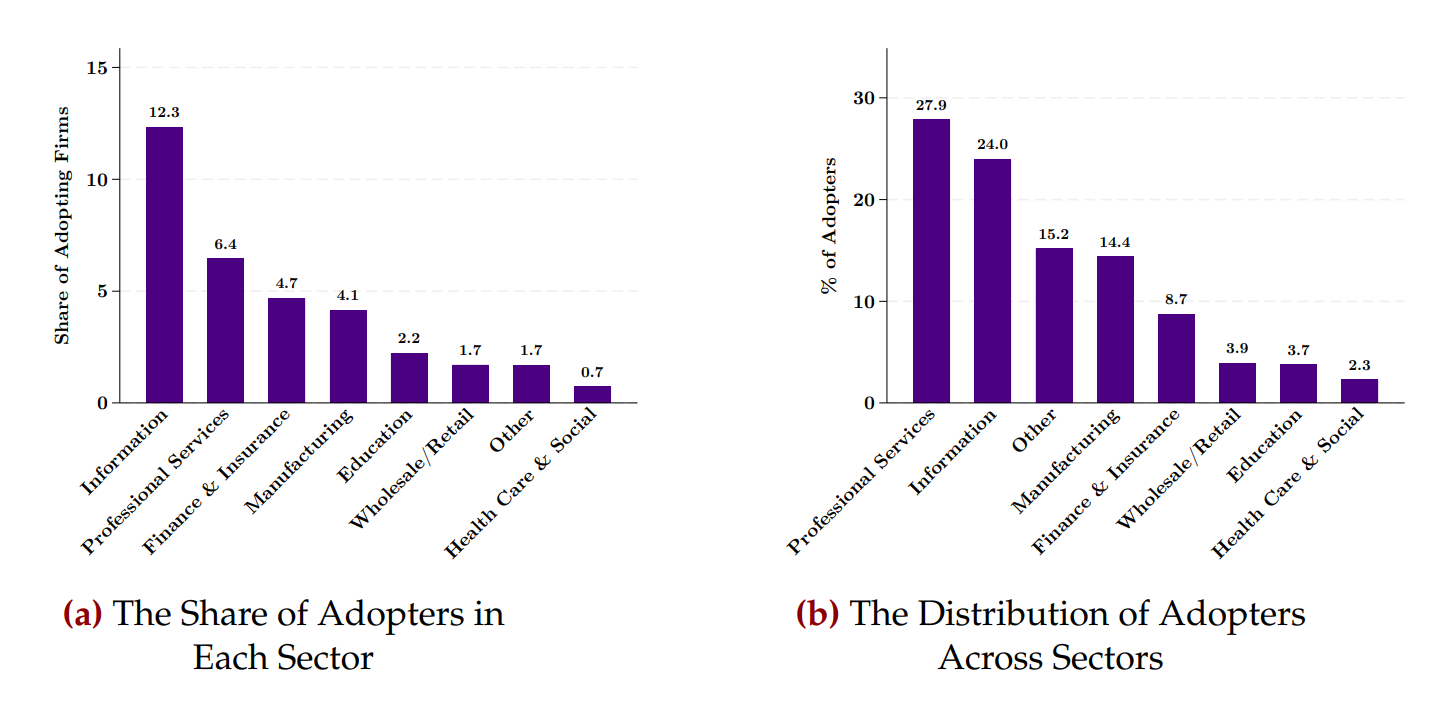

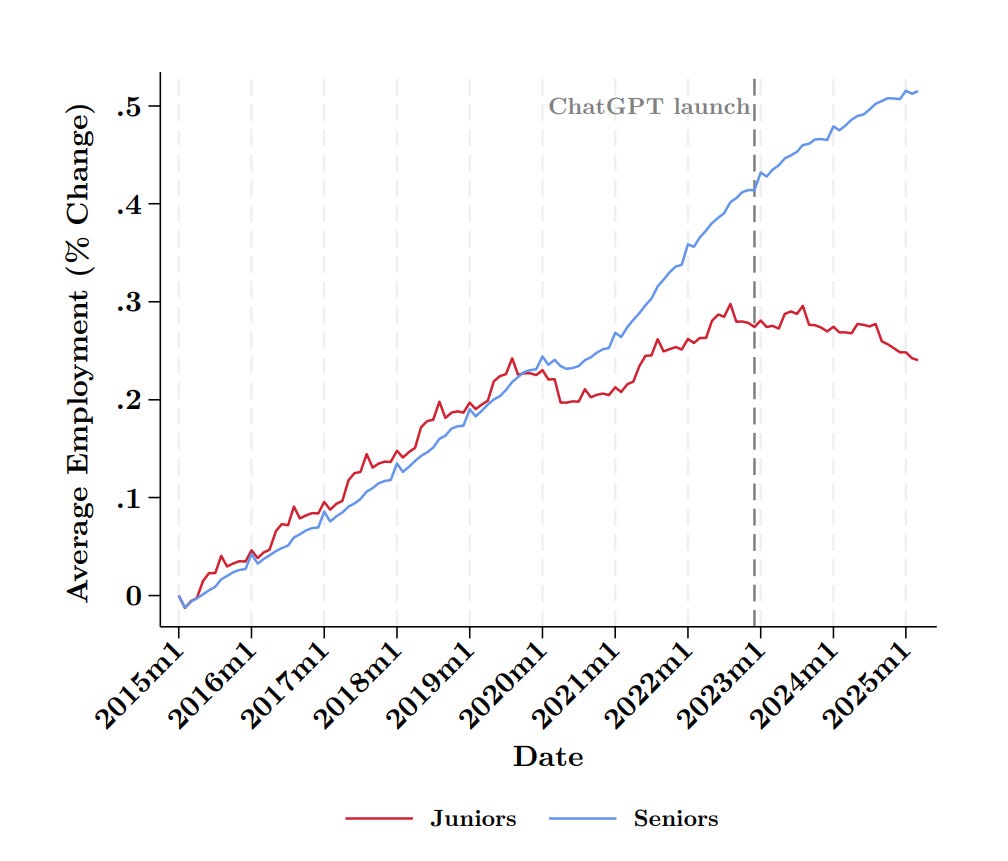

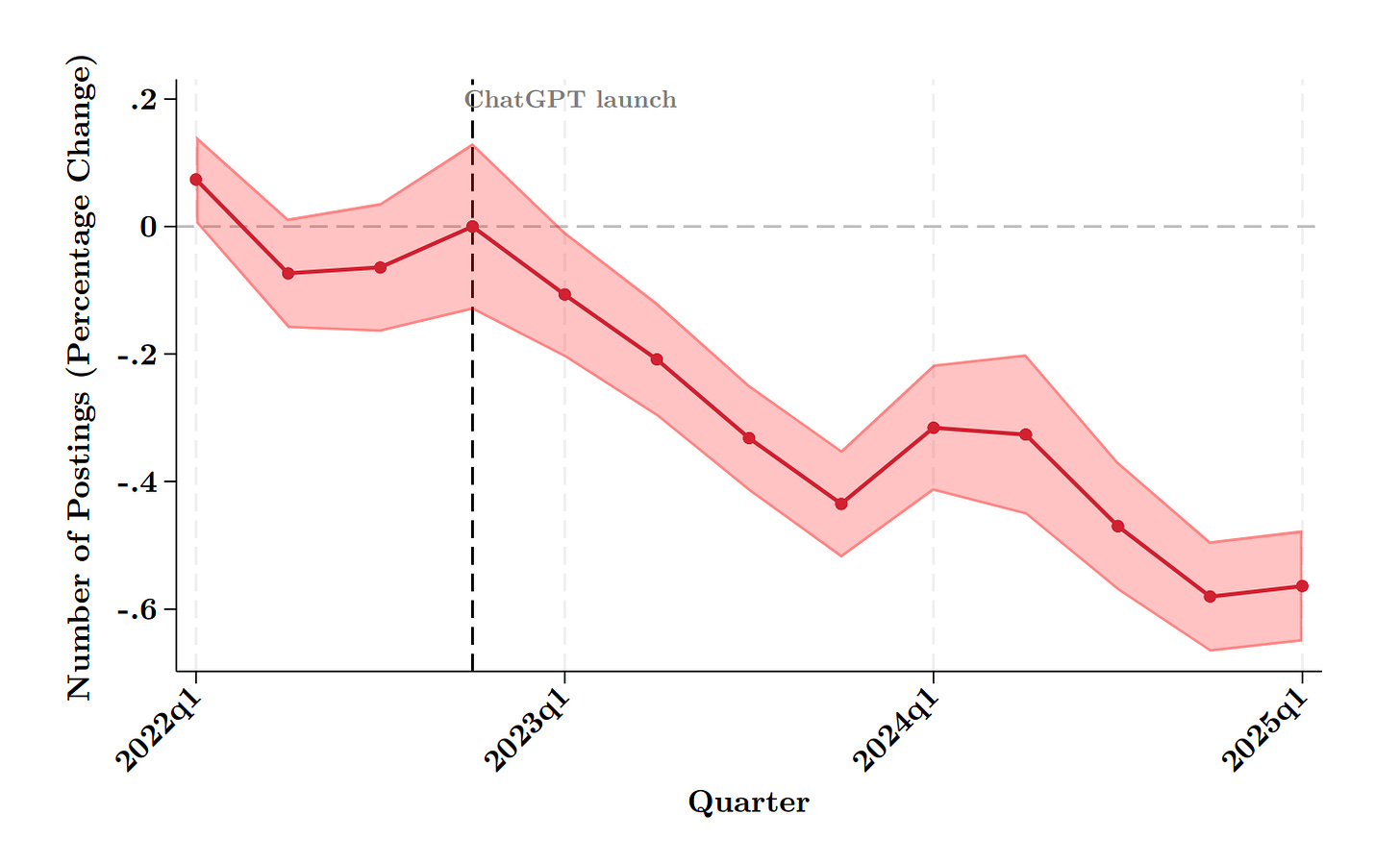

Between 2022 and 2024, entry-level design and tech roles in the United States dropped by roughly 25%. The companies cutting those positions frame it as an efficiency gain. AI handles the tasks. Headcount shrinks. Margins improve. By every short-term metric, the decision looks clean.

The problem is the timeline it’s being evaluated on.

Note: While writing this article, I found a fantastic paper: "Generative AI as Seniority-Biased Technological Change: Evidence from U.S. Resume and Job Posting Data" by Seyed M. Hosseini and Guy Lichtinger. It is a gold mine of employment data on this topic, and most of the graphs included here come from it.

Let’s define senior expertise

Senior expertise is not accumulated knowledge. It is accumulated experience with failure and correction.

You learn to read a brief by writing bad briefs and having them taken apart. You learn when a design is wrong by shipping designs that fail and understanding why. You learn to push back on a client because you spent years not pushing back and watching what happened next. None of that can be compressed, outsourced, or prompted. It is time-dependent and embodied.

“The businesses that will succeed tomorrow will not be those with the best tools, but those that have managed to maintain learning paths, moments of confrontation with reality, and spaces for slow training in judgment.”

— Bertrand Duperrin, Digital Transformation Practice Lead, 2025

The junior role was never primarily a productivity unit; it was a developmental structure. The work it produced was secondary to the experience of doing that work under conditions where failure had real consequences.

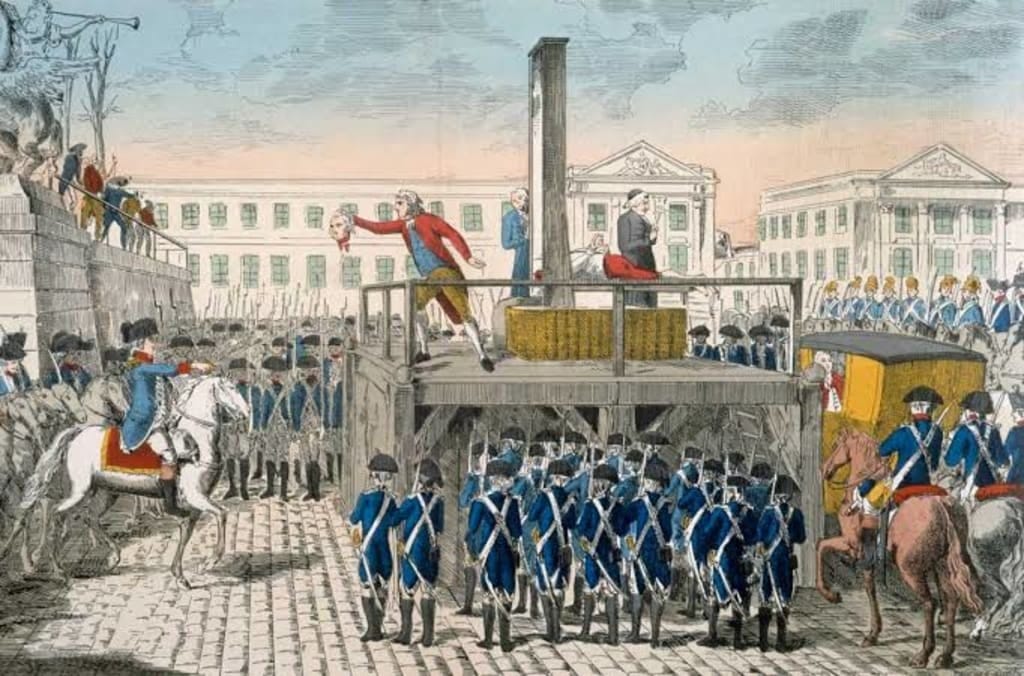

“Off with their heads!”

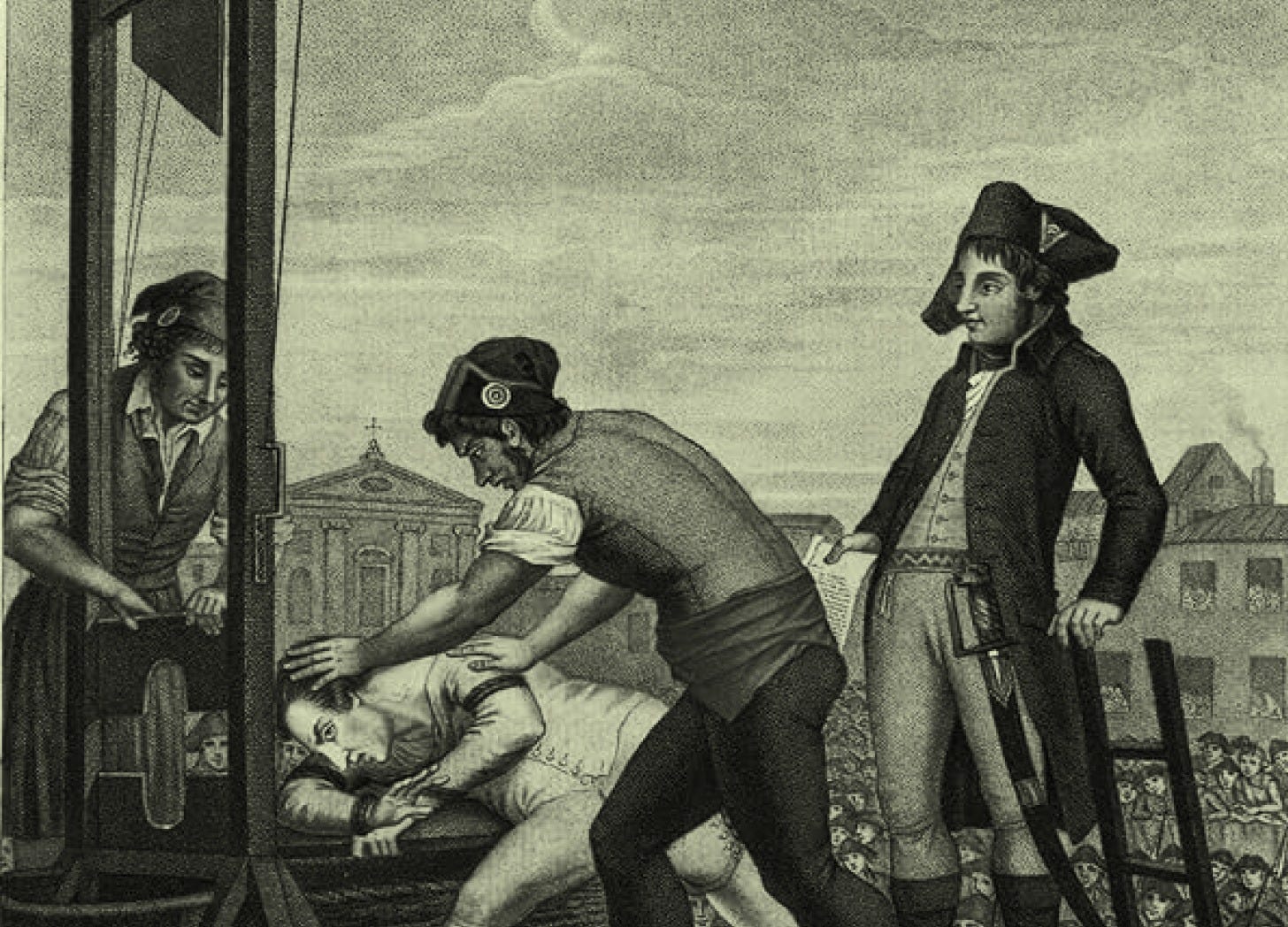

I am French, so you’re getting a French Revolution metaphor.

In 1794, Robespierre was guillotined by the same revolutionary apparatus he had built. The Terror he led had consumed the lawyers, the intellectuals, the civic architects of the Republic, precisely the people capable of defending it once the momentum shifted. When the Thermidorian Reaction ended the radical phase, there was no institutional body left to hold the Republic together. The vacuum did not stay empty. Napoleon walked into it five years later with the coup of 18 Brumaire (1799), and his authoritarian Empire followed in 1804.

The organizations cutting junior roles are using the momentum of an AI transition to justify a decision that looks rational in the short term and becomes structural in the medium one. They are spending the capital that would have defended them against what comes next.

The Robespierre frame adds a warning about timing: by the time you realize what you have lost, the people who could have defended the organization are already gone. The competitive pressure that fills the vacuum will not announce itself in advance.

The formalization trap

AI executes what can be formalized, documented, described, turned into a prompt or a process.

“Preserving certain ambiguities and gray areas, not documenting everything, not turning every corporate culture into a playbook.”

— Bertrand Duperrin, on what actually protects organizational distinctiveness, 2025

The more thoroughly you document your processes for AI to execute, the more precisely you have described your own replaceability. Organizations that moved fastest on automation did one thing consistently: they made themselves legible to their competitors. They are now running on formally documented processes that any organization with the same tools can replicate.

Freezing your internal knowledge

When you eliminate junior roles, you do not just lose future senior practitioners. You freeze your organization’s internal body of knowledge at its current state. The world does not freeze with it.

New regulations around AI-assisted decisions are already expanding in the EU and are on the way elsewhere. Industry standards will shift. Technology will keep evolving in ways that are not yet formalized, which means they cannot yet be prompted. An organization with no internal pipeline has no mechanism to develop the people who would navigate those changes from the inside.

It becomes structurally dependent on external expertise it cannot evaluate. If your team cannot assess whether a consultant’s recommendation is sound, how can you push back on it? If your leadership cannot evaluate whether an agency’s work is exceptional or merely polished, how can they specify what you actually need? You become a consumer of expertise with no purchase on its quality.

When you need them, the last few experts will dictate their terms. Millennials and Gen Z choose employers based on their values, and they remember mass layoff announcements for a long time. The remaining experts will choose where to work and whether they want to work for you at all, and you can be sure they will price accordingly.

The worst case is liability: a regulatory change, a legal challenge, a product failure that requires deep domain judgment, and nobody internally positioned to respond.

Not sure that we are getting other jobs instead

The standard response is that new roles will emerge above the AI layer. The industrial revolution eliminated certain jobs and created others. The internet eliminated travel agents and created UX designers. This is historically accurate.

But for the first time in history, the people who created the technology are very vocal about its primary purpose: cutting jobs. They are not dismissing the risk of mass unemployment; they are anticipating it. And no one can really say what the next jobs would be.

The pattern holds only if the new higher-level roles are accessible to people developing from entry points. Remove those entry points and the pipeline does not restart at a higher level. It breaks. The argument also addresses only the labor market in aggregate. It says nothing about whether your organization will have the internal capability to fill emerging roles, or whether you will be buying that capability externally at rates you cannot negotiate, from people whose quality you cannot evaluate.

The strategic read

As established in my previous article “The Baseline Advantage,” AI capability is now shared infrastructure. The floor is available to everyone. Differentiation in that environment will be organizational, built from context, judgment, and institutional knowledge that took years to develop and cannot be replicated by an API call.

“When every company can use the same AI models, the same AI-enabled tools, and the same vendor ecosystem, organizational context becomes the differentiator.”

— Ravi Kumar S, CEO of Cognizant, Harvard Business Review, 2026

The fact that everyone is cutting junior roles does not make it a sound strategy. It makes it baseline behavior, and baseline behavior produces no competitive advantage.

What produces advantage is the organization that looks at the same pressure and makes a weighted decision rather than a reflexive one.

Every AI implementation decision carries a strategic cost that does not show up in a short-term efficiency calculation. Leadership’s responsibility is to weigh that full cost. That may still mean reducing headcount. It may still mean restructuring junior roles. The argument here is not that you should never cut. It is that you should know what you are cutting before you cut it.
