Techno-solutionism is a pacifier

Our emotional support AI (etc.)

This article is a companion to the piece on the gender divide I shared earlier this month. Go check it out!

It’s not the first time humanity has surrendered its hope for a better future to an all-knowing, all-powerful entity. Throughout the ages, we have been praying, singing, and dancing our problems away. Sound familiar?

“I think A.I. is the only way we solve climate change... I don’t know [exactly how], it’s smarter than me... But if you have something that can solve the physics of things that currently seem like they are going to kill us... then that’s the path.”

Birth rates dropping? Build artificial wombs. People lonely? Deploy AI companions. Climate collapsing? Train bigger models to optimize everything. The pattern repeats because it works. It just doesn't work at fixing things. It works at deferring the harder questions about why things broke in the first place.

Whenever you hear “we can solve this with technology,” what follows is usually a strategy to avoid solving the actual problem. This doesn’t mean the tech won’t help (one can hope), but helping isn’t what the “solution” is truly designed for. It is designed to create a sense of agency where we have none.

One example: synthetic wombs

In 2024, there were 5.7 million more childless women in their primary childbearing years than historical trends predicted. Among women aged 25-29, childlessness hit 63%. Housing costs quadrupled while wages stagnated. Childcare now costs more than college tuition in most states.

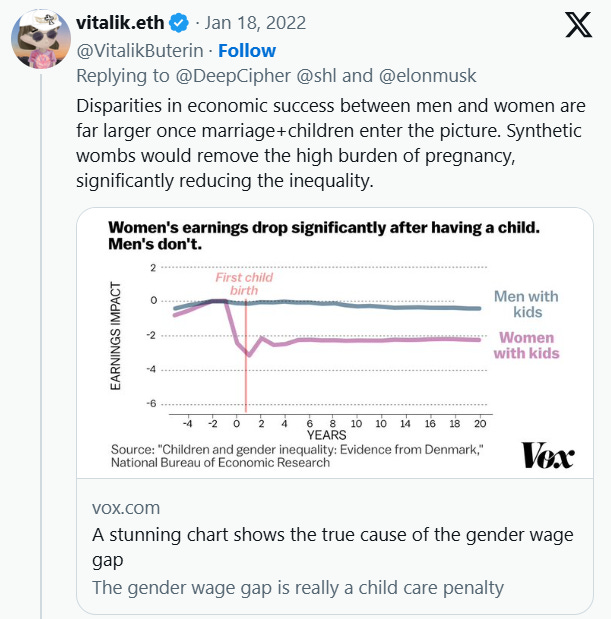

Vitalik Buterin and Brian Armstrong saw these numbers and proposed artificial wombs (ectogenesis): mechanized gestation to “reboot” birth rates.

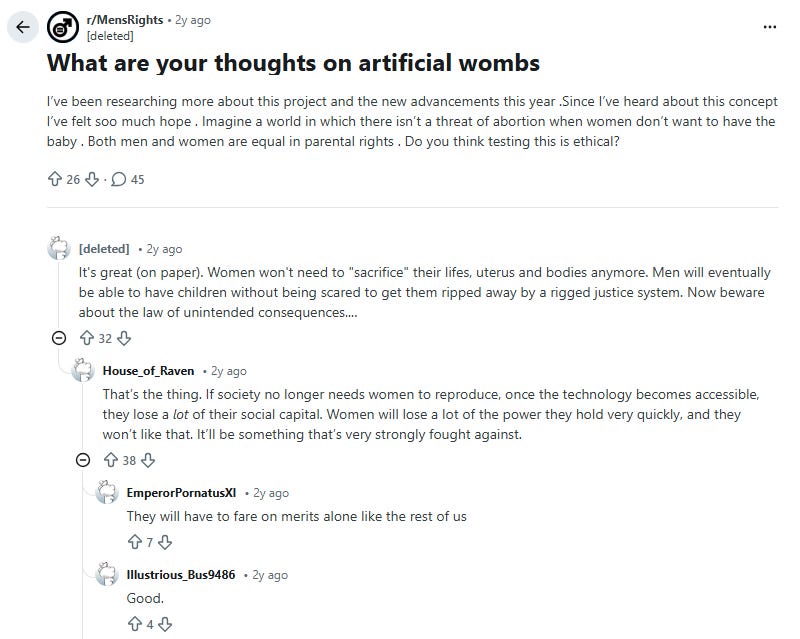

Reactions to the proposal split along predictable lines. Online manosphere communities framed it as making women “obsolete”: once artificial wombs work, women lose their leverage.

Many women looked at the threat of obsolescence and said: good. Back in 1970, Shulamith Firestone described women’s reproductive biology as a tyranny. Plenty of women today agree. They don’t see reproductive capacity as leverage. They see it as unpaid labor in an economy that treats children as an individual problem instead of a collective responsibility.

“The final step to [women’s] liberation... is the freeing of women from the tyranny of their reproductive biology by every means available.”

- Shulamith Firestone, The Dialectic of Sex (1970), Chapter 10: “The Ultimate Revolution” (available on Archive.org)

Nobody’s asking why housing became unaffordable or why the United States remains the only developed country without national paid parental leave. Those questions require confronting wealth concentration and labor rights. Building an artificial womb is conceptually simpler, even if the engineering is brutal.

How evasion becomes strategy

The pattern works because it lets the people creating problems avoid responsibility for them. AI data centers consume more electricity than some entire countries. Training large models generates carbon emissions equivalent to hundreds of transatlantic flights. Rare earth mineral extraction for hardware devastates local environments.

“Market forces and Big Tech companies often control this narrative, attempting to wriggle out of their responsibility to create technology that benefits all of us... They say creating AI might do a bit of environmental damage now, but AI is also going to come up with the tools to solve that environmental damage... This is a moment for us to push back on that.”

- Friends of the Earth, Harnessing AI for Environmental Justice (policy report)

To skeptics, the assumption that AI will eventually optimize us out of the crisis it is accelerating works as permission to keep doing the damage.

There’s a personal version of this. People struggling with social skills turn to AI chatbots that provide unconditional validation. The “always-kind” interface lets you rehearse self-awareness without vulnerability. The chatbot won’t tell you you’re being unreasonable. It won’t push back. You get the feeling of introspection without the friction that drives actual change.

When the data reflects power, not truth

Developers use a term: “ground truth.” It means the reality outside their models, what their systems try to capture. But the term assumes this reality is objective, neutral, just how things are.

Take an AI hiring system trained on historical data that reproduces sexist patterns. Advocates defend it as “unbiased” because it accurately reflects reality. The argument goes: the algorithm learned from real decisions, so it is describing the world, not creating bias. This is where the sleight of hand happens.

The ground truth being captured is the outcome of decades of discrimination. The data reflects a reality shaped by bias. Training a model on this data encodes historical inequality into a system that perpetuates it at scale while claiming mathematical objectivity.
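To make that mechanism concrete, here is a deliberately tiny, hypothetical sketch. Everything in it is invented for illustration: the records, the two groups, the thresholds (past reviewers hiring group "A" at skill 5 but demanding skill 8 from group "B"), and the helper names. No real hiring system is this simple. The "model" just memorizes the historical hire rate for each (group, skill) pair, which is enough to show the problem.

```python
from collections import defaultdict

def make_history():
    """Hypothetical past decisions: (group, skill_score, was_hired).

    Assumption for illustration: reviewers hired group "A" at skill >= 5,
    but only hired group "B" at skill >= 8.
    """
    records = []
    for group, threshold in (("A", 5), ("B", 8)):
        for skill in range(1, 11):
            records.append((group, skill, skill >= threshold))
    return records

def train(records):
    """'Learn' the historical hire rate for each (group, skill) cell by counting outcomes."""
    hires = defaultdict(int)
    totals = defaultdict(int)
    for group, skill, hired in records:
        totals[(group, skill)] += 1
        hires[(group, skill)] += int(hired)
    return {key: hires[key] / totals[key] for key in totals}

def predict(model, group, skill):
    """Recommend a hire when the learned historical rate is at least 50%."""
    return model[(group, skill)] >= 0.5

def accuracy(model, records):
    """Share of historical decisions the model reproduces exactly."""
    correct = sum(predict(model, g, s) == hired for g, s, hired in records)
    return correct / len(records)

history = make_history()
model = train(history)

print(accuracy(model, history))  # 1.0: a perfect match with past decisions
print(predict(model, "A", 6))    # True
print(predict(model, "B", 6))    # False: same skill, different outcome
```

Measured against the historical record, the model is 100% accurate, which is exactly the defense advocates reach for. The accuracy is real. What it measures is fidelity to past decisions, not fairness.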

“People are less morally outraged by algorithmic (vs. human) discrimination... The algorithmic outrage deficit is driven by the reduced attribution of prejudicial motivation to algorithms.”

- Bigman, Y. E., et al. (2023). “Algorithmic discrimination causes less moral outrage than human discrimination.” Journal of Experimental Psychology: General.

This is how you hide bias behind objectivity: when a machine trained on data shaped by privilege validates your worldview, you can treat it as scientific evidence instead of a reflection of existing power structures.

It’s not because of the tech

Psychologists call this problem displacement. When confronting an issue creates anxiety, humans redirect their attention to something more manageable. The original problem doesn’t disappear. It gets reframed as something you can control.

A closely related concept is Terror Management Theory (TMT): when people are reminded of their own mortality, or of the potential collapse of civilization (climate anxiety), they cling more tightly to their “cultural worldviews.” In this case, that worldview is “human ingenuity and technology are boundless.”

“Solutionism presumes rather than investigates the problems it tries to solve... it recasts all complex social situations either as neatly defined problems with definite, computable solutions or as transparent and self-evident processes that can be easily optimized.”

- Evgeny Morozov, To Save Everything, Click Here (2013)

Artificial wombs are easier to scope than housing policy. An AI companion has clearer success metrics than social infrastructure. Carbon capture is more tractable than regulatory reform. The technology becomes what behavioral specialists call a “soothing mechanism”: not a solution, but a way to reduce the discomfort of facing problems outside your domain.

Designing the safety blanket

I am a designer, and this is how problem-solving works when you’re trained to think in systems. You look at a messy social problem and instinctively break it down into components you can engineer. Housing unaffordable? That’s a supply problem. Loneliness epidemic? That’s a connection problem. Climate crisis? That’s an optimization problem. Each reframing makes the problem feel solvable, which makes it feel worth working on.

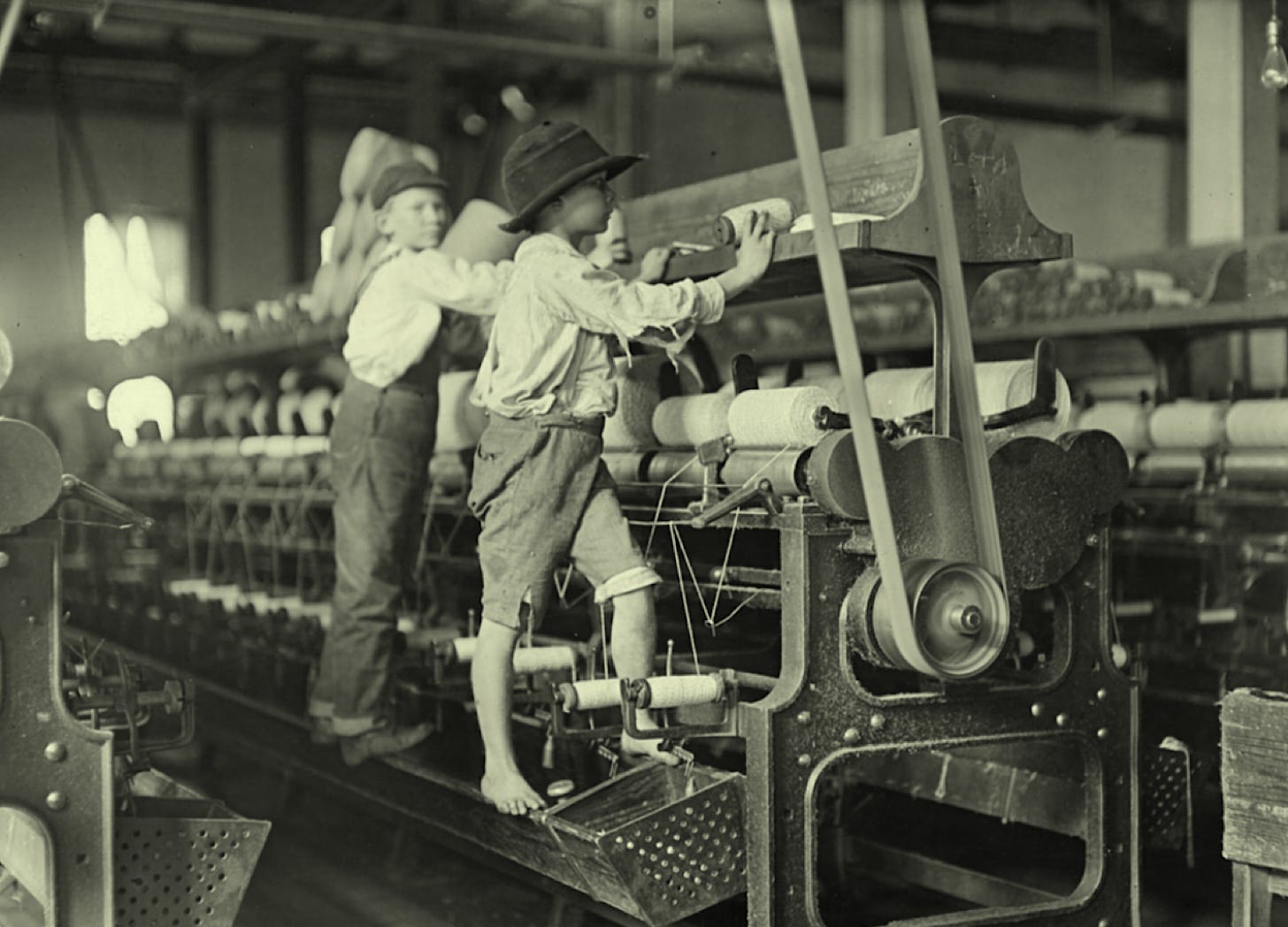

The displacement is almost invisible from the inside. It doesn’t feel like avoiding the hard problem. It feels like tackling the part that’s actually tractable. Religion promised divine intervention. Alchemy promised transmutation. Eugenics promised improvement through selective breeding. Each generation finds a lens that transforms intractable social dynamics into problems it knows how to solve.

Ours is technology. It’s appealing because it offers measurable progress. You can benchmark an artificial womb. You can A/B test an AI companion. You can optimize carbon capture efficiency. You can’t easily measure whether you’ve addressed the economic structures that made housing unaffordable or the social dynamics that created isolation. So you work on what you can measure.

We’re doing what we’re trained to do: find the tractable subproblem, build the tool, iterate on the metrics. The reframe happens automatically. Your brain does it before you notice, converting “this is complex and political” into “this is technical and solvable.”

The algorithm becomes both the project and the permission structure. You get to feel productive while working on something adjacent to the actual problem. The technology is the soothing mechanism.