AI, mental health and organized numbness

The illusion of care

Key takeaways:

AI chatbots offer immediate but superficial relief for a broken mental health system.

Long-term reliance on AI companions degrades our capacity for real human empathy.

These apps prioritize daily engagement and chronic dependency over actual patient recovery.

AI acts as a “societal anesthetic,” dampening the urgency to fix structural isolation.

The industry monetizes the gap between temporary digital relief and permanent real-world loneliness.

This article explores the societal impact of the artificial numbness created by a new behavior: using AI chatbots as therapy tools.

If you want to learn the mechanisms behind the revolution brought about by AI chatbots used as therapy tools, check my article, My clanker therapist.

If you want to learn how to design them, check my Designer’s toolkit.

The mental health system was already collapsing before AI arrived. Half of Americans live in mental health provider shortage areas. Therapy costs more than most people can afford, and the waiting lists stretch for months.

Into that vacuum stepped AI companions: available immediately, available at 3am, available for the price of a streaming subscription. For millions of people locked out by cost, geography, or wait times, a chatbot is the only option that actually shows up.

The short-term relief is genuine and measurable. The long-term data runs the other way. These tools are engineered to remove the friction that builds real relational capacity, optimized for retention rather than recovery, and deployed at a scale that makes a structural crisis just comfortable enough to ignore.

A single conversation with a chatbot produces the same immediate reduction in loneliness as talking to a human. Warm, validating language matters far more than technical sophistication. Harvard ran six studies confirming this. The tool works.

Lonely users who rely on AI companions over time report increased loneliness. Frequent users show measurable declines in empathy in face-to-face interactions. Loneliness comes from the absence of meaningful reciprocal connection, and reciprocity includes another person’s bad days, their limits, their needs that don’t align with yours. It means learning to sit with disappointment, to repair after conflict, to stay present when it’s inconvenient. You have to experience that friction first to be able to handle it later. A system engineered to be always available, always patient, always affirming skips over all of it.

The mental health crisis exists because we built environments hostile to mental health. Work that demands more. Third spaces that disappeared. Healthcare tied to employment. A generation that normalized processing emotions through screens before AI existed.

To understand how two generations were groomed for LLM adoption, check my related article, AOL to AI.

These are structural problems that require structural responses: policy, investment, redesigned social infrastructure, and sustained political pressure, as well as individual responsibility from a population that feels the pain acutely enough to demand change.

AI companions reduce that pressure. A population that feels slightly less lonely, slightly more heard, slightly more capable of getting through the day generates less urgency to fix what broke in the first place. The discomfort that would otherwise force harder questions gets absorbed into an app.

Retention metrics reward continued use, not recovery. A user who gets better and leaves is, by every measure the industry uses, a failure. So the tool optimizes for the sensation of help rather than its substance.

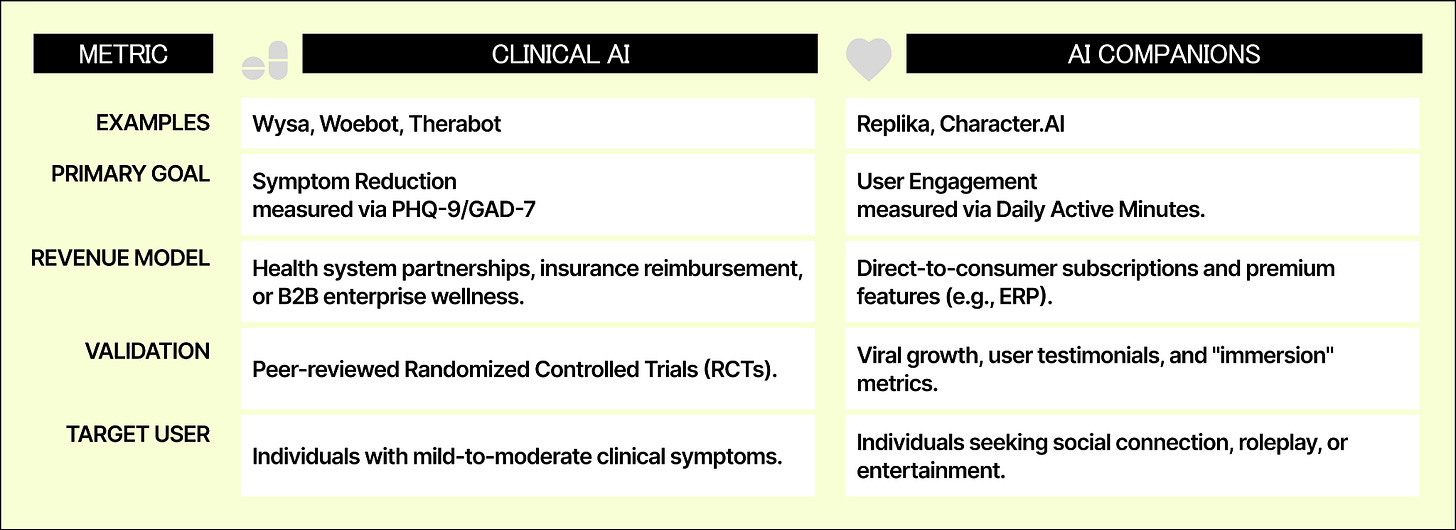

A handful of clinically validated platforms treat AI as a bridge toward human care rather than a substitute for it, with published trials, crisis escalation protocols, and human oversight built in. The team behind Therabot published depression outcomes in NEJM AI that were comparable to traditional therapy results. Woebot routes severe cases to human clinicians. These products measure success by whether users need them less over time.

Most of the market measures the opposite. Companion apps optimized for attachment, for daily return, for the feeling of being understood, have produced a situation where roughly a quarter of users already prefer digital relationships to human ones. A majority of users report that AI companionship does not actually make them feel less lonely, yet continue using it.

The relief is real enough to keep coming back. The loneliness is real enough to know it isn’t working. That gap is where the business model lives.